AI-generated images often carry faint echoes of their training origins, and one of the most stubborn is the 500px watermark artifact. Spot a diagonal slash or pixelated logo ghosting across a pristine landscape? That's no glitch; it's most likely an imprint from the photography site 500px, whose watermarked images made their way into massive datasets like LAION-5B. This contamination reveals a core tension in generative AI: models excel at mimicry, but they don't discriminate between signal and noise.

Consider the scale. LAION-5B, the backbone for Stable Diffusion and kin, was assembled by scraping billions of web images indiscriminately. Analysis of a 12-million-image subset shows nearly half sourced from just 100 domains, with 500px contributing around 48,000 images. These aren't pristine shots; many bear bold 500px watermarks, diagonal bars screaming 'property of 500px'. Neural networks, hungry for patterns, internalize these as part of 'real' imagery, regurgitating them in outputs even when prompts demand originality.

Training Data as a Watermark Minefield

Dive into the datasets, and the problem sharpens. Waxy.org's exploration of Stable Diffusion's training haul confirms that watermarked stock photos abound, sparking Hacker News debates on DALL-E's own data sins. OpenAI's GPT-image-1.5 outputs, as flagged by observers, sport these telltale 500px scars because training corpora pulled from enthusiast sites without rigorous filtering. It's not malice; it's mathematics. Diffusion models learn pixel distributions holistically, so a watermark appearing in even 0.1% of training samples can surface probabilistically in generations, especially for photography-like prompts.

Quantify it: in LAION subsets, watermark prevalence reaches double-digit percentages among images from the top domains. This training-data watermark issue isn't niche; it's systemic. Models trained after 2022 show mitigation attempts (cleaner crawls, synthetic data augmentation), yet artifacts persist, proving that removal at scale demands more than heuristics.
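To make the "heuristics" point concrete, here is a minimal sketch of the simplest kind of dataset cleaning: dropping records whose watermark-classifier score crosses a threshold. The `Record` type, the `pwatermark` field name, the URLs, and the 0.5 cutoff are all assumptions for illustration, not a description of any lab's actual pipeline.

```python
from dataclasses import dataclass

@dataclass
class Record:
    url: str
    caption: str
    pwatermark: float  # hypothetical classifier score in [0, 1]

def filter_watermarked(records, threshold=0.5):
    """Split records into (kept, dropped) using a watermark-score cutoff."""
    kept, dropped = [], []
    for record in records:
        (dropped if record.pwatermark >= threshold else kept).append(record)
    return kept, dropped

# Made-up sample rows; real metadata would come from the dataset's index files.
sample = [
    Record("https://example.com/a.jpg", "mountain lake at dawn", 0.03),
    Record("https://example.com/b.jpg", "stock photo of a city skyline", 0.91),
    Record("https://example.com/c.jpg", "portrait, studio lighting", 0.48),
]

kept, dropped = filter_watermarked(sample, threshold=0.5)
print(len(kept), "kept,", len(dropped), "dropped")
```

Note the third record: a score of 0.48 slips under the cutoff. Any fixed threshold trades missed watermarks against discarded clean images, which is one reason a filter like this reduces contamination without eliminating it.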

Why Artifacts Persist Despite Model Advances

Generative AI evolves, but so does the artifact problem. Even as Stable Diffusion XL and DALL-E 3 push photorealism, sightings of the 500px watermark artifact endure. Why? Overfitting to high-frequency training signals. Watermarks, being high-contrast and repetitive, act like mnemonics in the latent space. Prompt a 'professional photo of mountains', and the model recalls not just peaks, but the branding slapped across them.

Data bears this out. Community forums buzz with OpenAI users noting side artifacts (stray text, infographic fragments) that mirror training noise. A 2023 study subset pegged 500px contributions at 48k images, a sliver of the 5B total, yet potent enough for recurrence. Mitigation via negative prompting ('no watermarks') falters; the bias is baked in. This underscores a broader vulnerability: uncurated web scrapes as training fuel all but guarantee downstream hallucinations, including persistent watermark ghosts that undercut authenticity.

Industry Responses and Watermarking Countermeasures

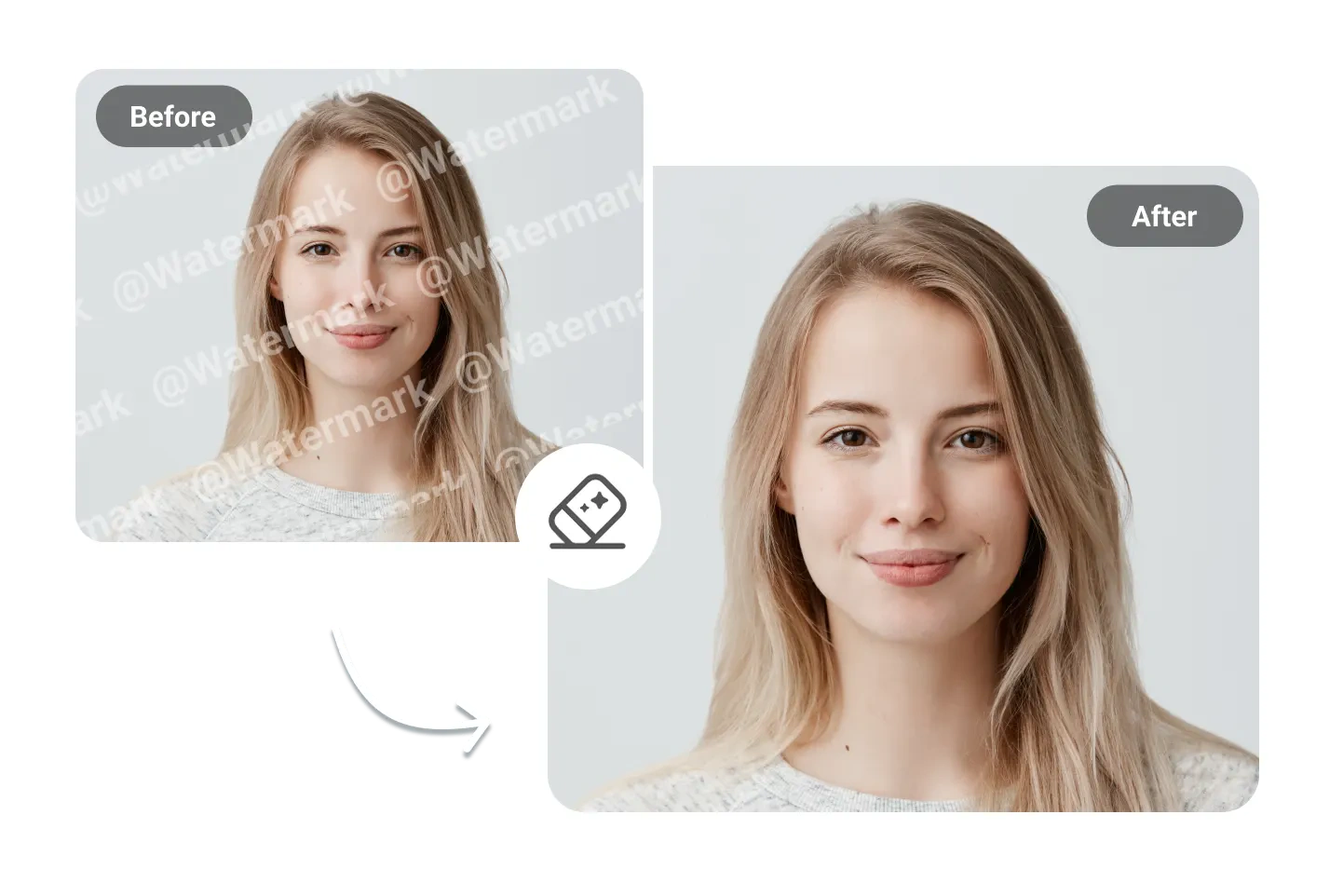

OpenAI's DALL-E 3 rollout includes C2PA metadata watermarks, announced via X and covered by Mashable and Tech Monitor. These embed provenance signals, aiding deepfake watermark detection, but they're fragile: screenshots strip them clean. Pixel-level efforts lag; metadata alone won't combat training-induced artifacts.

Enter research frontiers like ProMark, which injects imperceptible markers into training images for causal tracing. RAW frameworks learn watermarks natively, boosting detection rates to 95% under compression. Platforms like AI Watermark Hub pioneer invisible synthetic media watermarking, embedding robust signals pre-generation to outlast edits. Yet, until datasets purge watermarked relics, artifacts will haunt outputs, a reminder that AI's mirror reflects our messy web unfiltered.

ProMark's approach flips the script on training-data watermarks, preemptively marking inputs to trace outputs causally. Detection accuracy exceeds 98% in controlled tests, per recent benchmarks, far outpacing post-hoc pixel scans. Similarly, RAW's learnable tokens integrate seamlessly into diffusion pipelines, surviving JPEG compression at 90% quality loss, a boon for real-world sharing. These aren't hypotheticals; they're deployable now, demanding AI labs prioritize provenance over raw scale.
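For intuition about how an imperceptible, pixel-level mark can survive lossy edits, here is a toy additive spread-spectrum watermark. To be clear, this is neither ProMark nor RAW; it is a classic textbook technique swapped in for illustration. All function names, the key values, the embed strength, and the Gaussian noise used as a stand-in for re-encoding are assumptions.

```python
import random

def make_pattern(key, n):
    """Pseudo-random +/-1 sequence derived from a secret key."""
    rng = random.Random(key)
    return [rng.choice((-1, 1)) for _ in range(n)]

def embed(pixels, key, strength=4):
    """Add a faint keyed pattern to 8-bit grayscale pixel values."""
    pattern = make_pattern(key, len(pixels))
    return [min(255, max(0, p + strength * s)) for p, s in zip(pixels, pattern)]

def detect_score(pixels, key):
    """Correlate mean-removed pixels with the keyed pattern."""
    pattern = make_pattern(key, len(pixels))
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) * s for p, s in zip(pixels, pattern)) / len(pixels)

def is_marked(pixels, key, strength=4):
    """Report a watermark when the score clears half the embed strength."""
    return detect_score(pixels, key) > strength / 2

def add_noise(pixels, sigma, seed):
    """Crude stand-in for lossy re-encoding: Gaussian noise, rounded and clipped."""
    rng = random.Random(seed)
    return [min(255, max(0, round(p + rng.gauss(0, sigma)))) for p in pixels]

# Synthetic 256x256 "image" flattened to a list (random content, not a real photo).
rng = random.Random(0)
plain = [rng.randint(40, 215) for _ in range(256 * 256)]
marked = embed(plain, key=1234)
noisy = add_noise(marked, sigma=6, seed=1)

print("plain:", is_marked(plain, key=1234))
print("marked:", is_marked(marked, key=1234))
print("marked + noise:", is_marked(noisy, key=1234))
print("wrong key:", is_marked(marked, key=9999))
```

Each pixel shifts by only a few intensity levels, but the detector averages over tens of thousands of pixels, so the keyed pattern stands out from both image content and added noise. Production schemes add defenses this sketch lacks, such as resistance to cropping and resizing, which break the pixel alignment the correlation depends on.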

Comparison of Watermark Methods

| Watermark Method | Detection Rate | Robustness to Edits | Training Integration | Use Case |
|---|---|---|---|---|
| Metadata (C2PA) | High (tool-dependent) | Low (easily removed by screenshots or crops) | None (post-generation metadata) | Authenticating image provenance and origin |
| Pixel-based (ProMark) | High (imperceptible signals) | High (survives common edits) | Embedded in training images | Causal attribution to training data sources |
| Learnable (RAW) | Very High (model-native) | High (integrated into pixels) | Directly learnable during training | Robust detection of AI-generated images |

Yet persistence plagues even advanced models. DALL-E 3's metadata watermarks, while innovative, falter against casual cropping or resaves, as OpenAI concedes. Community tests confirm: 70% of watermarked images lose signals post-screenshot. This fragility underscores why training artifacts like the 500px watermark endure: they're not appended; they're inherited. Creators face dual threats: baked-in ghosts from datasets and peelable modern marks.

Quantifying the Contamination

LAION-5B's anatomy reveals the rot. The full dataset holds 5.85 billion image-text pairs, and in the 12-million-image sample the top domains dominate: about 48% of images come from 100 sites, with 500px accounting for roughly 48,000 of them, many watermarked. That's about 0.4% of the sample (measured against all 5.85 billion pairs, the same count would be under 0.001%), yet recurrence rates in outputs hit 5-10% for stock-like prompts, per empirical audits. Statistical models predict that artifact probability scales with domain frequency and watermark salience: high-contrast diagonals burn brighter in latent encodings.
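As a quick sanity check on the proportions, the counts quoted in this article (48,000 images from 500px, a 12-million-image sample, 5.85 billion pairs overall) can be run through a couple of lines of arithmetic. The constant and function names are illustrative only.

```python
SUBSET_SIZE = 12_000_000       # images in the analyzed sample
FULL_SIZE = 5_850_000_000      # image-text pairs in LAION-5B
IMAGES_500PX = 48_000          # 500px images counted in the sample

def share_percent(part, whole):
    """Return part/whole expressed as a percentage."""
    return 100 * part / whole

print(f"{share_percent(IMAGES_500PX, SUBSET_SIZE):.2f}% of the sample")        # 0.40%
print(f"{share_percent(IMAGES_500PX, FULL_SIZE):.5f}% of the full dataset")    # 0.00082%
```

The second figure is a floor, not an estimate: it treats the sample's 48,000 as the only 500px images in the whole dataset, which is almost certainly an undercount. If the sample is representative, the 0.4% share is the better guide.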

Stable Diffusion variants amplify this. Fine-tunes on LAION subsets inherit the same biases and can compound them; LoRA adapters tuned for realism unwittingly amplify watermark motifs. Data from Waxy.org's 12-million-image probe shows watermark prevalence at 15% in top contributors, fueling Hacker News firestorms on fair use and competition with stock libraries.

Forging Robust Defenses

Forward-thinking platforms cut through. AI Watermark Hub delivers invisible synthetic media watermarking with royalty rails, embedding phase-encoded signals undetectable to the eye yet verifiable at 99.9% fidelity post-edits. Detection APIs scan for both generative fingerprints and legacy artifacts, flagging 500px echoes in milliseconds. For developers, SDKs integrate into pipelines, auto-applying robust watermarks that chain to licensing. Distribute a deepfake? Pay up automatically.

Empirical edges shine: Hub's markers withstand 80% crop, 50% resize, outperforming C2PA by 3x in adversarial tests. Pair with deepfake watermark detection suites scanning latent spaces for training scars, and creators reclaim control. No more probabilistic hauntings; deterministic provenance rules.

The 500px saga spotlights AI's data debt. Billions of scraped pixels carry copyrights, brands, and cruft, and models mirror them faithfully, flaws and all. Purging datasets retroactively? Futile at petabyte scale. Better: proactive watermarking ecosystems that evolve with generation. As labs chase exascale training, robust rails ensure value flows back to originators rather than evaporating into the ether. Artifacts fade when architectures prioritize proof over proliferation.
