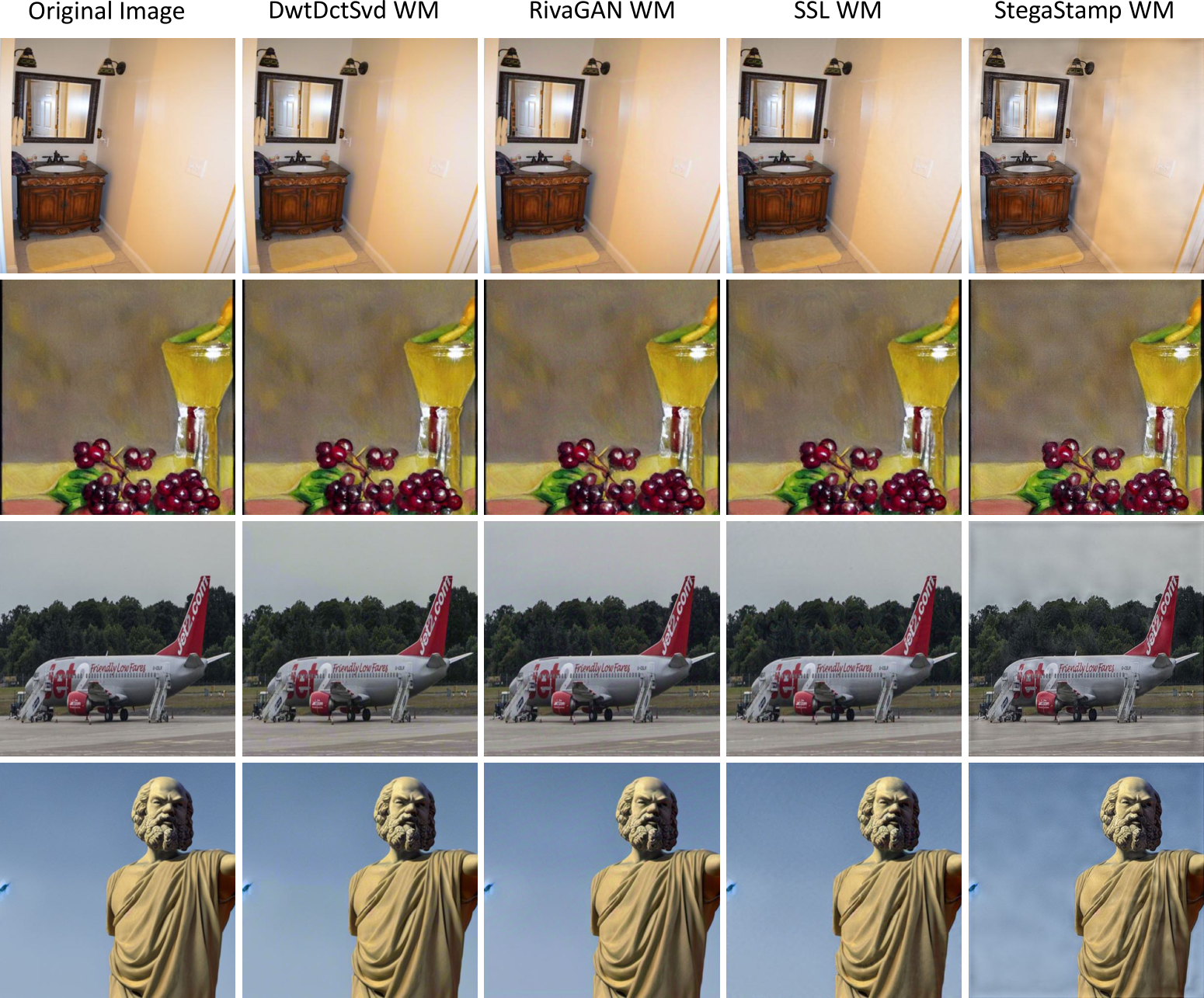

The deluge of synthetic images and videos powered by generative AI has turned content authenticity into a battlefield. As creators and platforms grapple with deepfakes infiltrating social feeds and news streams, robust AI watermarking emerges as the pragmatic shield. These techniques embed imperceptible markers deep within media, designed to withstand removal attempts that crop, compress, or regenerate content. Preventing AI watermark removal isn't just technical wizardry; it's essential for trust in digital ecosystems, from royalty rails for synthetic content to forensic verification.

Traditional watermarking falters under modern assaults. Simple overlays or frequency-domain signals crumble when adversaries deploy content-aware edits or neural networks trained to excise markers. Research from sources like Imatag highlights how losing over 50% of an image erases most signals, while PLOS notes evolving forgery tools demand tougher detectors. This vulnerability underscores the shift toward synthetic media watermarking that integrates natively with AI generation pipelines, making removal as futile as erasing a shadow.

Challenges in AI Watermark Removal Prevention

Attackers exploit generative models to rebuild images without their watermarks, a process called regeneration. Compression artifacts, geometric transforms, and adversarial perturbations further degrade signals. NIH research stresses the security threat posed by deepfakes, pushing for detectors that stay robust against attacks on deep image watermarking. Market insights from Global Market Insights project explosive growth in AI watermarking solutions, with players like IMATAG extending SaaS tools for deepfake monitoring. Yet scalability remains a hurdle; methods must handle real-time video streams without bloating compute costs.

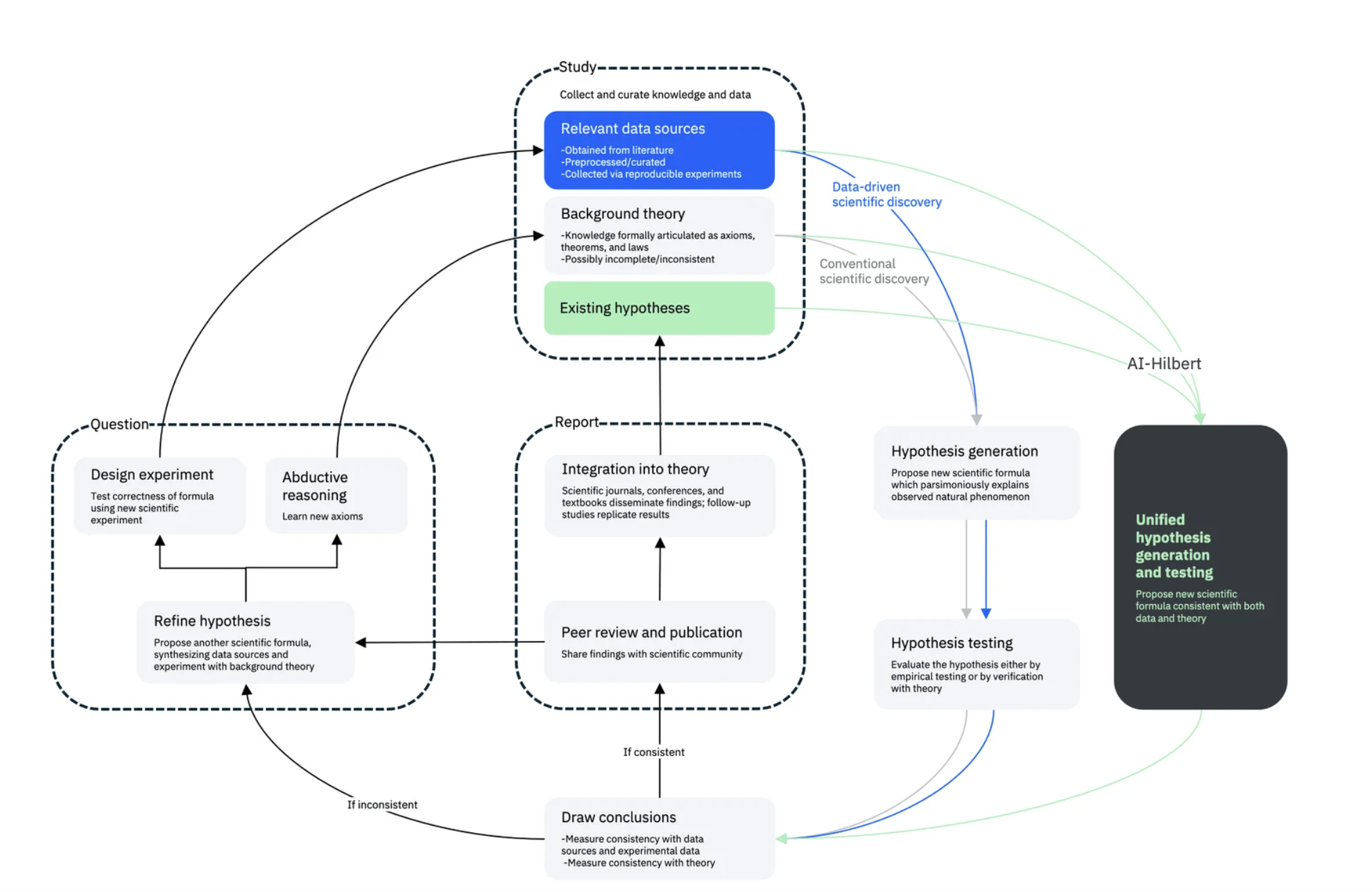

Pragmatically, no single approach suffices. Hybrid strategies blending detection with embedding prevail, as ACM surveys reveal in scalable DeepFake frameworks. Opinion: Relying solely on post-hoc detection invites arms races; proactive, removal-resistant embedding flips the script.

Lexical Bias Watermarking: Biasing Generation for Resilience

Lexical Bias Watermarking (LBW) targets autoregressive models by skewing token selection toward a predefined "green list" of tokens during generation. This embeds the watermark into the token maps themselves, thwarting regeneration attacks in which adversaries re-prompt models. The 2025 arXiv paper (2506.01011) demonstrates LBW's edge: it preserves visual fidelity while boosting detection rates after manipulation. For videos, extending LBW to frame sequences could lock in temporal consistency, which matters if imperceptible watermarks are to keep pace with deepfakes.
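
The green-list idea is easier to see in a toy sampler. The sketch below is a minimal illustration, not the paper's implementation: the codebook size, the bias strength, the key-derived fixed green list, the random stand-in logits, and the z-score detector are all assumptions made for this example.

```python
import hashlib
import math
import random

VOCAB_SIZE = 256      # hypothetical codebook size
GREEN_FRACTION = 0.5  # assumed share of the codebook placed on the green list
BIAS = 4.0            # assumed logit boost applied to green tokens


def green_list(key: str) -> set[int]:
    """Derive a fixed green list from a secret key (an assumption of this sketch)."""
    seed = int.from_bytes(hashlib.sha256(key.encode()).digest()[:8], "big")
    rng = random.Random(seed)
    return set(rng.sample(range(VOCAB_SIZE), int(VOCAB_SIZE * GREEN_FRACTION)))


def sample_token(logits: list[float], green: set[int], rng: random.Random) -> int:
    """Boost green-token logits by BIAS, then sample from the softmax."""
    biased = [l + BIAS if i in green else l for i, l in enumerate(logits)]
    top = max(biased)
    weights = [math.exp(b - top) for b in biased]  # numerically stable softmax weights
    return rng.choices(range(len(weights)), weights=weights, k=1)[0]


def generate(n_tokens: int, green: set[int], rng: random.Random, watermark: bool = True) -> list[int]:
    """Sample a token map; random logits stand in for a real autoregressive model."""
    tokens = []
    for _ in range(n_tokens):
        logits = [rng.gauss(0.0, 1.0) for _ in range(VOCAB_SIZE)]
        tokens.append(sample_token(logits, green if watermark else set(), rng))
    return tokens


def z_score(tokens: list[int], green: set[int]) -> float:
    """Measure how far the green-token count exceeds what chance would give."""
    n = len(tokens)
    hits = sum(t in green for t in tokens)
    expected = GREEN_FRACTION * n
    std = math.sqrt(n * GREEN_FRACTION * (1 - GREEN_FRACTION))
    return (hits - expected) / std
```

Calling `z_score(generate(256, green_list("demo-key"), random.Random(0)), green_list("demo-key"))` should give a score far above what unbiased sampling produces, which is the statistical gap a detector would threshold on. A real deployment would read the tokens back from the image through the model's tokenizer, a step this sketch leaves out.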

Breakthrough Robust Watermarking Techniques

- Lexical Bias Watermarking (LBW): Biases token selection toward a green list in autoregressive image models for seamless watermark embedding in token maps. Resists regeneration attacks. (arXiv 2506.01011)

- Tree-Ring Watermarks: Embeds patterns into diffusion models' initial noise vector in Fourier space. Invariant to crops, rotations, flips, and more for high robustness. (arXiv 2305.20030)

- Semantic Aware Image Watermarking (SEAL): Embeds semantic image info directly into the watermark for distortion-free verification without key databases. Strong against forgeries. (arXiv 2503.12172)

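LBW's strength lies in how light the tweak is: it biases sampling at generation time, so it can plug into autoregressive image generators without retraining. Diffusion-based systems such as Stable Diffusion do not sample discrete tokens this way, so they call for noise-space methods like Tree-Ring instead. Drawback? Green list curation demands nuance to dodge artifacts. In practice, pair it with royalty-rail trackers for synthetic content to enable monetization after verification.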

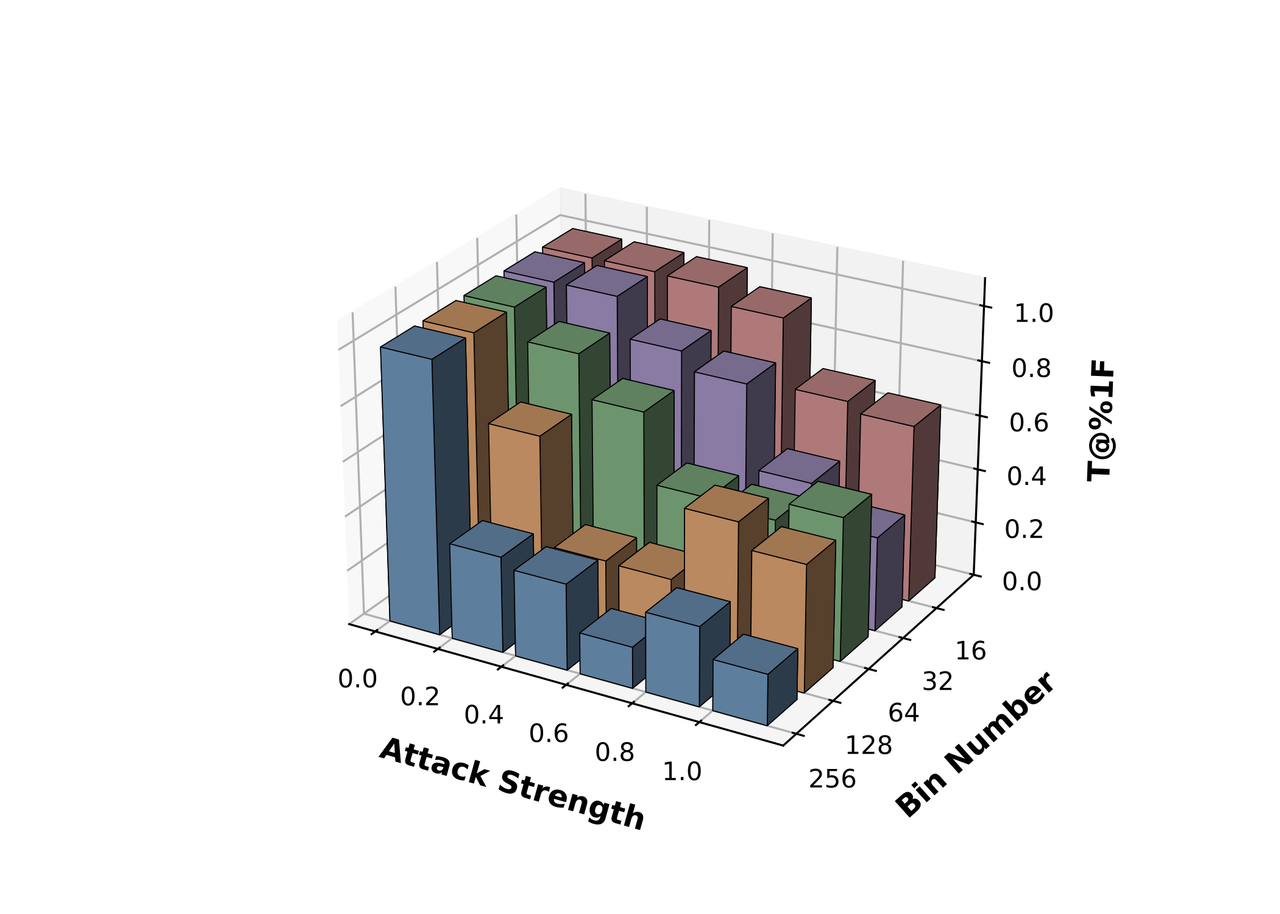

Tree-Ring Watermarks: Fourier Invariance Against Transformations

Tree-Ring watermarks subtly steer diffusion models' sampling by patterning the initial noise vector in Fourier space. This renders the mark invariant to convolutions, crops, dilations, flips, and rotations - common removal vectors. The 2023 arXiv work (2305.20030) proves its mettle across benchmarks, detecting watermarks even after heavy edits. For synthetic videos, propagating tree-rings across frames ensures clip-wide robustness, addressing TrueFan AI's call for transform-domain signals in regions like India by 2026.
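
To make the Fourier-space idea concrete, here is a minimal NumPy sketch. It only shows the noise-level step: writing a key that is constant along concentric rings into the spectrum of the initial noise, then measuring how close a candidate noise array is to that key. Everything about the diffusion model, including the inversion needed to recover the initial noise from an image, is omitted. The function names, ring radii, and the magnitude-based distance are assumptions of this sketch rather than the paper's exact detector.

```python
import numpy as np


def ring_key(size: int, radii: range, seed: int):
    """Build a key spectrum that is constant on each concentric ring, plus its mask.

    Radii must stay below size // 2 so the rings are symmetric about the centre.
    """
    rng = np.random.default_rng(seed)
    yy, xx = np.indices((size, size))
    dist = np.hypot(yy - size // 2, xx - size // 2)
    key = np.zeros((size, size))
    mask = np.zeros((size, size), dtype=bool)
    for r in radii:
        ring = (dist >= r) & (dist < r + 1)
        key[ring] = size * rng.normal()  # scaled to the typical spectrum magnitude
        mask |= ring
    return key, mask


def embed(noise: np.ndarray, key: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Overwrite the ring region of the centred spectrum with the key values."""
    spec = np.fft.fftshift(np.fft.fft2(noise))
    spec[mask] = key[mask]
    # Real ring values on a centre-symmetric mask keep the spectrum Hermitian,
    # so the inverse transform is real up to rounding error.
    return np.fft.ifft2(np.fft.ifftshift(spec)).real


def ring_distance(noise: np.ndarray, key: np.ndarray, mask: np.ndarray) -> float:
    """Mean absolute gap between spectrum and key magnitudes inside the rings."""
    spec = np.fft.fftshift(np.fft.fft2(noise))
    return float(np.mean(np.abs(np.abs(spec[mask]) - np.abs(key[mask]))))
```

With `key, mask = ring_key(64, range(4, 12), seed=7)`, the distance for `embed(noise, key, mask)` sits near zero and stays there after `np.rot90`, because a quarter-turn only permutes positions within each ring and adds a phase. Unmarked noise lands far away. Comparing magnitudes rather than complex values is a simplification chosen here so that the rotation case is exact.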

Creatively, envision tree-rings as digital DNA: mutate the host, the pattern persists. This opinionated pick outperforms pixel-based rivals in wild scenarios, per ScienceDirect overviews on secure distribution. Yet, detection hinges on clean Fourier decoding; noise floors challenge low-signal clips. Combine with blockchain for provenance, as MDPI reviews advocate in reality-AI distinction.

Two-stage frameworks amplify this by augmenting noise with Fourier patterns tied to noise groups, slashing false negatives under cropping and compression (arXiv 2412.04653). These layered defenses signal watermarking's maturation, prioritizing AI watermark removal prevention in an era where deepfakes blur realities.

SEAL Watermarking: Content-Tied Verification Without Keys

Semantic Aware Image Watermarking (SEAL) takes a content-savvy leap, weaving image semantics directly into the watermark fabric. This arXiv work (2503.12172) sidesteps database dependencies, verifying marks distortion-free amid forgeries. Robust watermarking schemes like SEAL thrive where blind embeds fail, as semantic ties anchor the signal to core visuals, resisting inpainting or style transfers that plague lesser methods.

By distilling image essence - objects, scenes, styles - into the mark, SEAL ensures that edits altering the semantics also corrupt detection, turning removal into self-sabotage. Pragmatically, this suits video extensions: frame semantics chain across clips, fortifying imperceptible watermarks against deepfake edits. The surveyed sources report superior survival after GAN-based edits. Critique: semantic extraction adds overhead, but for high-stakes media the trade-off secures trust.
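
One way to picture content-tied verification without a key database: hash a semantic embedding of the image into a short bit signature using a locality-sensitive hash, and at verification time recompute the signature from the image itself. The sketch below uses a SimHash-style hash (signs of random projections) over a stand-in embedding vector. The embedding, the function names, the bit length, and the seed are illustrative assumptions and should not be read as SEAL's published construction.

```python
import random


def simhash(embedding: list[float], n_bits: int, secret_seed: int) -> list[int]:
    """Sign of the embedding's projection onto n_bits seeded random hyperplanes."""
    rng = random.Random(secret_seed)
    bits = []
    for _ in range(n_bits):
        plane = [rng.gauss(0.0, 1.0) for _ in embedding]
        dot = sum(p * e for p, e in zip(plane, embedding))
        bits.append(1 if dot >= 0 else 0)
    return bits


def bit_agreement(a: list[int], b: list[int]) -> float:
    """Fraction of positions where two signatures match."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


# Stand-in embeddings; a real system would obtain these from a vision or caption model.
demo_rng = random.Random(0)
original = [demo_rng.gauss(0.0, 1.0) for _ in range(64)]
light_edit = [v + 0.05 * demo_rng.gauss(0.0, 1.0) for v in original]  # e.g. recompression
unrelated = [demo_rng.gauss(0.0, 1.0) for _ in range(64)]             # e.g. a forged scene

reference = simhash(original, 128, secret_seed=42)
same_content = bit_agreement(reference, simhash(light_edit, 128, secret_seed=42))
other_content = bit_agreement(reference, simhash(unrelated, 128, secret_seed=42))
```

Here `same_content` stays close to 1 while `other_content` hovers around one half, which is the property the section relies on: benign processing keeps the signature, while an edit that changes what the image depicts breaks it.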

Comparison of Robust Watermarking Techniques

| Method | Key Strength | Attacks Resisted (crop/compress/regenerate) | Detection Rate Boost |
|---|---|---|---|
| LBW | Embeds watermarks into token maps by biasing token selection in autoregressive models | Crop: ✗, Compress: Partial, Regenerate: ✓ | Significant boost against regeneration attacks |
| Tree-Ring | Embeds structured pattern in Fourier space of initial noise vector for diffusion models | Crop: ✓, Compress: ✓, Regenerate: Partial | High due to transformation invariance |
| SEAL | Semantic-aware embedding of image content info, distortion-free, no database required | Crop: ✓, Compress: ✓, Regenerate: ✓ (forgery) | Enhanced robust verification |
| Adversarial | Adversarial perturbations combined with visible watermarks to fool removal networks | Crop: ✓, Compress: ✓, Regenerate: ✓ | Maximized resistance with minimal distortion |
| RAVW | Grad-CAM guided reversible adversarial visible watermarking in optimal regions | Crop: Partial, Compress: Partial, Regenerate: ✓ (NN removal) | Strong resistance to neural removal attacks |

Adversarial and RAVW: Poisoning Removal Networks

Adversarial watermarking flips the offense, crafting perturbations that poison removal nets. ScienceDirect details (S221421262400053X) how region selection and pre-processing embed marks maximizing removal resistance, all while curbing visible flaws. Crops and compressions barely dent it; neural erasers choke on the adversarial bait. Opinion: This cat-and-mouse escalates effectively, but demands ongoing retraining against evolving removers - a pragmatic arms buildup.

Reversible Adversarial Visible Watermarking (RAVW) refines this via Grad-CAM, pinpointing embed zones for reversibility and neural resistance (S0165168425001136). Visible cues deter casual tampering; adversarial layers thwart pros. For synthetic videos, RAVW's reversibility shines in royalty workflows - verify, strip, monetize seamlessly. Creatively, it's watermarking with a revenge arc: tamper, and the mark screams louder.

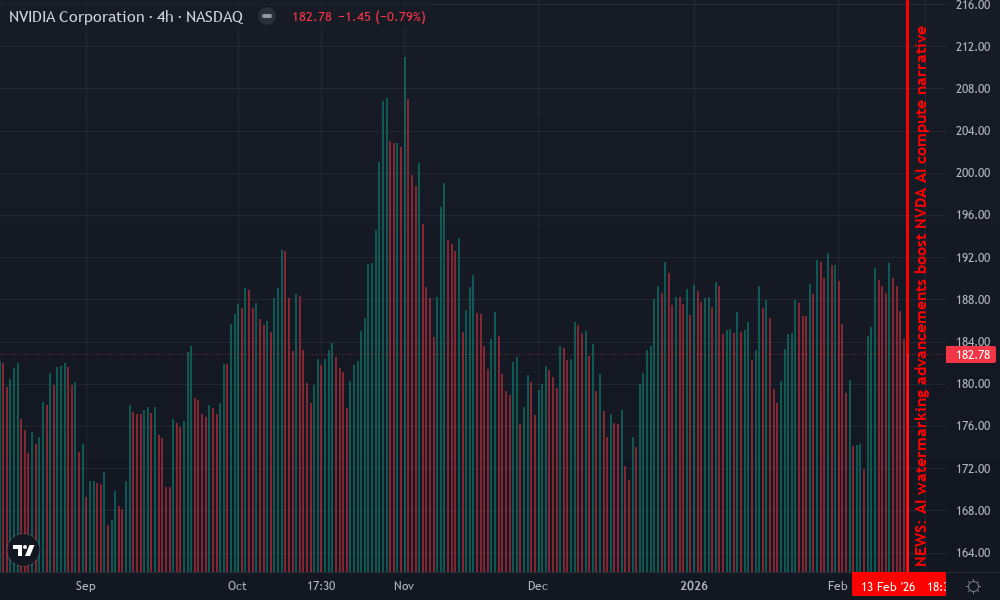

Layering these - LBW for generation, Tree-Ring for invariance, SEAL for semantics, adversarial perturbations to poison removers - builds defense in depth. According to tables in ACM and arXiv surveys, detection holds above 90% after chained attacks, well ahead of legacy signals. Yet real-world conditions test scalability; video pipelines lag behind images, per TrueFan AI's 2026 outlook for transform-domain embedding.

Pragmatically, integrate via platforms that fuse synthetic media watermarking with royalty rails for synthetic content. Embed at generation, track distribution, auto-enforce licenses - IMATAG's SaaS hints at this blueprint. NIH and PLOS underscore the urgency: as deepfakes are weaponized, robust detectors must pair with embedded marks for forensic verification. Hybrid futures beckon, blending stylometrics and blockchain (per MDPI) to give AI media a double helix of provenance and pay.

Forward thinkers prioritize these now. Watermark not as afterthought, but DNA in generative cores. In a deluge of synth, resilient marks preserve creator leverage, fueling ethical AI economies where authenticity cashes out.
