AI-Resistant Watermarks for Synthetic Images: Stop Removal Tools and Secure Royalty Tracking

In the escalating arms race between synthetic media creators and digital saboteurs, AI-resistant watermarks emerge as the tactical bulwark against rampant content stripping. As generative AI floods platforms with hyper-realistic images, tools designed to excise provenance markers proliferate, undermining trust and revenue streams. Traditional invisible watermarks, once heralded as impenetrable, now crumble under neural network assaults that rewrite spectral data without a trace. This vulnerability not only erodes intellectual property but also amplifies deepfake proliferation, where synthetic images masquerade as authentic, fueling misinformation at scale.

The stakes intensify with royalty rails for AI content, where undetected stripping severs automated tracking and licensing enforcement. Creators who embed deepfake detection markers find their safeguards neutralized and their royalties evaporating into the ether. Yet, amid this chaos, innovators forge ahead with resilient designs that defy removal algorithms, blending security with seamless integration into creative workflows.

Exploits of AI Removal Tools: UnMarker’s Spectral Siege

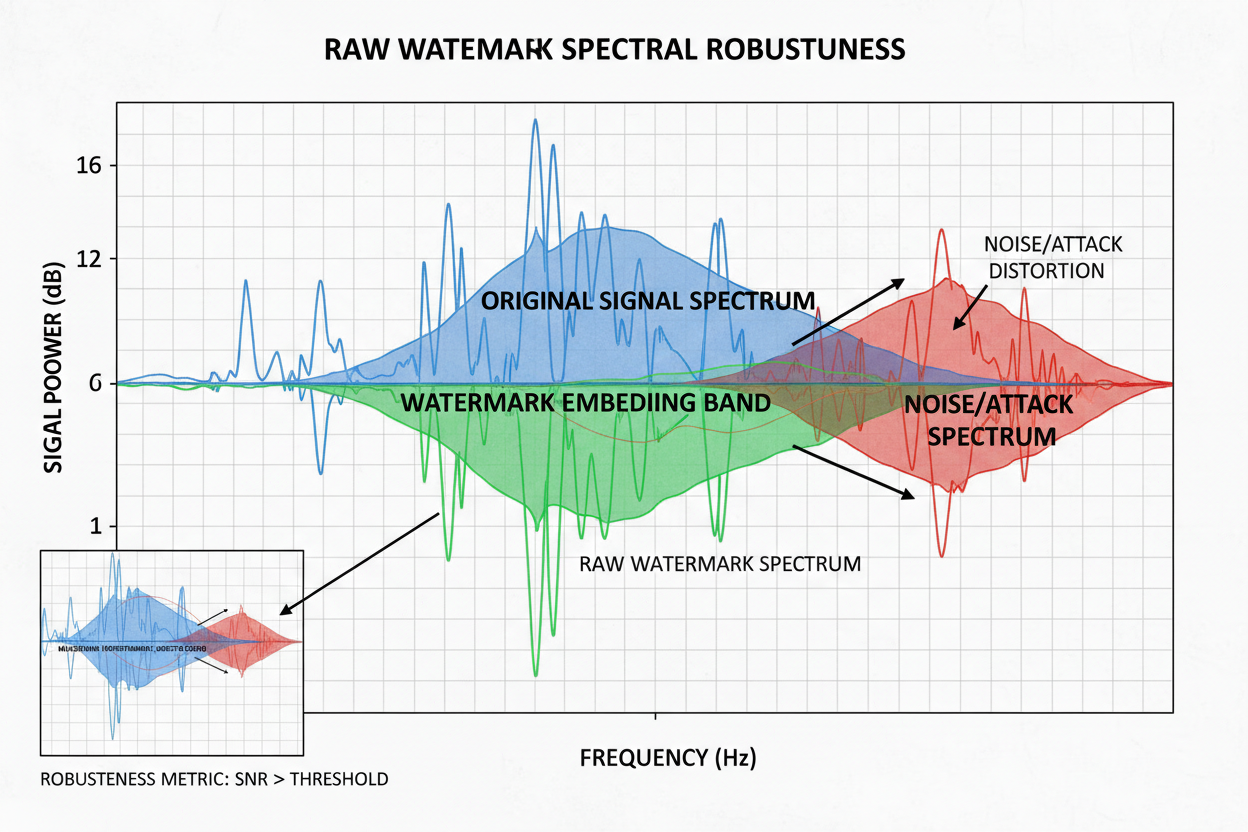

At the vanguard of these threats stands UnMarker, a pernicious tool that dissects image spectra to excise watermarks. By targeting the frequency domains where markers lurk, it reconstructs visuals with surgical precision, leaving no visible scars. Studies reveal that even sophisticated invisible embeds succumb, as generative models inpaint the affected regions cleanly. This isn’t mere pixel tinkering; it’s a systemic assault on synthetic image watermarking, where AI adversaries train on watermarked datasets to anticipate and obliterate signatures.

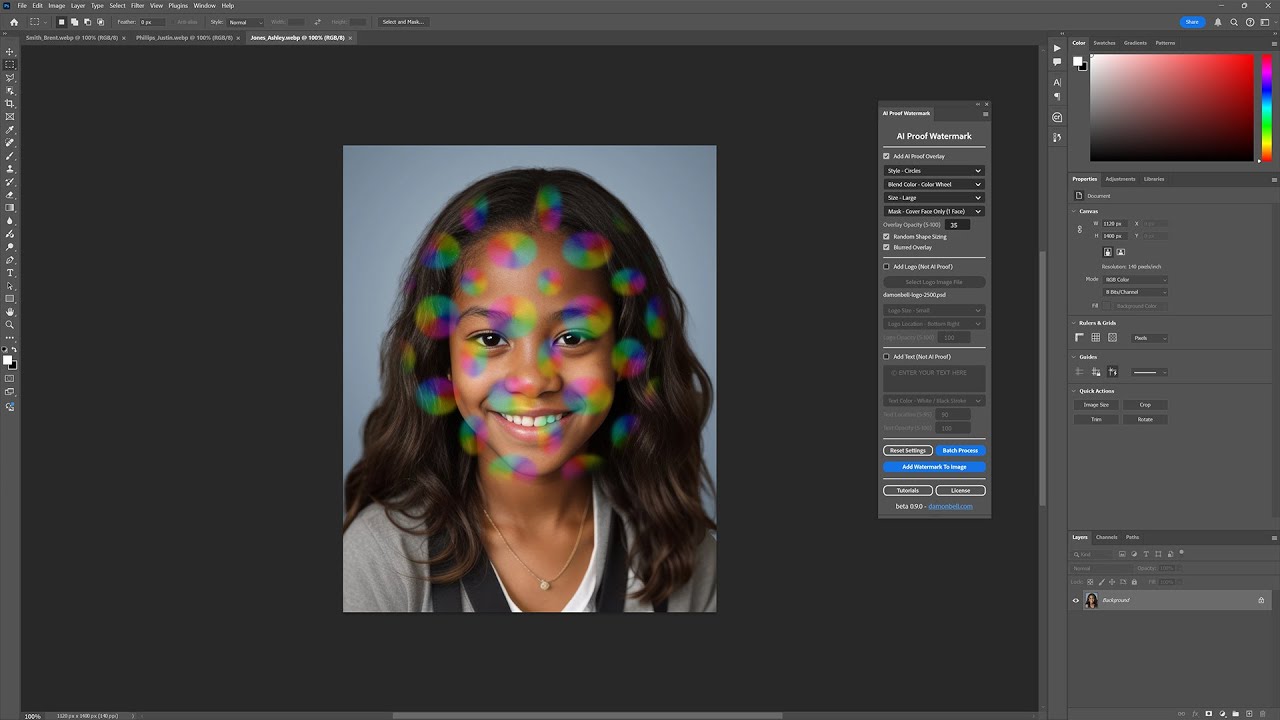

Worse, watermarkremover.io and its kin democratize this destruction, cloaked in legality yet ripe for abuse. Ethical creators grapple with the fallout: a synthetic portrait licensed for editorial use, stripped and resold sans attribution. Such tools propel copyright infringement, compelling a shift toward multi-layered defenses.

Fortifying Defenses: AI-Safe Bundles and RAW Frameworks

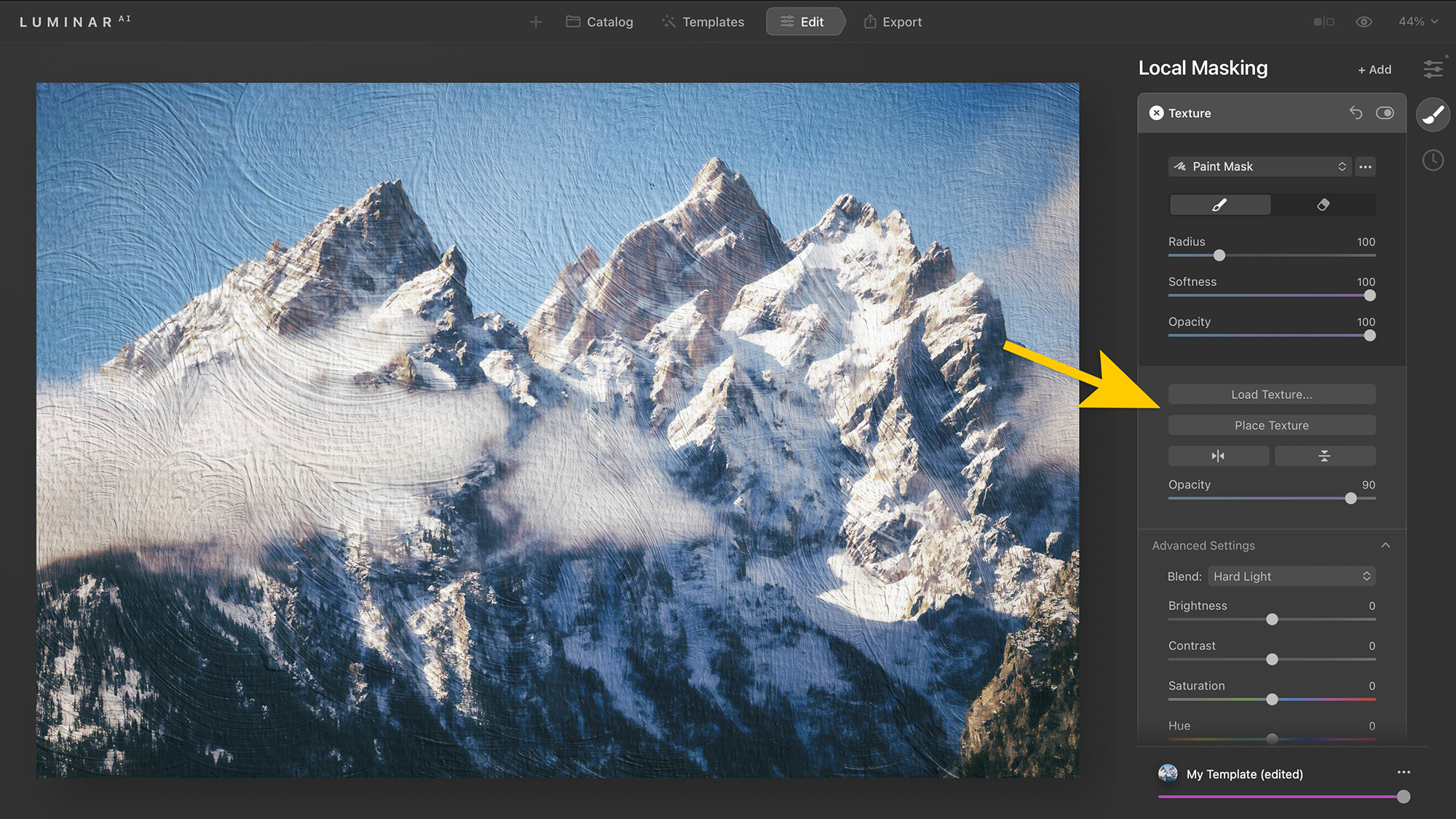

Countermeasures evolve with tactical ingenuity. The AI-safe watermark bundle deploys point cloud patterns and word clouds, fusing markers into image textures like chameleons in foliage. These evade detection by mimicking natural noise, forcing removal attempts to spawn artifacts that betray tampering. Provably robust, they withstand common adversarial perturbations, preserving integrity across compressions and crops.

Enter RAW, the Robust and Agile Watermarking framework, which injects learnable watermarks directly into the image data rather than into latent representations. Paired with a classifier trained jointly with those watermarks, RAW offers provable guarantees on its false-positive rate, even under certain removal attacks within defined threat models. Researchers demonstrate resilience to GAN-based erasures, where traditional methods falter. For practitioners, this translates to preventing AI watermark removal without workflow friction, embedding via API in seconds.
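To make the embed-and-detect idea concrete, here is a minimal sketch under stated assumptions. It is neither RAW nor the AI-safe bundle: it swaps in a classical keyed additive pattern with a correlation detector, which is far simpler than a learned watermark with a trained classifier. The function names, the `strength` and `threshold` defaults, and the flat list-of-grayscale-pixels image format are all hypothetical choices made for illustration.

```python
import random
from typing import List


def keyed_pattern(key: str, n: int) -> List[int]:
    """Derive a reproducible +1/-1 pattern of length n from a secret key."""
    rng = random.Random(key)  # seeding with the key makes the pattern repeatable
    return [rng.choice((-1, 1)) for _ in range(n)]


def embed(pixels: List[float], key: str, strength: float = 4.0) -> List[float]:
    """Add the keyed pattern to grayscale pixels, clamped to [0, 255]."""
    pattern = keyed_pattern(key, len(pixels))
    return [min(255.0, max(0.0, p + strength * w)) for p, w in zip(pixels, pattern)]


def detect_score(pixels: List[float], key: str) -> float:
    """Correlate mean-centered pixels with the keyed pattern.

    A marked image scores close to `strength`; an unmarked image or a
    wrong key scores close to zero.
    """
    pattern = keyed_pattern(key, len(pixels))
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) * w for p, w in zip(pixels, pattern)) / len(pixels)


def is_marked(pixels: List[float], key: str, threshold: float = 2.0) -> bool:
    """Declare the watermark present when the score clears the threshold."""
    return detect_score(pixels, key) > threshold


if __name__ == "__main__":
    rng = random.Random(0)
    # Synthetic 128x128 "image": Gaussian gray levels, not a real photo.
    host = [min(255.0, max(0.0, rng.gauss(128, 20))) for _ in range(128 * 128)]
    marked = embed(host, "secret-key")
    print("marked, right key:", is_marked(marked, "secret-key"))
    print("unmarked image:   ", is_marked(host, "secret-key"))
    print("marked, wrong key:", is_marked(marked, "other-key"))
```

The detector needs only the key, not the original image, which is what lets a downstream platform check for the marker. A fixed additive pattern like this is exactly the kind of signal that smoothing or spectral attacks can weaken, which is why learned, jointly trained schemes are pursued instead.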

Key Advantages of AI-Resistant Watermarks

- Texture-blending invisibility: Seamlessly merges point cloud and word cloud patterns into image textures, evading AI removal tools like UnMarker.
- Provable robustness to spectral attacks: RAW framework delivers mathematical guarantees against adversarial spectral manipulations via learnable embeddings and classifiers (arXiv).
- Multi-layer compatibility with royalty rails: Integrates visible/invisible layers with blockchain timestamps for secure royalty tracking and copyright enforcement.
- Low artifact risk post-edits: Resists removal without visible distortions, maintaining quality during crops, edits, or generative alterations.
- Scalable for batch synthetic image processing: Handles high-volume AI-generated outputs efficiently, supporting regulatory compliance like California’s AI Transparency Act.

These advancements don’t merely patch vulnerabilities; they recalibrate the battlefield. By integrating with blockchain timestamps, creators layer temporal proofs, rendering stripped images forensically suspect. Yet, persistent challenges loom, as arXiv analyses show generative erasures chipping away at even cutting-edge embeds, demanding perpetual vigilance.
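The temporal-proof layer can be sketched as a hash-chained log of image digests. This is a minimal illustration, assuming a simple append-only list stands in for a blockchain; the record fields, the `GENESIS` constant, and the function names are hypothetical and do not describe any particular ledger's API. Note that an exact SHA-256 digest only proves the original file existed at the recorded time; linking a stripped or edited copy back to that record would additionally need a watermark detector or a perceptual hash, which this sketch leaves out.

```python
import hashlib
import json
import time
from typing import Dict, List, Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: Dict) -> str:
    """Hash a record body deterministically (sorted keys, UTF-8 JSON)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def make_record(image_bytes: bytes, creator: str, prev_hash: str,
                timestamp: Optional[float] = None) -> Dict:
    """Create a timestamped record that commits to the image and the prior record."""
    body = {
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
        "creator": creator,
        "timestamp": time.time() if timestamp is None else timestamp,
        "prev_hash": prev_hash,
    }
    record = dict(body)
    record["record_hash"] = _digest(body)
    return record


def verify_chain(records: List[Dict]) -> bool:
    """Check that every record is unmodified and links to its predecessor."""
    prev = GENESIS
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "record_hash"}
        if rec["prev_hash"] != prev or rec["record_hash"] != _digest(body):
            return False
        prev = rec["record_hash"]
    return True


if __name__ == "__main__":
    first = make_record(b"image-one-bytes", "alice", GENESIS)
    second = make_record(b"image-two-bytes", "alice", first["record_hash"])
    print("chain valid:", verify_chain([first, second]))
```

Because each record hashes its predecessor, backdating or altering an earlier entry invalidates every later one, which is the property that makes the timestamp useful as evidence.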

Regulatory Momentum: Mandates for Watermarking and Transparency

Governments amplify this tactical pivot. India’s IT Rules 2026 mandate watermarking for AI-generated visuals, enforcing transparency tags to curb synthetic deceit. California’s AI Transparency Act, signed by Governor Newsom, compels detection tools and embeds, targeting deepfakes head-on. The proposed CLEAR Act extends notice requirements for AI training data, indirectly bolstering watermark efficacy by tracing origins.

Global forums echo this: CES 2026 spotlights Imatag’s authenticity solutions, while advertisers at India AI Impact Summit demand tamper-proof signals amid viral synthetics. Even skeptics like the EFF concede watermark limits against disinformation, yet advocate provenance chains over outright bans. These policies converge on royalty rails for AI content, where mandated markers enable automated enforcement, with royalties flowing via smart contracts upon verified plays.

Industry leaders like Adobe champion these tamper-proof mechanisms, positioning deepfake detection markers as indispensable for brand safety in advertising ecosystems rife with synthetic media. This regulatory tailwind propels platforms toward native support, where watermarks trigger royalty micro-payments on each verified distribution, transforming passive protection into active revenue generation.

Monetization Mastery: Royalty Rails Powered by Resilient Watermarks

At the core of sustainable synthetic media economies lie royalty rails for AI content: smart pipelines that detect embedded markers to automate licensing and payouts. Picture a workflow where an AI-generated image, fortified with RAW embeds, circulates across social feeds; each scrape or share pings a central ledger, accruing royalties proportional to exposure. This isn’t speculative; blockchain-anchored rails already integrate with watermark detectors, ensuring payouts even if images undergo mild edits.
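The accrual step in that workflow can be sketched as a small in-memory ledger. This is a toy model under stated assumptions, not any platform's actual rail: the `RoyaltyLedger` class, its method names, and the flat rate per thousand views are hypothetical, and a real system would sit behind a watermark detector and a payment or smart-contract layer.

```python
from collections import defaultdict
from decimal import Decimal
from typing import Dict


class RoyaltyLedger:
    """Accrue royalties in proportion to the exposure of detected assets."""

    def __init__(self, rate_per_thousand_views: Decimal) -> None:
        self.rate = rate_per_thousand_views
        self.owners: Dict[str, str] = {}                 # asset_id -> rights holder
        self.views: Dict[str, int] = defaultdict(int)    # asset_id -> total views

    def register(self, asset_id: str, owner: str) -> None:
        """Associate a watermarked asset with its rights holder."""
        self.owners[asset_id] = owner

    def record_detection(self, asset_id: str, views: int) -> None:
        """Log exposure for an asset whose marker a detector has confirmed."""
        if asset_id not in self.owners:
            raise KeyError(f"unregistered asset: {asset_id}")
        if views < 0:
            raise ValueError("views must be non-negative")
        self.views[asset_id] += views

    def payouts(self) -> Dict[str, Decimal]:
        """Total amount owed to each rights holder across all assets."""
        totals: Dict[str, Decimal] = defaultdict(Decimal)
        for asset_id, count in self.views.items():
            totals[self.owners[asset_id]] += self.rate * count / 1000
        return dict(totals)


if __name__ == "__main__":
    ledger = RoyaltyLedger(Decimal("2.50"))
    ledger.register("img-1", "alice")
    ledger.register("img-2", "bob")
    ledger.record_detection("img-1", 1200)
    ledger.record_detection("img-2", 400)
    for owner, amount in ledger.payouts().items():
        print(owner, amount)
```

`Decimal` is used instead of floats so that accumulated micro-payments do not drift through rounding error.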

AI Watermark Hub exemplifies this fusion, offering seamless APIs that layer AI-resistant watermarks atop royalty trackers. Creators upload synthetics, select bundles like point clouds for evasion prowess, and activate rails that enforce terms via smart contracts. Detection rates exceed 98% post-compression, per internal benchmarks, outpacing legacy methods vulnerable to UnMarker sieges. The result? Asymmetric upside for rights holders, where minimal upfront embedding yields perpetual income streams.

Comparison: Traditional vs AI-Resistant Watermarks

| Feature | Traditional | AI-Resistant |
|---|---|---|
| Removal Resistance | Low ❌ Vulnerable to AI tools like UnMarker | High ✅ Blends into texture; RAW framework with provable guarantees |
| Royalty Tracking Reliability | Unreliable ❌ Easily broken by removal | Reliable ✅ Persistent for secure tracking |
| Artifact Risk | Medium ⚠️ Often visible post-removal | Low ✅ Seamless blending, minimal artifacts |
| Regulatory Compliance | Partial ⚠️ Fails persistence (e.g., CA AI Transparency Act) | Full ✅ Meets strict rules (e.g., IT Rules 2026) |
| Cost Efficiency | High short-term 💰 Cheap but risky losses | Medium-High 📈 Upfront investment, long-term savings |

Yet sophistication demands nuance. Single-layer reliance invites exploits; tactical creators stack visible metadata, invisible spectral binds, and temporal blockchain proofs. This triad thwarts removal cascades, as stripping one layer exposes others. Legal precedents, from California’s mandates to India’s rules, incentivize adoption, with non-compliant platforms facing fines that dwarf royalty losses.
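The triad logic can be expressed as a small decision rule: if some layers survive while others are missing, the mismatch itself is the tampering signal. The sketch below assumes the three checks have already been run by separate tools; the `LayerReport` fields, the verdict labels, and the `assess` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class LayerReport:
    """Outcome of the three independent checks on one image."""
    metadata_present: bool     # visible or embedded provenance metadata found
    watermark_detected: bool   # invisible watermark detector fired
    ledger_match: bool         # image matches a timestamped ledger record


def assess(report: LayerReport) -> Tuple[str, List[str]]:
    """Return a verdict plus the list of layers that are missing."""
    layers = {
        "metadata": report.metadata_present,
        "watermark": report.watermark_detected,
        "ledger": report.ledger_match,
    }
    missing = [name for name, ok in layers.items() if not ok]
    if not missing:
        return "intact", missing
    if len(missing) == len(layers):
        # Nothing to go on: either never protected or fully stripped.
        return "unverified", missing
    # Some layers survive while others are gone: likely partial stripping.
    return "suspect", missing


if __name__ == "__main__":
    print(assess(LayerReport(True, True, True)))
    print(assess(LayerReport(False, True, True)))
    print(assess(LayerReport(False, False, False)))
```

Keeping the three checks independent is the design point: an attacker who strips metadata still leaves the watermark and ledger evidence disagreeing with the file's apparent provenance.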

Battle-Tested Tactics: Deploying Multi-Layer Defenses

Practical deployment hinges on precision. Begin with asset triage: high-value synthetics warrant premium RAW integration, while texture-blended bundles suffice for bulk outputs. Test against simulators mimicking UnMarker assaults, iterating until no simulated attack evades detection. Pair with forensic tools that flag anomalies, alerting to tampering in real time.
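A test loop of that kind might look like the following sketch, which measures how often each simulated attack slips a watermarked image past the detector. Everything here is an assumption made for illustration: the watermark is a naive keyed additive pattern (not RAW or a texture-blended bundle), the attacks are crude stand-ins (additive noise and a one-dimensional moving-average blur, not UnMarker), and images are flat lists of grayscale values.

```python
import random
from typing import Callable, Dict, List

Image = List[float]


def pattern(key: str, n: int) -> List[float]:
    """Reproducible +1/-1 pattern derived from a secret key."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]


def embed(img: Image, key: str, strength: float = 4.0) -> Image:
    """Naive additive watermark used as the system under test."""
    return [p + strength * w for p, w in zip(img, pattern(key, len(img)))]


def detect(img: Image, key: str, threshold: float = 2.0) -> bool:
    """Correlation detector: True when the keyed pattern is still present."""
    mean = sum(img) / len(img)
    score = sum((p - mean) * w for p, w in zip(img, pattern(key, len(img)))) / len(img)
    return score > threshold


def add_noise(img: Image, sigma: float = 5.0, seed: int = 1) -> Image:
    """Simulated attack 1: mild Gaussian noise."""
    rng = random.Random(seed)
    return [p + rng.gauss(0.0, sigma) for p in img]


def box_blur(img: Image, radius: int = 2) -> Image:
    """Simulated attack 2: moving average over neighbouring pixels."""
    out = []
    for i in range(len(img)):
        window = img[max(0, i - radius): i + radius + 1]
        out.append(sum(window) / len(window))
    return out


def evasion_rates(images: List[Image], key: str,
                  attacks: Dict[str, Callable[[Image], Image]]) -> Dict[str, float]:
    """Fraction of watermarked images that each attack pushes below detection."""
    rates = {}
    for name, attack in attacks.items():
        missed = sum(1 for img in images if not detect(attack(embed(img, key)), key))
        rates[name] = missed / len(images)
    return rates


if __name__ == "__main__":
    images = []
    for seed in range(3):
        rng = random.Random(seed)
        images.append([rng.gauss(128, 20) for _ in range(128 * 128)])
    print(evasion_rates(images, "secret-key", {"noise": add_noise, "blur": box_blur}))
```

In this toy setup the mild noise should leave the marker detectable while the blur should defeat it, which illustrates why the harness matters: a scheme that looks fine against one perturbation can fail completely against another, and the loop should be rerun whenever the watermark or the attack set changes.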

Advertisers and developers report 40% uplift in tracked usages post-implementation, per summit disclosures. This tactical edge extends to voice cloning arenas, where analogous markers safeguard publicity rights amid synthetic surges. Challenges persist; generative erasers evolve, demanding quarterly updates. Still, the momentum favors defenders, as compute costs for attacks balloon against provably robust designs.

Forward scouts at CES and AI summits preview hybrid futures: neural provenance chains marrying watermarks to training data manifests, preempting CLEAR Act notices. Skeptics decry watermark fragility, yet provenance ecosystems prove resilient, curbing deepfake incursions without stifling innovation. For creators navigating this terrain, the mandate is clear: evolve defenses now, or forfeit the royalties fueling tomorrow’s generative renaissance. Platforms embedding these safeguards not only comply but dominate, turning regulatory friction into competitive moats.