Embedding Watermarks in Synthetic Audio to Secure Streaming Royalties Against Deepfake Fraud

In the electrifying world of streaming music, where billions of plays translate to real royalties, a silent thief lurks: deepfake audio. AI-generated tracks mimicking real artists are flooding platforms, siphoning off payments meant for genuine creators. Picture this: bots inflating streams on Spotify playlists, fake hits climbing charts, and artists watching their earnings evaporate. With deepfake fraud exploding tenfold since 2022, the music industry faces a brutal reality check. Enter synthetic audio watermarking – the bold shield against this chaos.

Streaming giants like Spotify and Apple Music have culled millions of suspicious tracks, but the fraudsters adapt faster than filters can catch up. Deezer now labels AI tunes for transparency, yet labels alone don’t stop the royalty bleed. Platforms detect patterns – unnatural stream spikes from bot farms – yet sophisticated AI slips through, mimicking styles so convincingly it racks up fraudulent plays. According to recent reports, AI-powered scams manipulate charts, with fake artists generating royalties that rightfully belong elsewhere. This isn’t just theft; it’s eroding trust in the entire ecosystem.
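The "unnatural stream spike" pattern can be illustrated with a simple robust outlier test. This is only a minimal sketch, not a description of how any platform actually screens plays: the function name `flag_stream_spikes`, the 3.5 cutoff, and the per-day input format are all assumptions made for illustration.

```python
from statistics import median


def flag_stream_spikes(daily_streams, threshold=3.5):
    """Return indices of days whose stream count is an upward outlier.

    Uses the modified z-score (median and median absolute deviation),
    which one huge spike cannot distort the way it distorts a mean.
    """
    med = median(daily_streams)
    mad = median(abs(x - med) for x in daily_streams)
    if mad == 0:
        # At least half the days equal the median, so there is no spread
        # to measure against; this sketch declines to flag rather than guess.
        return []
    return [
        i for i, x in enumerate(daily_streams)
        if 0.6745 * (x - med) / mad > threshold
    ]


# Hypothetical week of daily plays for one track; day 5 looks like a bot burst.
week = [1020, 980, 1005, 990, 1010, 25000, 1000]
print(flag_stream_spikes(week))
```

A test like this only says "something odd happened on that day". It cannot say who is behind the streams or whether the track itself is synthetic, which is the gap provenance markers are meant to close.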

Deepfake Audio’s Assault on Artist Royalties

The numbers hit hard. In 2023, Spotify ramped up its war on streaming fraud, targeting AI-generated spam that poisons recommendation algorithms. Yet, as WIPO notes, bad actors upload synthetic songs to DSPs and unleash bots for endless loops, harvesting payouts. Music Business Worldwide reveals AI tracks flagged as fraud, streams axed from royalties – but not before damage mounts. Forbes underscores the pressure on platforms: remove suspicious content or face artist backlash. HUMAN Security exposes how these operations create ‘hits’ overnight, diverting funds from true talent.

AI-generated tracks have been detected as fraudulent, with those streams filtered out of royalty payments.

Artists are the ones being mimicked. A track in your style lands on fraudulent playlists, fake streams surge, and your royalties plummet. Pindrop highlights deepfakes’ darker side: beyond music, they’re tools for fraud and manipulation. By 2026, with AI music checkers like those from Beats To Rap On proving provenance, the battle intensifies. But detection lags; watermarking leads.

Why Current Defenses Crumble Under AI Fraud Pressure

Spotify’s new spam filter blocks some spammers, yet AI evolves and bots get sneakier. Music Ally praises the 2023 crackdowns, but artist.tools finds that detectors spot mimics too late – after royalties vanish. Platforms remove tracks post-flood, but the money’s gone. Deezer’s labeling? A step, but labels are stripped in re-uploads. Watermarked.ai disrupts unauthorized training and detects deepfakes – yet adoption stalls amid technical complexity.

Consider the chain: AI trains on your audio sans permission, spits out deepfakes, floods streams. No provenance, no proof. Royalty rails falter without verification. Fraud losses? Massive, with deepfakes fueling a tenfold scam surge. Platforms play whack-a-mole; creators need proactive armor. That’s where AI music royalty protection via imperceptible markers shines, embedding proof of origin directly into the signal.

Unlocking Synthetic Audio Watermarking’s Edge

Imagine invisible tattoos in every beat, verifiable yet inaudible. Synthetic audio watermarking embeds markers designed to survive compression, edits, even AI reprocessing. Unlike metadata, which is easily stripped, these persist in the waveform itself. Tools like AI Watermark Hub pioneer this for audio, linking watermarks to royalty rails for synthetic media so tracking is automated. Detect a deepfake? Trace origin, enforce licenses, claw back royalties.
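To make "markers that persist in the waveform" concrete, here is a toy spread-spectrum watermark, one classic textbook technique. It is a sketch under stated assumptions and not the method used by any product named in this article: the function names, the `key` seed, and the `strength` value are hypothetical, and a real system would add psychoacoustic shaping, synchronization, and robustness to compression that this example omits.

```python
import math
import random


def chip_sequence(key, n):
    """Pseudo-random +1/-1 sequence that only the key holder can regenerate."""
    rng = random.Random(key)
    return [1.0 if rng.random() < 0.5 else -1.0 for _ in range(n)]


def embed(samples, key, strength=0.01):
    """Add a low-amplitude keyed noise pattern to every sample."""
    chips = chip_sequence(key, len(samples))
    return [s + strength * c for s, c in zip(samples, chips)]


def correlation_score(samples, key, strength=0.01):
    """Roughly 1.0 when the keyed pattern is present, roughly 0.0 otherwise."""
    chips = chip_sequence(key, len(samples))
    corr = sum(s * c for s, c in zip(samples, chips)) / len(samples)
    return corr / strength


def detect(samples, key, strength=0.01, threshold=0.5):
    """Decide whether the clip carries the watermark for this key."""
    return correlation_score(samples, key, strength) > threshold


if __name__ == "__main__":
    # Four seconds of a 440 Hz tone at 44.1 kHz stands in for a real track.
    host = [0.5 * math.sin(2 * math.pi * 440 * t / 44100)
            for t in range(44100 * 4)]
    marked = embed(host, key=1234)
    print("marked clip, right key:", detect(marked, key=1234))
    print("clean clip, right key: ", detect(host, key=1234))
    print("marked clip, wrong key:", detect(marked, key=9999))
```

The design choice worth noticing is that detection needs only the key, not the original recording: the host audio averages out against the random pattern while the embedded pattern adds up. Longer clips make that averaging more reliable, which is why the demo uses several seconds of audio.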

Pindrop’s insights on speech signals translate to music: robust embedding withstands noise. For creators, it’s game-changing – upload watermarked tracks, platforms scan on ingest, fraud halts at the gate. DSPs gain trust, and artists secure their royalties against deepfake audio. As 2026 unfolds, with provenance tech maturing, this isn’t optional; it’s survival.

Watermarked.ai’s approach exemplifies this power, embedding markers that sabotage rogue AI training while enabling deepfake takedowns. Creators upload originals, get stamped files back – ready for streaming. Platforms integrate scanners, verifying authenticity on upload. Fraud? Instant red flag, royalties rerouted. This kind of watermark-based streaming fraud prevention isn’t theory; it’s deployed, slashing scam streams before payout.

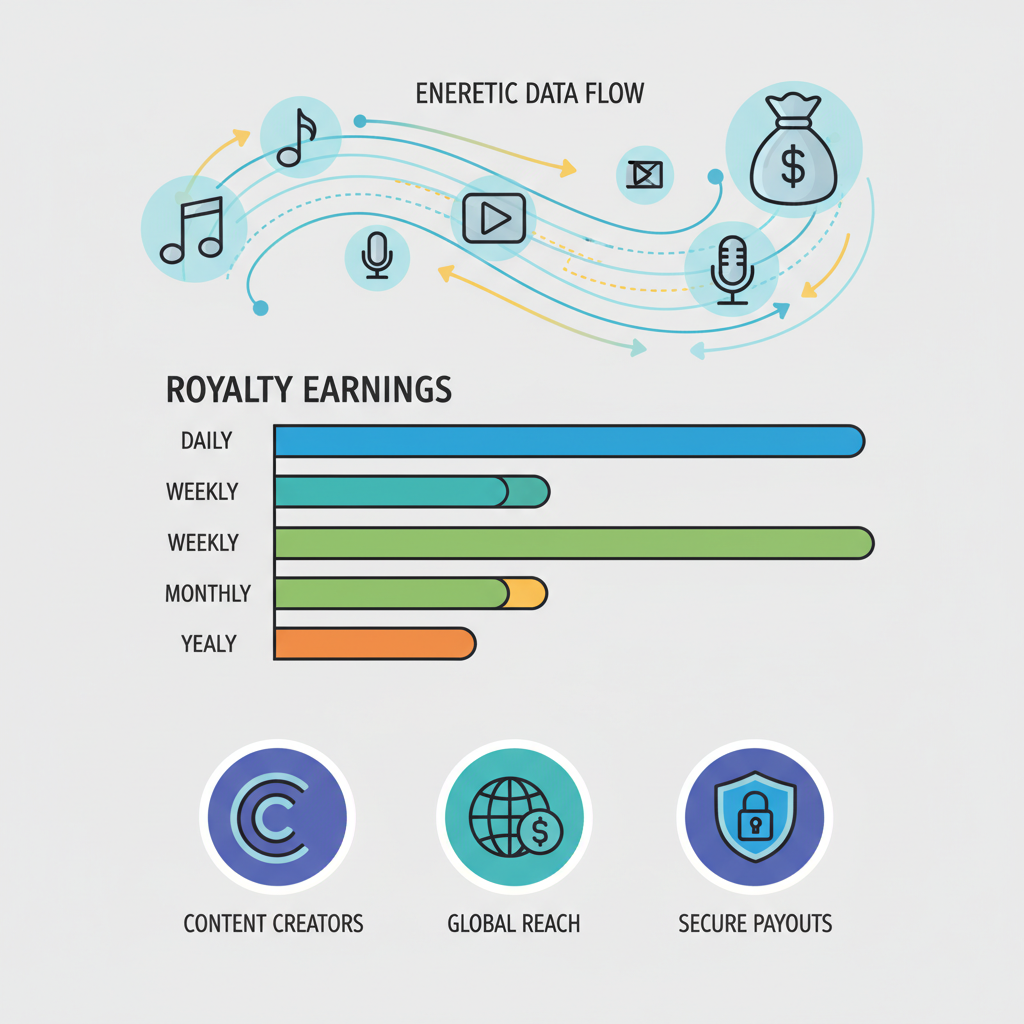

Royalty Rails Meet Watermarking: The Automation Revolution

Picture royalties flowing like clockwork. Royalty rails for synthetic media pair with watermarks for seamless enforcement. Distribute watermarked audio? Smart contracts track plays across DSPs and trigger micropayments only for verified originals. Deepfake detected? Rails halt flows and refund legit creators. No more manual audits; blockchain-backed rails ensure every stream counts toward rightful earnings.
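The "pay only for verified originals" rule can be sketched in a few lines. This is plain Python standing in for whatever contract or ledger a real rail would use, and everything in it is hypothetical: the `PlayEvent` fields, the `settle` function, and the per-stream rate are illustrative assumptions, not any platform's actual payout logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlayEvent:
    track_id: str
    rights_holder: str
    watermark_verified: bool  # result of an upstream watermark check


def settle(plays, rate_micros):
    """Credit verified plays and hold everything else for review.

    Amounts are integer micro-units of currency so repeated
    additions never accumulate floating-point error.
    """
    payouts = {}
    held = []
    for play in plays:
        if play.watermark_verified:
            payouts[play.rights_holder] = (
                payouts.get(play.rights_holder, 0) + rate_micros
            )
        else:
            held.append(play)  # no payment is released for unverified audio
    return payouts, held


plays = [
    PlayEvent("track-1", "artist_a", True),
    PlayEvent("track-1", "artist_a", True),
    PlayEvent("track-9", "uploader_x", False),
]
payouts, held = settle(plays, rate_micros=3000)
print(payouts)
print([p.track_id for p in held])
```

Holding unverified plays instead of discarding them keeps a record for review, so a wrongly flagged track can still be paid out later.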

Deezer’s AI labeling pairs nicely here, but watermarking elevates it – provenance etched in audio DNA. As fraud surges tenfold, platforms like Spotify evolve filters, yet watermark rails provide the kill switch. Artists reclaim control: upload protected, monetize boldly. Developers at AI Watermark Hub streamline this, optimizing for generative AI workflows. Bold move? Absolutely – in high-stakes streaming, hesitation costs fortunes.

Industry heavyweights nod approval. Music Ally details Spotify’s fraud crackdowns, but watermarking preempts the mess. WIPO warns of bot armies looping synthetic tracks for payouts; markers wired into the rails expose them instantly. Pindrop’s speech watermarking lessons apply: robust signals beat superficial checks. By fusing detection with automation, AI music royalty protection becomes ironclad.

Challenges persist – embedding must dodge compression artifacts, survive format shifts. Yet 2026 tech delivers: multi-layer markers, AI-resilient. Beats To Rap On’s provenance tools flag fraud pre-royalty; scale that with rails, and ecosystems thrive. Creators, no longer victims, dictate terms. Platforms gain credibility, users trust recommendations. The fraud era ends not with louder alarms, but silent, unbreakable shields.

Real-World Wins and Creator Testimonials

Early adopters rave. Independent producers using Watermarked.ai report zero deepfake intrusions post-watermarking, with royalties intact amid playlist floods. In one artist.tools case, a mimic track was traced back to its source through the watermark, and royalties were reclaimed via the rails. The bot operations HUMAN Security exposes meet their match – verified audio dominates charts legitimately.

Forbes nails it: detection matters post-fraud waves, but prevention wins wars. Spotify’s spam filters evolve, yet watermark rails automate justice. As AI music detectors mature, watermarking leads the charge, ensuring royalties stay human-earned even as deepfake audio spreads.

Forward thinkers integrate now. AI Watermark Hub equips creators with watermark embedders, rail connectors, and detectors. Upload, protect, profit. The music biz, ripe for disruption, rewards those wielding a technical edge. Deepfakes rage? Watermarks conquer. Secure your streams and claim what you’ve earned.