Robust Imperceptible Watermarks for Synthetic Images Surviving AI Editing and Cropping

In the flood of synthetic images pouring from generative AI models, distinguishing real from fabricated has become a high-stakes game. Imperceptible AI watermarks promise a quiet guardian, embedding proof of origin without marring visual appeal. Yet, as creators edit, crop, and tweak these images, many watermarks fade into oblivion. Robust solutions that endure such manipulations are not just desirable; they are essential for trust in digital media ecosystems.

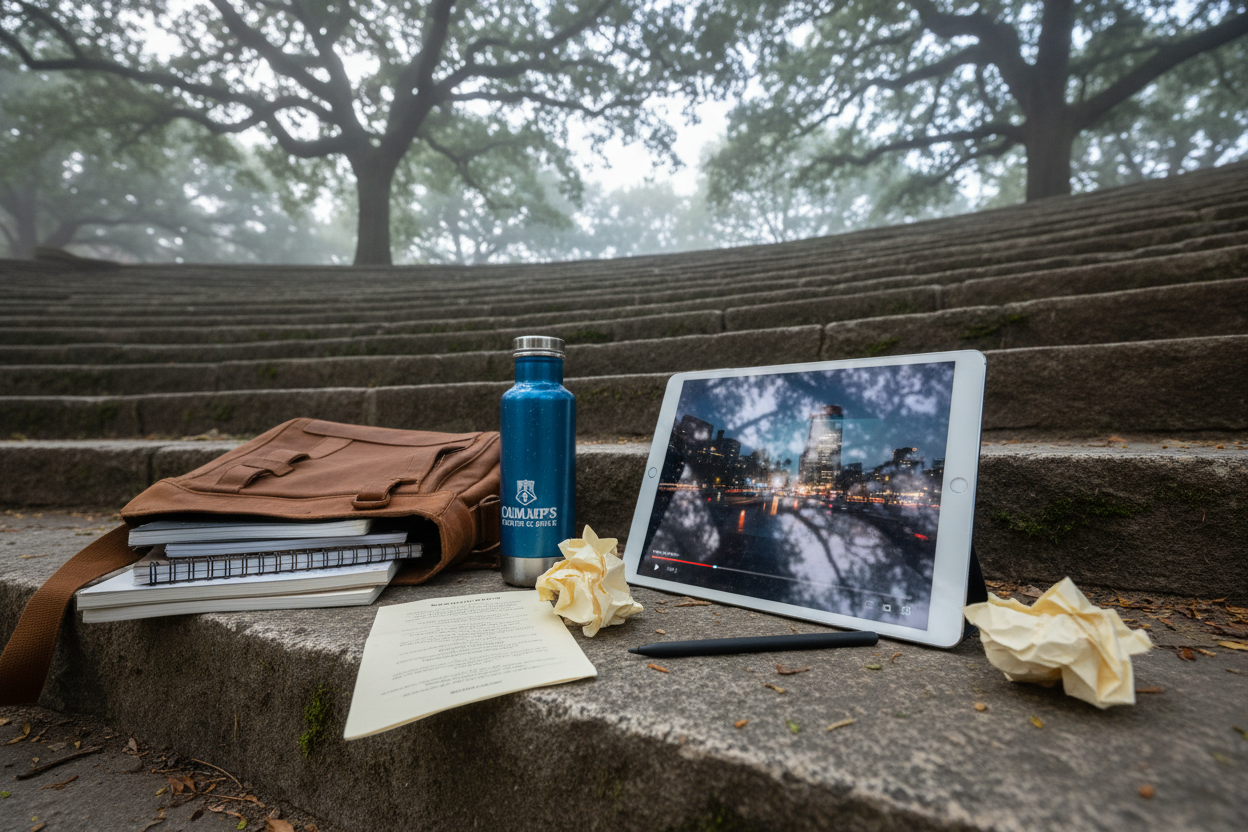

Consider the lifecycle of an AI-generated artwork. A designer crafts a landscape with a diffusion model, watermarks it for provenance, then shares it online. Viewers crop it for social posts, apply filters, or run it through another AI editor for style transfer. If the watermark does not survive those steps, the chain of authenticity snaps. Recent surveys underscore this fragility, noting that traditional methods crumble under compression, resizing, or adversarial tweaks.

Why Standard Watermarks Fail Against Modern Edits

Passive forensics, which scans images without any prior embedding, once sufficed for deepfake detection. As forgeries grow more sophisticated, it falls short. Watermarks must now withstand diffusion-based inpainting, where AI seamlessly alters regions, and aggressive cropping that slices away edge-embedded signals. A PLOS study highlights how evolving forgery techniques demand robust watermarking of generative media, a proactive signal that passive methods cannot provide.

Cropping poses a particular menace. Many schemes tuck markers into borders or low-impact zones, easy prey for a quick trim. Compression algorithms further distort these signals, dropping detection rates below usable thresholds. Industry tests reveal that even celebrated tools falter here, with confidence scores plummeting after routine social media processing.

Key Watermark Robustness Challenges

- Cropping removes edge-based signals, rendering many watermarks undetectable even in advanced methods like InvisMark.
- AI editing via diffusion erases embedded patterns, as noted in JIGMARK research addressing this vulnerability.
- Compression distorts frequency components, reducing detection accuracy in tools like Google’s SynthID.
- Resizing blurs or dilutes markers, with heavy resizing lowering confidence scores per robustness tests.
- Color adjustments shift hues and spectral properties, challenging imperceptibility in frameworks like RAW.

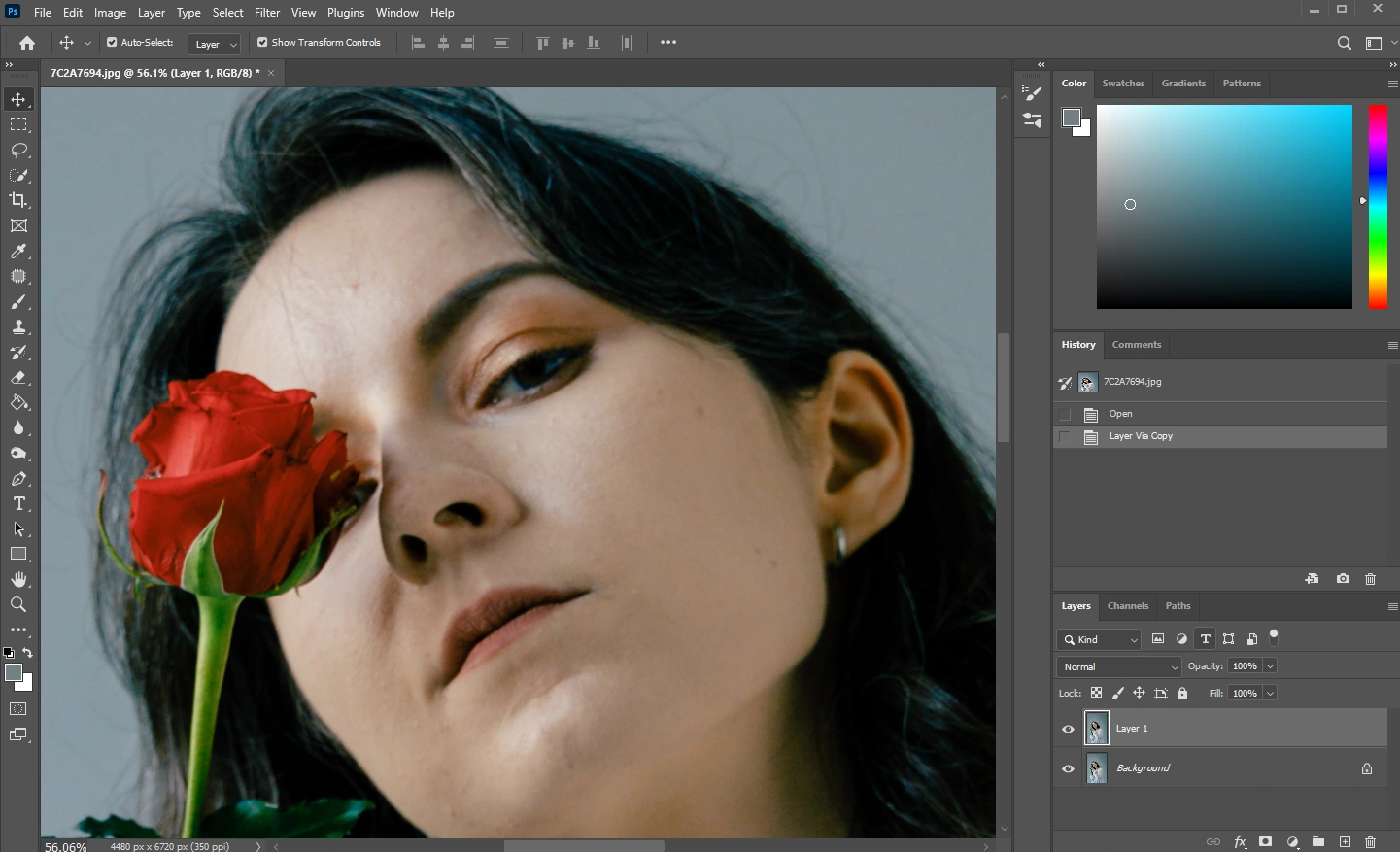

This vulnerability invites misuse. Stolen AI art repackaged as original undermines creators, while deepfakes evade scrutiny. Preventing watermark loss from cropping requires markers distributed across the whole image canvas rather than concentrated at its edges.
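The point about distribution can be made concrete with a toy geometry check. The sketch below is a minimal illustration, not any named framework's method: the function names (`border_cells`, `tiled_cells`, `surviving_fraction`), the 64x64 canvas, and the 8-pixel trim are all assumptions chosen for the example. It only counts how many marker positions remain inside the frame after a uniform crop.

```python
def border_cells(width, height, margin):
    """Positions a hypothetical border-only scheme would use for its signal."""
    return {
        (x, y)
        for y in range(height)
        for x in range(width)
        if x < margin or y < margin or x >= width - margin or y >= height - margin
    }


def tiled_cells(width, height, tile):
    """Positions for a hypothetical scheme that repeats one marker per tile."""
    return {
        (x, y)
        for y in range(tile // 2, height, tile)
        for x in range(tile // 2, width, tile)
    }


def surviving_fraction(cells, width, height, crop):
    """Fraction of marker positions left after trimming `crop` pixels per side."""
    kept = [
        (x, y)
        for (x, y) in cells
        if crop <= x < width - crop and crop <= y < height - crop
    ]
    return len(kept) / len(cells)


if __name__ == "__main__":
    w, h = 64, 64
    print(surviving_fraction(border_cells(w, h, margin=4), w, h, crop=8))  # 0.0
    print(surviving_fraction(tiled_cells(w, h, tile=8), w, h, crop=8))     # 0.5625
```

Counting positions ignores resynchronization: a real detector must also locate the surviving markers inside the cropped frame, which this sketch does not attempt.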

Emerging Frameworks Pushing Imperceptibility and Durability

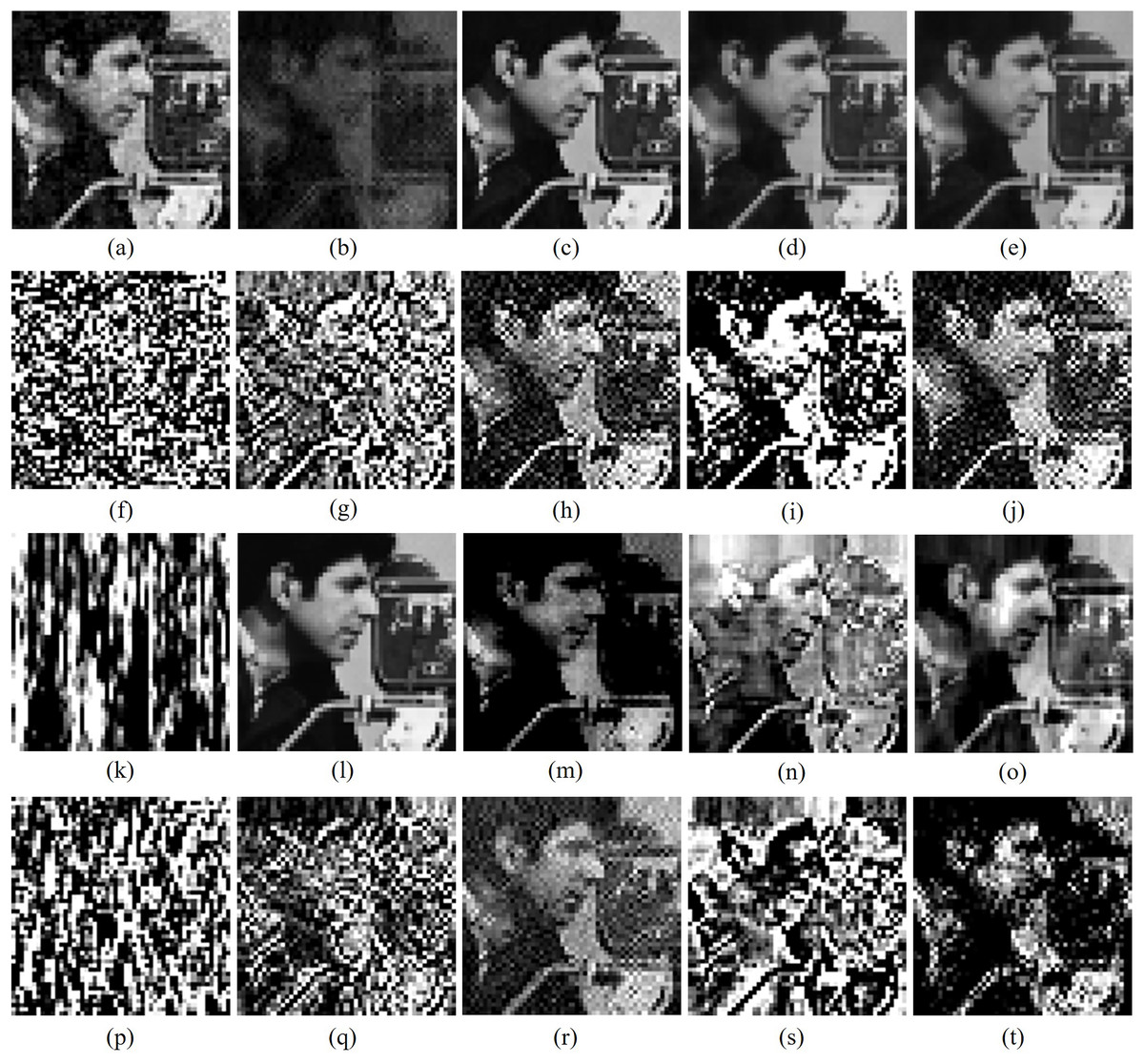

Researchers are countering with ingenuity. The RAW framework embeds learnable watermarks directly into the image data and trains a detector jointly with the embedder. It offers provable guarantees against removal attacks, improving detection under adversarial pressure. InvisMark targets high-resolution outputs, using neural networks to plant markers subtle enough to evade human notice while retaining over 97% bit accuracy after manipulation.

SimuFreeMark targets low-frequency components, which tend to stay stable under semantic edits. Because that stability is inherent, training needs no noise-simulation layer, which streamlines deployment. JIGMARK confronts diffusion editing head-on via contrastive learning on pairs of edited and unedited images, improving resilience without backpropagating through the editor itself.
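To see why low-frequency content is a stable carrier, consider a toy one-dimensional case. The sketch below is not SimuFreeMark's algorithm; it is a minimal illustration that hides one bit in a block's mean (its lowest-frequency component) using quantization index modulation. The function names, the step size of 8, and the sample values are assumptions, and clipping to a valid pixel range is ignored.

```python
def quantize_to_lattice(value, bit, step):
    """Snap `value` to the nearest lattice point that encodes `bit`."""
    offset = bit * step / 2
    return step * round((value - offset) / step) + offset


def embed_bit(block, bit, step=8.0):
    """Shift all samples equally so the block mean lands on the lattice for `bit`."""
    mean = sum(block) / len(block)
    shift = quantize_to_lattice(mean, bit, step) - mean
    return [sample + shift for sample in block]


def extract_bit(block, step=8.0):
    """Decode by checking which lattice the block mean is closer to."""
    mean = sum(block) / len(block)
    d0 = abs(mean - quantize_to_lattice(mean, 0, step))
    d1 = abs(mean - quantize_to_lattice(mean, 1, step))
    return 0 if d0 <= d1 else 1


def box_blur(block):
    """3-tap blur with clamped edges, standing in for a mild smoothing edit."""
    n = len(block)
    return [
        (block[max(i - 1, 0)] + block[i] + block[min(i + 1, n - 1)]) / 3
        for i in range(n)
    ]


if __name__ == "__main__":
    host = [100, 120, 90, 110, 105, 95, 130, 80]
    marked = embed_bit(host, bit=1)
    # High-frequency jitter: alternating +2/-2 leaves the mean untouched.
    jittered = [s + (2 if i % 2 == 0 else -2) for i, s in enumerate(marked)]
    print(extract_bit(marked), extract_bit(box_blur(marked)), extract_bit(jittered))
```

Both distortions reshape fine detail but leave the block mean essentially unchanged, so the bit is still readable. An edit that shifts overall brightness by several levels would break this toy scheme, which is one reason practical systems spread the payload over many components.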

Industry weighs in too. Google’s SynthID embeds invisible signatures in the pixels themselves, designed to remain detectable after crops, resizes, or hue shifts. Yet caution tempers enthusiasm. Heavy processing can still mute the signal, as independent tests indicate. No single technique is bulletproof; overreliance risks blind spots in provenance tracking.

Integrating Watermarks with Royalty Rails for Creator Protection

Beyond detection, pairing watermarks with royalty rails turns them into economic safeguards. Platforms like AI Watermark Hub fuse embedding with tracking rails, automating licensing enforcement and revenue capture. Imagine a watermarked image circulating virally: the rails trace derivatives and trigger royalties automatically. This synergy protects not just authenticity but livelihoods in generative economies.

Academic repositories curate these strides, from the Awesome-GenAI-Watermarking list on GitHub to arXiv surveys spanning embedding strategies. InvisMark’s neural approach performs well in the evaluations published via CVF, supporting high-resolution viability. Still, I advise measured adoption: test rigorously against the edits in your own workflow, and pair watermarking with media literacy to counter misuse comprehensively.

Watermarking algorithms, as detailed in ScienceDirect overviews, extend beyond mere detection to secure multimedia distribution. They embed authenticated signatures for source tracking, directly countering deepfake proliferation. Yet, in my view, true robustness hinges on holistic distribution of markers throughout the image, not localized hotspots vulnerable to excision.

Comparing Leading Robust Watermarking Approaches

Evaluating these frameworks reveals distinct trade-offs between imperceptibility and endurance. RAW prioritizes theoretical guarantees against adversaries, ideal for high-security applications. InvisMark excels in visual fidelity for professional-grade AI art, while SimuFreeMark’s low-frequency focus simplifies integration without performance hits. JIGMARK’s contrastive strategy specifically targets diffusion editors, a growing threat in creative pipelines.

Comparison of Robust Watermark Frameworks for Synthetic Images

| Framework | Key Features | Robustness Highlights | Reference |
|---|---|---|---|
| RAW | Provable anti-removal guarantees, joint training of embedding and classifier | Adversarial attacks targeting watermark removal | [arXiv:2403.18774](https://arxiv.org/abs/2403.18774) |
| InvisMark | High-resolution AI-generated images, advanced neural networks for imperceptibility | >97% bit accuracy after cropping, compression, and edits | [arXiv:2411.07795](https://arxiv.org/abs/2411.07795) |
| SimuFreeMark | Low-frequency component embedding, no noise simulation required | Semantic edits and wide range of attacks | [arXiv:2511.11295](https://arxiv.org/abs/2511.11295) |
| JIGMARK | Contrastive learning with diffusion-processed image pairs | Diffusion-model-based editing, preserves image quality | [arXiv:2406.03720](https://arxiv.org/abs/2406.03720) |
| SynthID | Industry-scale pixel-embedded watermark (Google DeepMind) | Cropping, resizing, color adjustments | [DeepMind](https://www.yahoo.com/news/google-made-invisible-watermark-ai-190000294.html) |

SynthID stands out for practicality, surviving everyday tweaks like those in social sharing. Independent tests, however, expose limits: aggressive resizes or compressions erode confidence, underscoring that no method fully conquers all distortions yet. Creators must weigh imperceptibility against survival rates, favoring hybrids tailored to use cases.
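Weighing imperceptibility against survival is easier with a number on the imperceptibility side. One common choice, assumed here rather than taken from any of the frameworks above, is peak signal-to-noise ratio (PSNR) between the original and the watermarked image. The sketch below is a minimal pure-Python version for 8-bit grayscale images stored as nested lists; the function name and sample values are illustrative.

```python
import math


def psnr(original, marked, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized grayscale images."""
    squared_errors = [
        (a - b) ** 2
        for row_a, row_b in zip(original, marked)
        for a, b in zip(row_a, row_b)
    ]
    if not squared_errors:
        raise ValueError("images must contain at least one pixel")
    mse = sum(squared_errors) / len(squared_errors)
    # Identical images have zero error, so PSNR is unbounded.
    return math.inf if mse == 0 else 10 * math.log10(max_value ** 2 / mse)


if __name__ == "__main__":
    original = [[10, 20], [30, 40]]
    marked = [[11, 19], [30, 42]]  # small perturbation standing in for a watermark
    print(round(psnr(original, marked), 2))
```

Higher values mean the watermark changes fewer pixel intensities. PSNR does not model human perception directly, so it is best read alongside the robustness figures rather than on its own.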

Comparison of GenAI Watermarking Techniques: Robustness to AI Editing, Cropping, and Detection

| Technique | Robustness to AI Editing | Robustness to Cropping | Detection Notes |
|---|---|---|---|
| RAW Framework | Provable guarantees against adversarial attacks | N/A | Improved detection under attacks |
| InvisMark | State-of-the-art across manipulations | High (>97% bit accuracy) | 97%+ bit accuracy post-edits ✅ |
| SimuFreeMark | Robust to semantic edits (low-frequency) | High (inherent stability) | Simplified robust detection |
| JIGMARK | High resilience to diffusion-model edits | N/A | Preserves quality & detectability |
| SynthID (Google DeepMind) | Survives common edits like color adjustments | Survives cropping & resizing | Imperceptible but detectable post-edits |

Workshops like those from AI for Good emphasize standards harmonization, pushing watermark interoperability across platforms. This matters for watermark survival in practice: a detector in one ecosystem should recognize marks embedded by another. Curated lists on GitHub such as Awesome-GenAI-Watermarking track these developments, offering developers a roadmap amid rapid iteration.

Practical Deployment and Royalty Integration

Deploying robust watermarks demands workflow scrutiny. Start with baseline testing: embed the watermark, crop 25% from the edges, compress to web standards, then apply a semantic edit. A bit error rate under 3% after each step is a reasonable sign of viability. Tools from Imatag scale invisible verification, proving provenance at volume without preprocessing hassles.
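The baseline test reduces to comparing the embedded payload with what the detector recovers after each edit. The sketch below is a minimal pure-Python version of that check under stated assumptions: the function names and the sample payloads are hypothetical, the recovered bits would come from whichever detector is under test, and the 3% threshold is the figure quoted above.

```python
def bit_error_rate(sent, received):
    """Fraction of payload bits that differ between embedding and recovery."""
    if not sent or len(sent) != len(received):
        raise ValueError("payloads must be non-empty and of equal length")
    errors = sum(a != b for a, b in zip(sent, received))
    return errors / len(sent)


def passes_baseline(sent, recovered_by_edit, max_ber=0.03):
    """Return (overall verdict, per-edit BER) for a dict of edit name -> recovered bits."""
    report = {
        name: bit_error_rate(sent, bits) for name, bits in recovered_by_edit.items()
    }
    return all(ber < max_ber for ber in report.values()), report


def flip(bits, positions):
    """Helper for the demo: flip the bits at the given positions."""
    return [1 - b if i in positions else b for i, b in enumerate(bits)]


if __name__ == "__main__":
    payload = [i % 2 for i in range(100)]
    # Stand-ins for detector output after each edit; real values come from your tool.
    recovered = {
        "crop_25_percent": flip(payload, {3, 50}),
        "web_compression": flip(payload, {1, 20, 40, 60, 80}),
    }
    ok, report = passes_baseline(payload, recovered)
    print(ok, report)
```

Reporting the error rate per edit, rather than a single pass or fail, shows which step in a workflow is doing the damage.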

Layering royalty rails on top of watermarking turns defense into revenue. AI Watermark Hub exemplifies this, linking imperceptible embeds to blockchain-ledgered tracking. A viral synthetic portrait, cropped and restyled, still pings its origin on scan, auto-distributing micro-royalties to the generator. This closes the loop for creators, deterring theft while incentivizing ethical sharing.

Challenges persist, particularly semantic manipulations that mimic human intent. NIH-backed detectors probe deep watermarks adversarially and show that passive forensics retains an edge on unaltered images. Yet, as Emergent Mind notes, watermark signals attest origin far more strongly when fused with metadata.

Forward momentum builds through collaboration. Surveys on arXiv dissect embedding dimensions, from frequency domains to neural decoders, forecasting hybrid paradigms. Pair these with policy nudges for mandatory watermarking in high-risk media. For now, cautious adopters gain the edge: fortified authenticity sustains trust, while royalties reward innovation. In generative floods, resilient watermarks anchor reality.