In an era where AI-generated speech can mimic anyone's voice with eerie precision, the threat of deepfake audio looms large. From fraudulent calls scamming millions to manipulated political speeches sowing discord, synthetic audio detection has become a frontline defense. Invisible audio watermarks offer a methodical solution: imperceptible markers embedded in sound waves that specialized detectors can uncover, enabling deepfake audio prevention without altering what listeners hear.

These invisible AI audio markers work by weaving unique digital signatures into the audio's frequency spectrum. Unlike visible stamps on images, they survive compression, noise addition, and even some adversarial attacks, making them a conservative choice for protecting authenticity. As generative AI proliferates, platforms like AI Watermark Hub integrate such tools with royalty rails for AI audio, ensuring creators track and monetize content securely.

Core Mechanics of Audio Watermarking

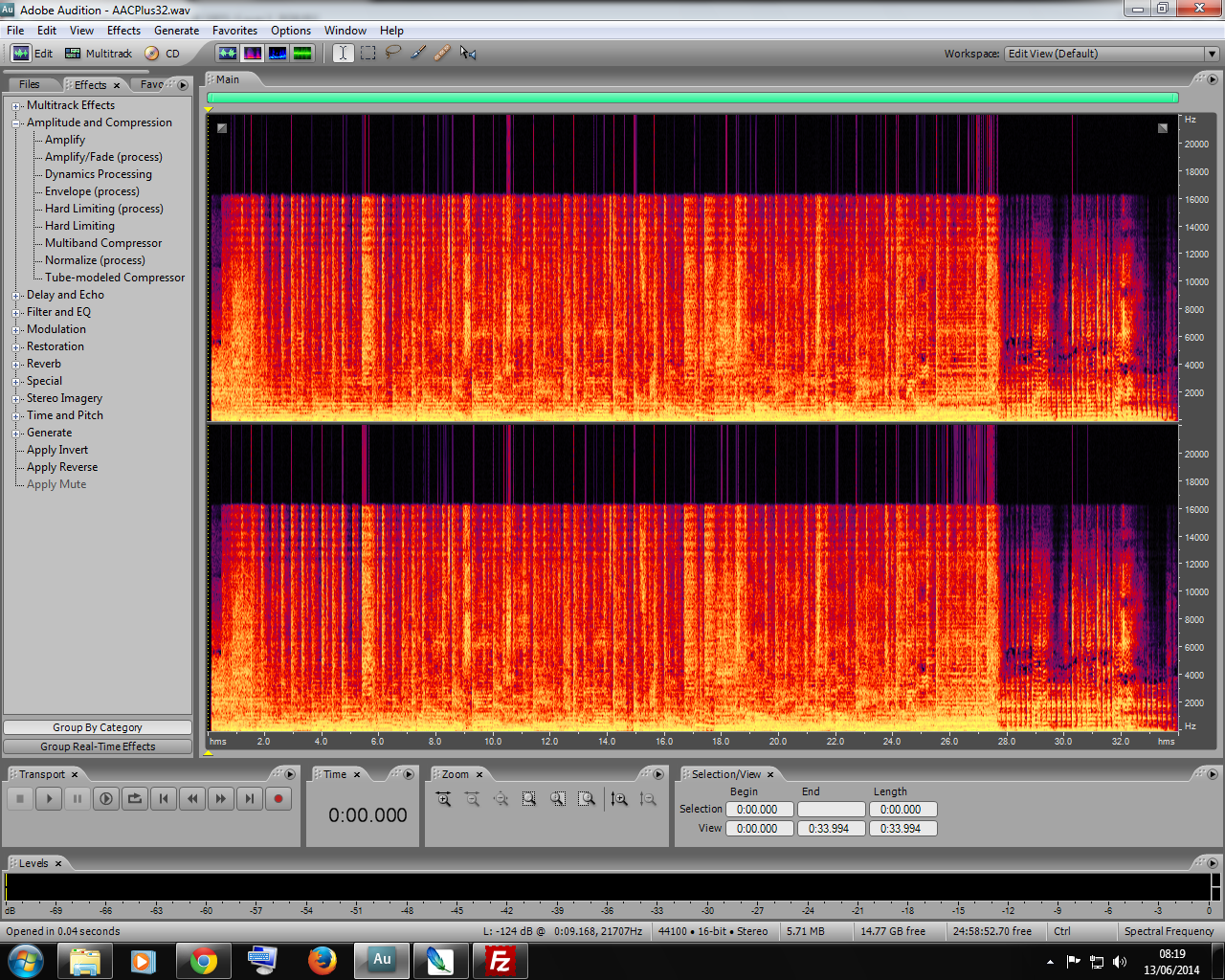

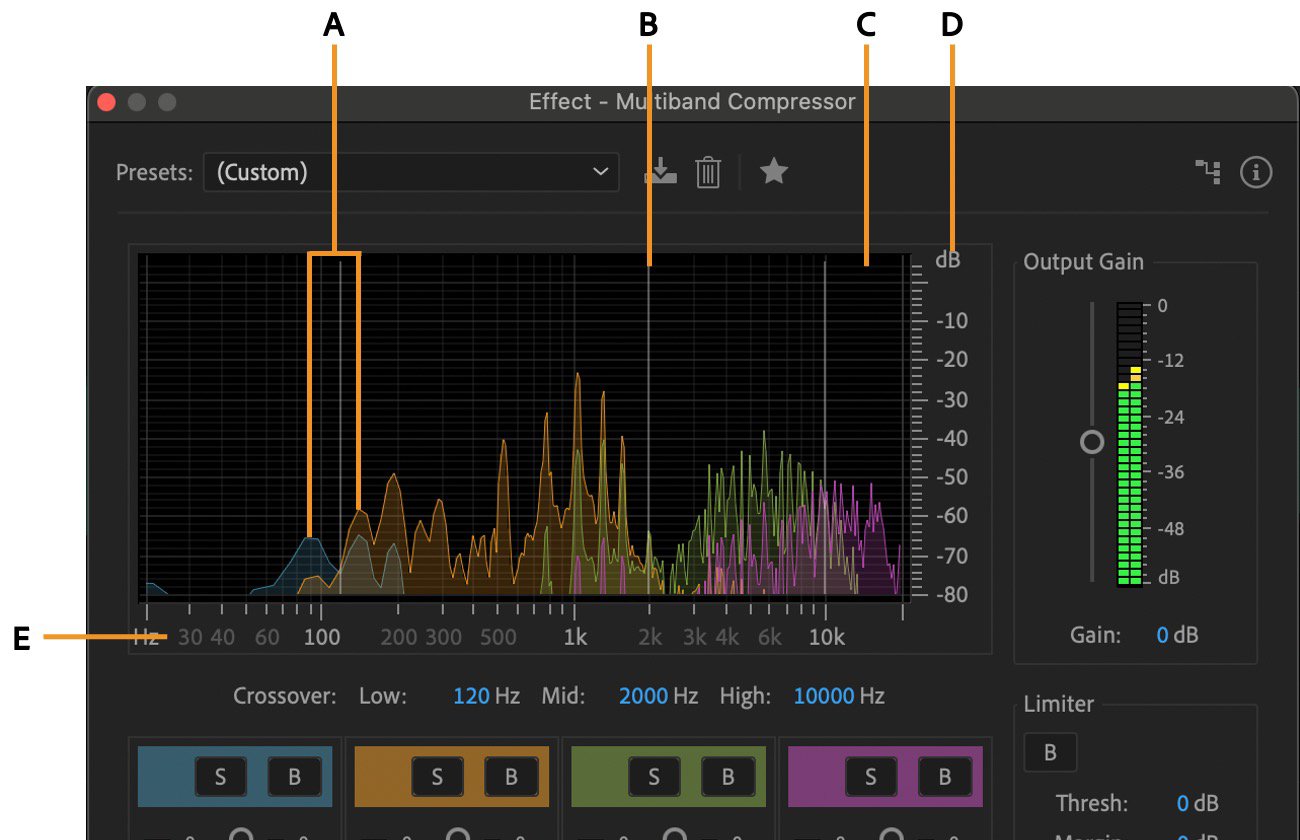

At their essence, invisible audio watermarks exploit the human auditory system's limits. Our ears perceive sound up to about 20 kHz, but watermarks often hide in ultrasonic ranges or modulate least significant bits in the digital signal. This approach, rooted in digital signal processing, ensures the watermark remains inaudible yet extractable.

Consider spread-spectrum techniques, a staple in robust watermarking. Here, the signature spreads across the entire audio bandwidth, akin to noise but patterned for detection. Detectors correlate incoming audio against known keys, yielding high confidence in authenticity. This methodical embedding withstands re-encoding or filtering, outperforming forensic methods like spectral analysis that falter on high-fidelity fakes.

Benefits of Invisible Audio Watermarks

- Robustness to Edits: Watermarks like those from Watermarked.ai and Resemble AI's PerTh endure manipulations such as compression and noise addition.

- Easy Verification: Specialized software detects imperceptible signals, as in Steg.AI and Meta Seal, enabling quick authenticity checks.

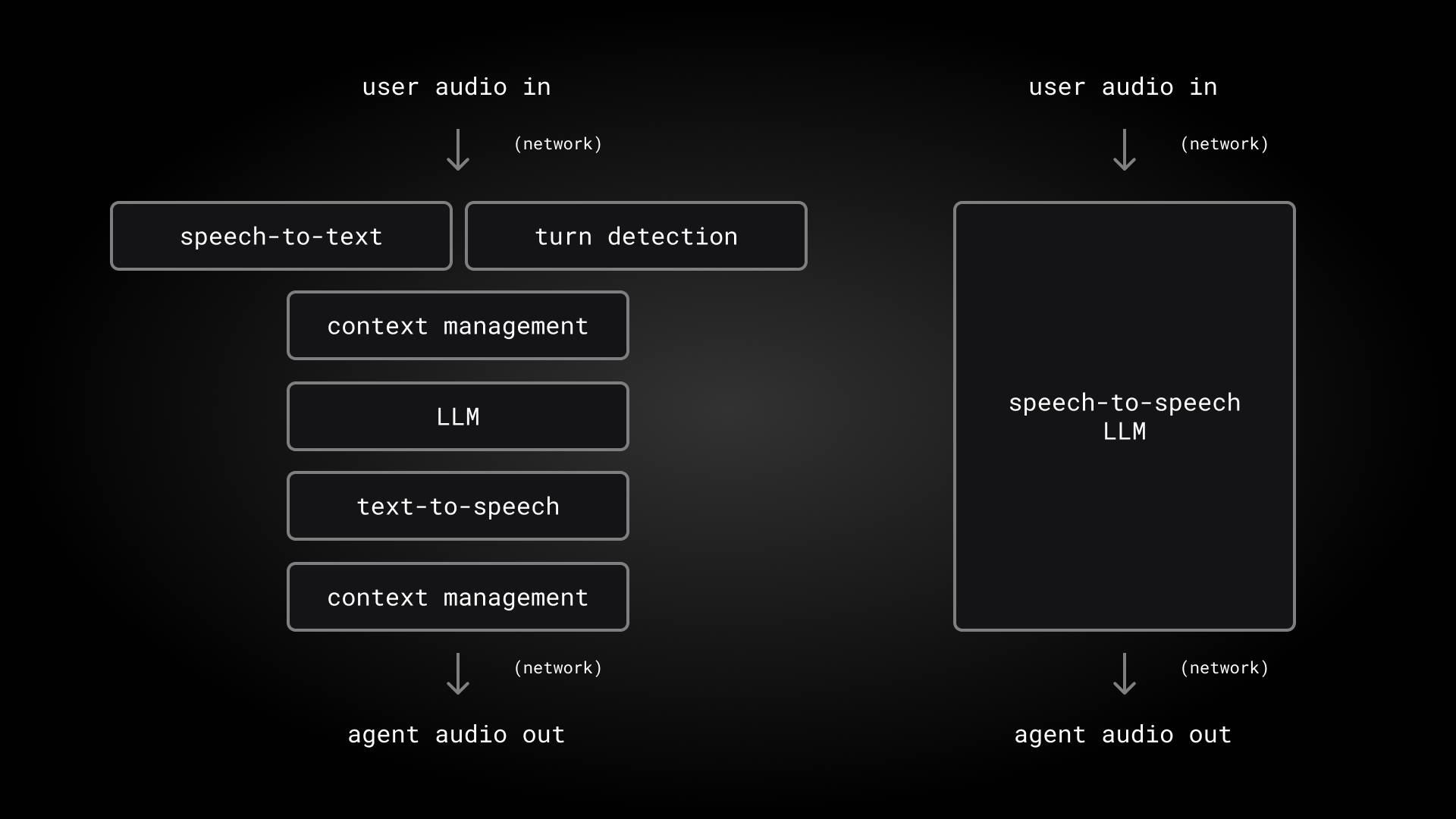

- Integration with AI Audio Pipelines: Embeddable post-generation, e.g., Meta Seal framework supports seamless workflow integration for AI-generated speech.

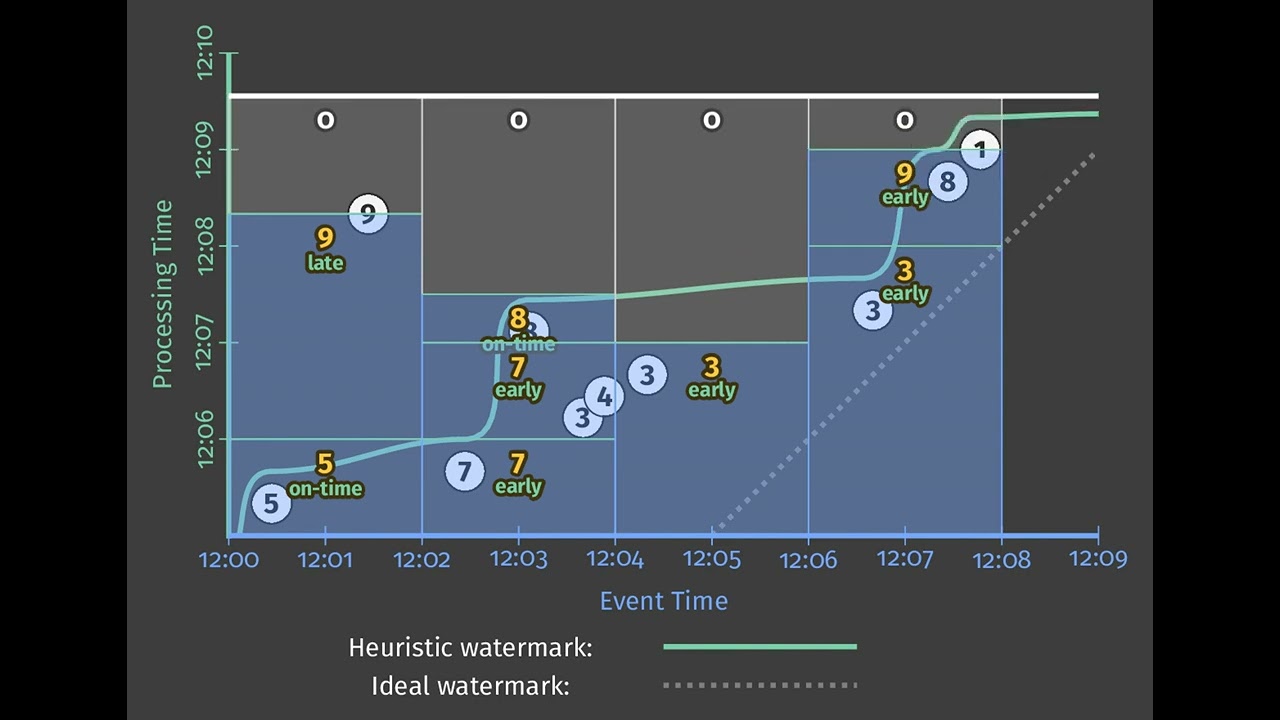

- Scalability for Real-Time Detection: Designed for efficient processing, supporting real-time deepfake identification in platforms like Resemble AI.

- Minimal Quality Impact: Imperceptible to listeners, preserving audio fidelity as confirmed in Resemble AI's PerTh and Watermarked.ai implementations.

Yet, effectiveness hinges on design. Fragile watermarks shatter under edits, signaling tampering; robust ones persist, ideal for provenance tracking. Balancing these traits demands conservative engineering, prioritizing capital protection for audio assets over flashy novelty.

Trailblazing Tools Shaping Synthetic Audio Detection

Recent developments underscore watermarking's maturity. Watermarked. ai pioneers undetectable embeds that poison AI training data while flagging deepfakes, robust against manipulations like speed changes or reverb. Steg. AI leverages deep learning for audio stamps, verifying origins post-alteration without quality loss.

Resemble AI's PerTh Watermarker stands out, injecting sonic signatures into generated speech that endure common edits. Meanwhile, Meta's open-source Seal framework extends watermarking to audio, embedding post-generation for broad applicability. These tools align with standards like C2PA, fostering interoperability in multimedia authenticity.

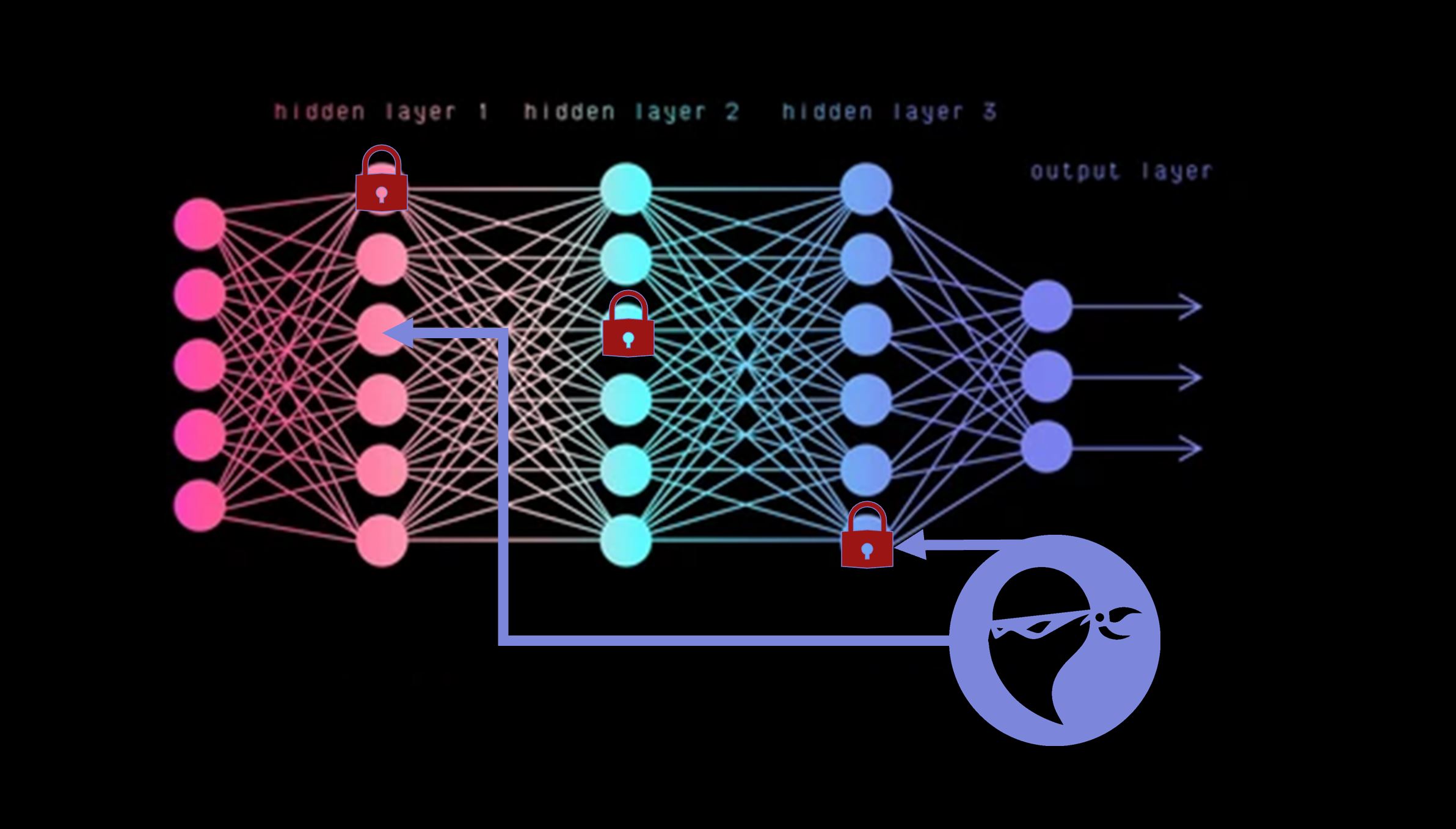

Academic advances bolster industry efforts. FaceSigns employs semi-fragile neural methods, embedding messages fragile to fakes but tolerant of benign processing. FakeMark innovates by pairing injected watermarks with deepfake artifacts, enhancing attribution for synthetic audio detection.

Navigating Robustness in Adversarial Environments

Conservative deployment acknowledges vulnerabilities. While watermarks resist casual edits, sophisticated actors might strip them via generative inpainting, as seen in image domains. Tools omitting markers exacerbate risks, underscoring the need for universal adoption.

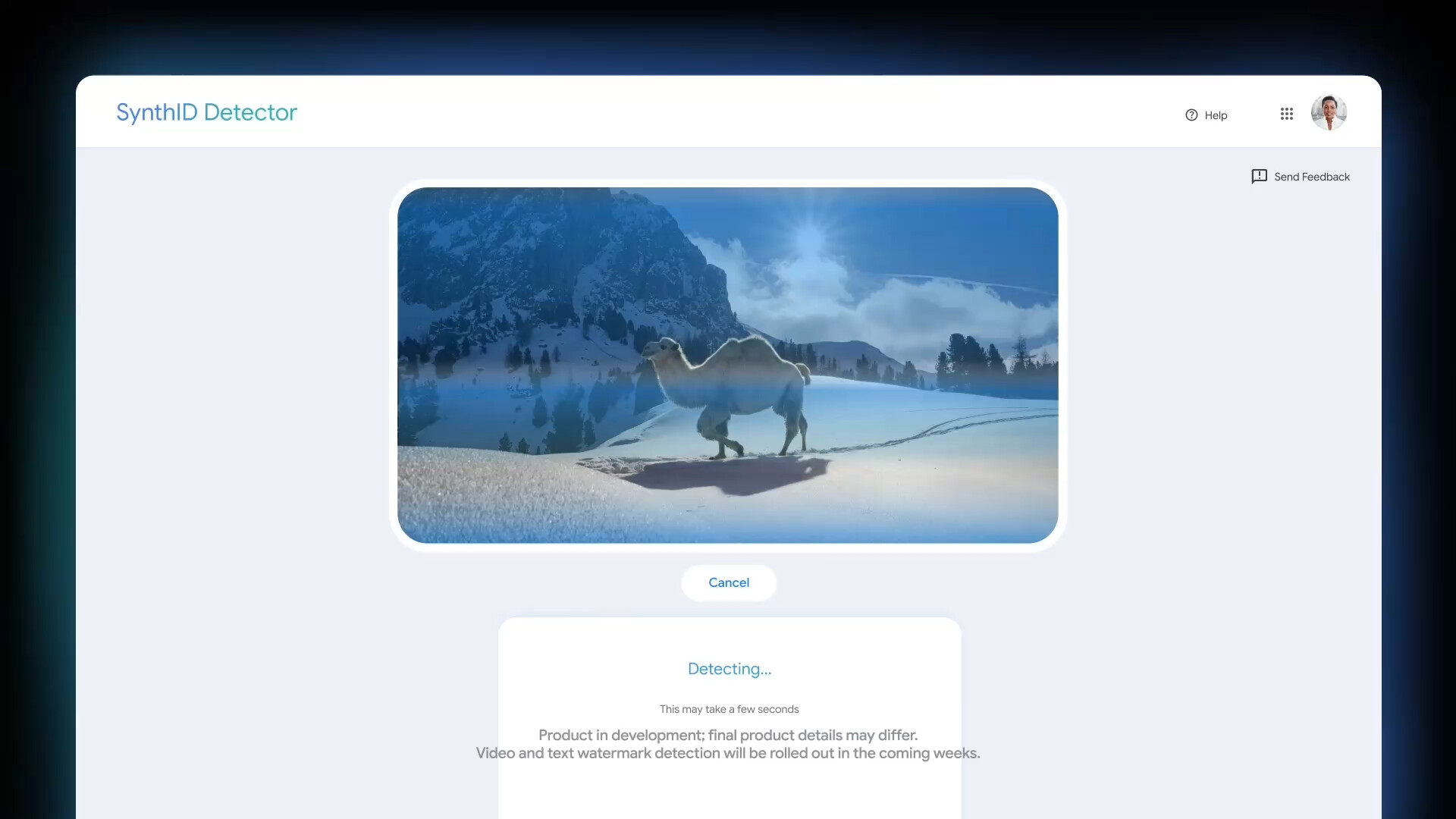

Still, layered defenses prevail: combine watermarks with blockchain-ledgered royalty rails AI audio for tamper-proof provenance. Pindrop's insights affirm watermarking's role in distinguishing live from synthetic speech, vital as deepfake calls surge. Google's SynthID evolves toward audio, promising cross-modal detection.

To fortify these systems against evolving threats, conservative strategies emphasize multi-layered protection. Pairing audio watermarking deepfakes with behavioral biometrics and chain-of-custody logs creates resilient barriers. Platforms such as AI Watermark Hub exemplify this by fusing invisible markers with royalty rails for AI audio, safeguarding content from inception to distribution while enabling seamless monetization.

Overcoming Persistent Hurdles

Deployment reveals friction points that demand measured responses. Adversaries wielding advanced generative models can excise watermarks, mirroring exploits in visual domains where AI inpainting erases signatures. Open-source generators bypassing embeds compound the issue, as do compression artifacts in streaming pipelines that dilute signals. Pindrop underscores this duality: while watermarking excels in controlled settings, real-world telephony mangles audio, testing robustness limits.

Regulatory gaps further complicate adoption. Absent mandates, voluntary standards like C2PA falter against non-compliant actors. Brookings analyses highlight watermarking's policy shortcomings; detection alone fails without enforcement teeth. A conservative stance prioritizes hybrid vigilance: watermarks as sentinels, not saviors, backed by forensic backups and user education.

Balancing fragility and endurance proves pivotal. Semi-fragile designs, as in FaceSigns research, detect manipulations by design; they degrade predictably under forgery yet tolerate noise. FakeMark elevates this by fusing extrinsic marks with intrinsic flaws, attributing fakes to specific generators. Such innovations signal maturity, yet require rigorous validation across diverse accents and environments.

Practical Integration for Creators and Enterprises

For content originators, embedding invisible AI audio markers streamlines workflows without overhead. AI Watermark Hub's suite automates injection during generation, verifies on playback, and triggers royalty rails AI audio for usage tracking. Podcasters tag episodes preemptively; enterprises audit calls for authenticity. This closed loop minimizes disputes, conserving resources amid proliferation.

Scalability shines in high-volume scenarios. Real-time detectors process streams with sub-second latency, fitting teleconferencing or broadcast. Resemble AI's PerTh exemplifies endurance, surviving pitch shifts and echoes common in voiceovers. Steg. AI's neural embeds adapt to payloads, encoding metadata like creator IDs for granular provenance.

Steps to Implement Audio Watermarks

- 1. Generate watermarked audio: Use real tools like Watermarked.ai or Resemble AI's PerTh Watermarker to embed imperceptible signatures during synthesis.

- 2. Test robustness via edits: Apply manipulations like compression, noise addition, and re-encoding; verify detection with the tool's decoder, as watermarks from Steg.AI remain robust.

- 3. Integrate detection in pipeline: Embed Meta Seal decoder into verification workflows for real-time authenticity checks.

- 4. Enable monetization tracking: Link watermark provenance to content platforms for usage monitoring and royalty attribution, preventing unauthorized AI reuse.

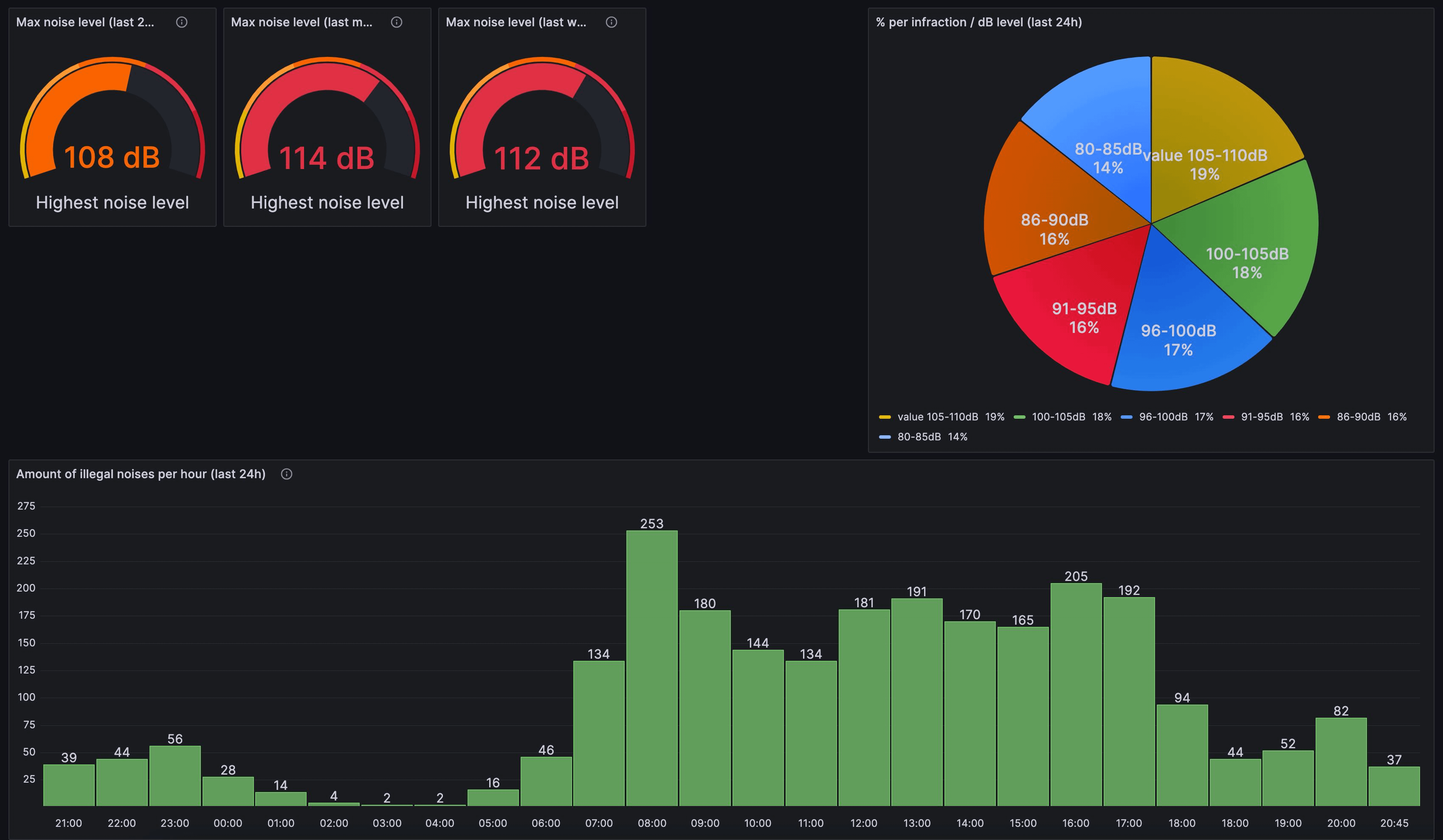

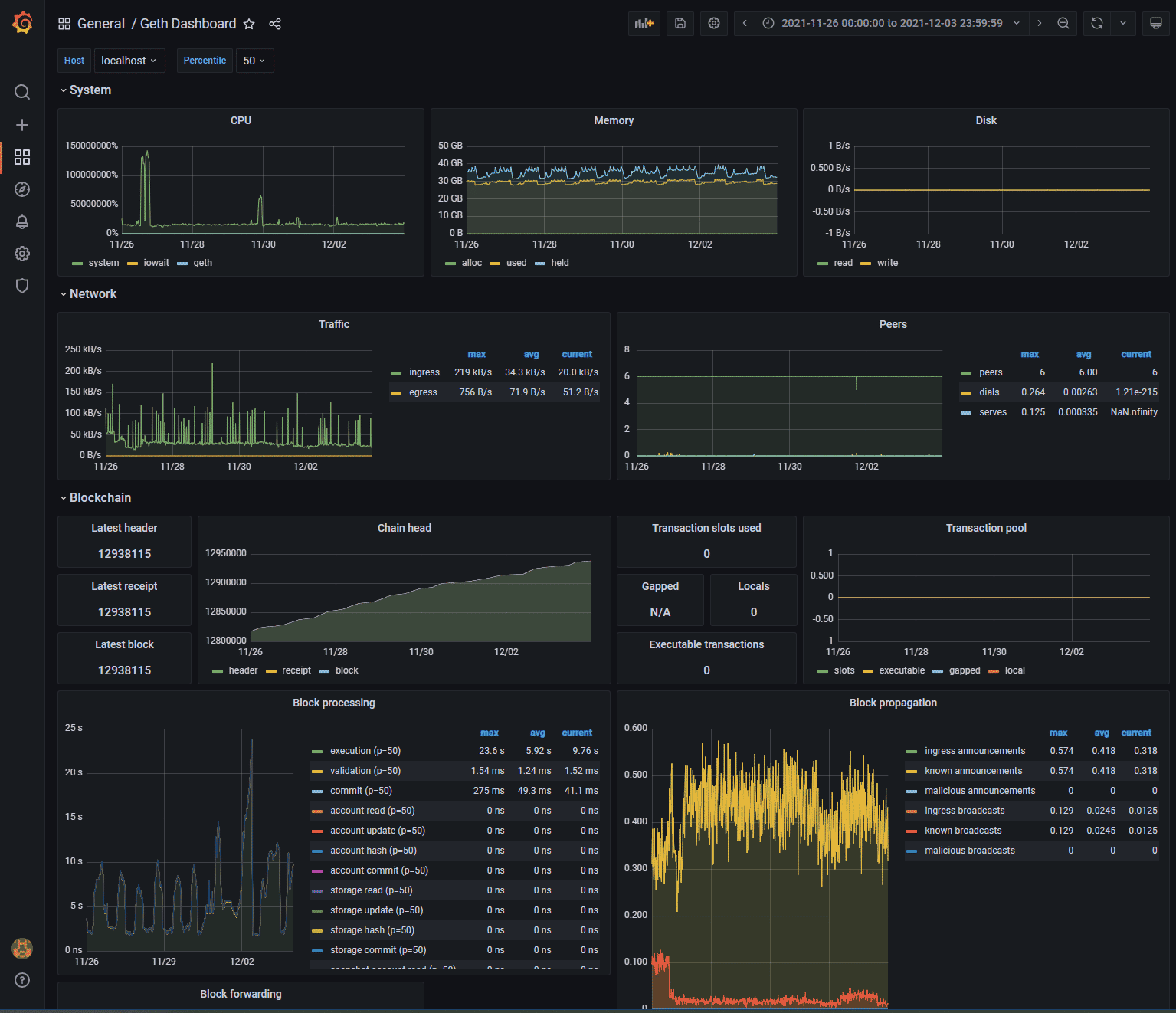

- 5. Monitor via analytics dashboard: Track detections, tampering attempts, and usage stats on platforms like Watermarked.ai or Steg.AI dashboards.

Enterprises benefit from API-driven ecosystems. Watermarked. ai disrupts unauthorized training by tainting datasets, a preemptive strike on derivative deepfakes. Meta Seal's open toolkit invites customization, fostering community-driven refinements. These convergences position watermarking as infrastructure, not afterthought.

Charting a Secure Trajectory

Prospects brighten with concerted momentum. SynthID's audio extensions promise universal fingerprints, while workshops like AI for Good standardize protocols. Yet prudence dictates skepticism toward panaceas; layered architectures, blending watermarks, artifacts, and ledgers, fortify best. As deepfake audio prevention matures, it shields discourse integrity without stifling creativity.

Creators wielding these tools protect legacies methodically: embed signatures conservatively, verify rigorously, monetize judiciously. In synthetic audio detection's arena, resilience trumps novelty, ensuring genuine voices resonate unmolested. AI Watermark Hub leads this charge, harmonizing protection with prosperity in generative frontiers.

No comments yet. Be the first to share your thoughts!