In an era where video calls bridge continents in seconds, the shadow of real-time deepfakes looms large, turning trusted conversations into potential minefields. Imagine receiving a frantic call from what appears to be a family member in distress, only to realize later it was a scammer wielding AI-forged video. These synthetic media video call scams exploit the seamlessness of platforms like Zoom and Teams, eroding trust at its core. As generative AI advances, so does the urgency for proactive defenses like real-time deepfake watermarking, which embeds invisible markers during live streams to verify authenticity instantaneously.

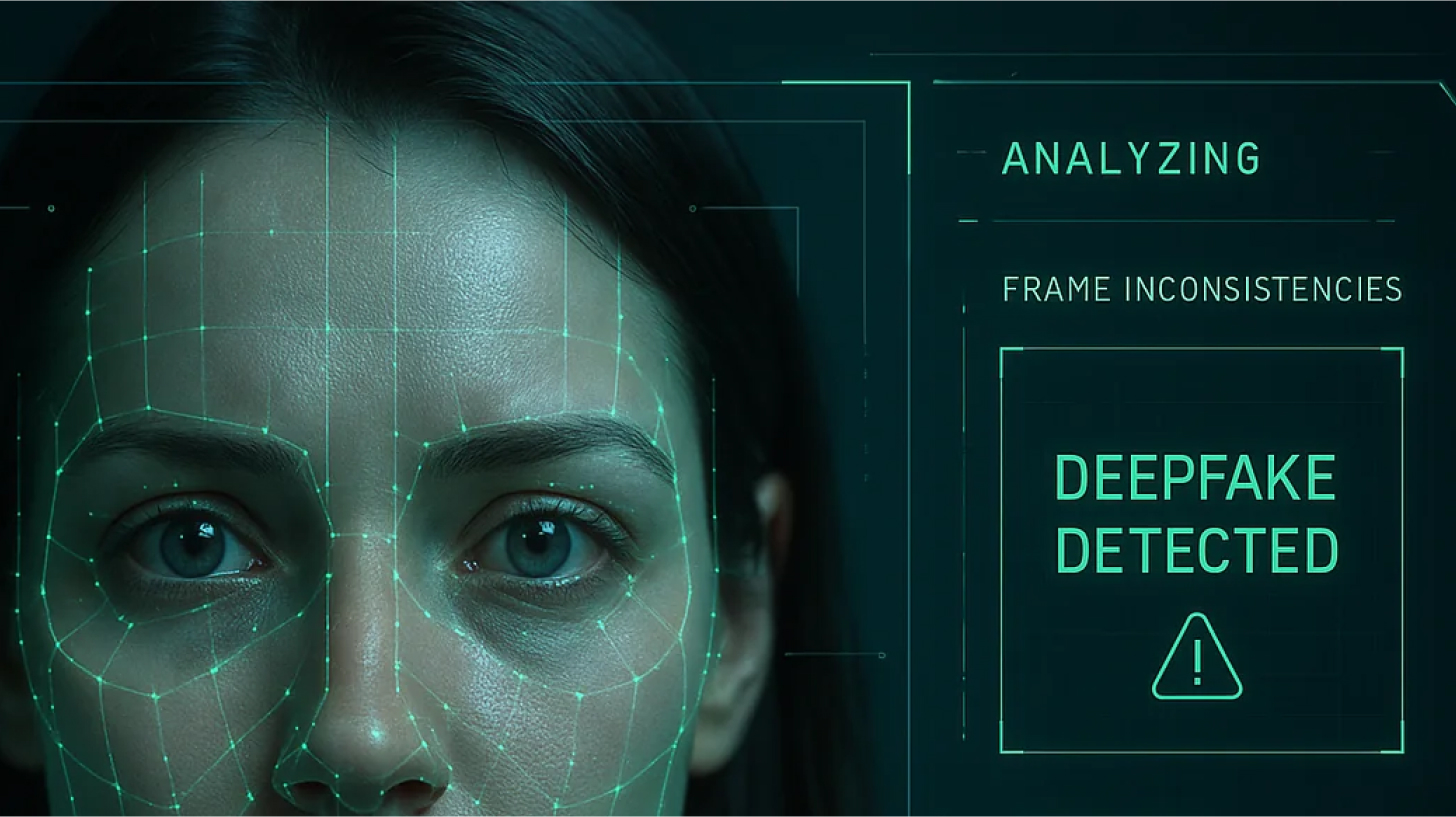

The Subtle Art of Spotting Deepfake Intrusions

Traditional safeguards struggle against live deepfakes because the fakes mimic human nuances convincingly. Cybersecurity reporting indicates that many real-time models break down on profile views: asking the caller to turn sideways often exposes distortions, because models trained mostly on frontal faces handle angles poorly. Yet relying on such manual checks feels archaic in high-stakes scenarios like executive negotiations or family emergencies. Sources from Adaptive Security and Fortune recommend countermeasures such as unexpected requests, safe words, and multi-factor identity probes, but these are reactive band-aids on a hemorrhaging wound.

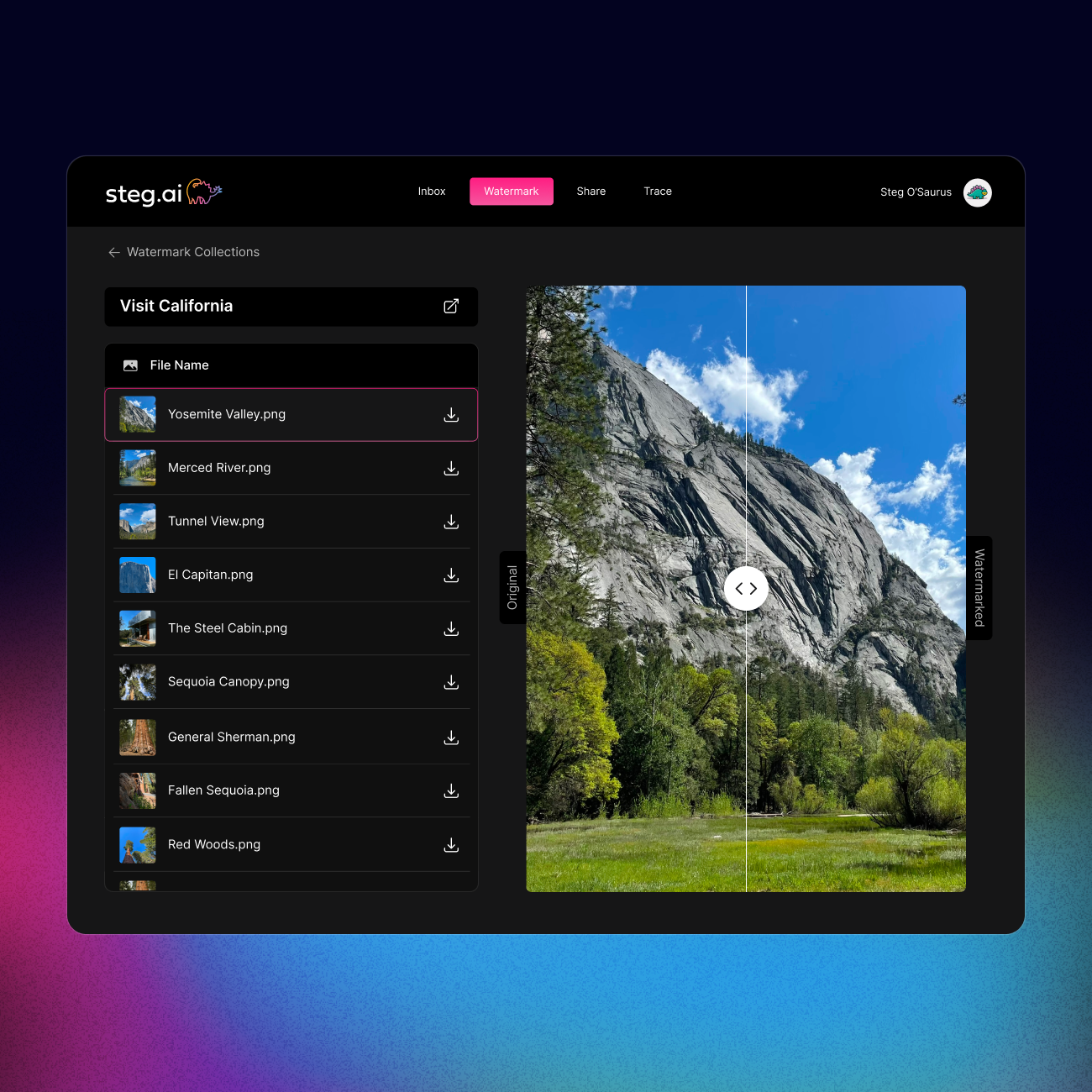

Deepfake fraud surges unabated, with impersonations of trusted figures fueling financial scams and emotional manipulation. Prompt reporting helps, yet prevention demands embedding authenticity from the outset. Watermarking images online discourages repurposing, per WREX tips, but for live video calls, dynamic solutions are paramount. The Reddit discourse on carrier protections underscores network-level hurdles for voice deepfakes, hinting at why video demands tailored armor.

Live Watermark Embedding: A Fortress in the Stream

At its essence, live watermark embedding injects cryptographic signatures into video feeds mid-transmission, imperceptible to the eye yet tamper-evident. Unlike static watermarks, these adapt in real time: they are designed to survive compression and minor edits while breaking under deepfake alterations. This semi-fragile design, echoed in academic works like FaceSigns, authenticates media by verifying an embedded secret.
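The semi-fragile idea can be illustrated with a small Python sketch. It is not FaceSigns or any vendor's method: instead of hiding bits in pixels with a learned encoder, it signs coarse block averages of each frame with an HMAC and ships that signed payload alongside the frame. The names `block_means`, `sign_frame`, `verify_frame`, the 4x4 grid, and the tolerance value are all assumptions chosen for illustration, and frames are modeled as plain 2D lists of grayscale values.

```python
import hashlib
import hmac
import struct

BLOCKS = 4        # hypothetical: split each frame into a 4x4 grid
TOLERANCE = 6.0   # hypothetical: allowed drift per block mean (0-255 scale)


def block_means(frame, blocks=BLOCKS):
    """Average brightness of each grid cell in a 2D list of 0-255 values."""
    height, width = len(frame), len(frame[0])
    bh, bw = height // blocks, width // blocks
    means = []
    for by in range(blocks):
        for bx in range(blocks):
            total = 0
            for y in range(by * bh, (by + 1) * bh):
                total += sum(frame[y][bx * bw:(bx + 1) * bw])
            means.append(total / (bh * bw))
    return means


def sign_frame(key, index, frame):
    """Return (payload, tag): frame index plus rounded block means, HMAC-signed."""
    feats = [round(m) for m in block_means(frame)]
    payload = struct.pack(f">I{len(feats)}B", index, *feats)
    tag = hmac.new(key, payload, hashlib.sha256).digest()
    return payload, tag


def verify_frame(key, index, frame, payload, tag):
    """Accept small global drift; reject forged payloads or large local changes."""
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(expected, tag):
        return False  # payload was not signed with this key
    if struct.unpack(">I", payload[:4])[0] != index:
        return False  # payload belongs to a different frame
    signed_feats = payload[4:]
    received = block_means(frame)
    return all(abs(m - f) <= TOLERANCE for m, f in zip(received, signed_feats))
```

Under these assumptions, a uniform brightness shift of a couple of levels still verifies, while overwriting a region of the frame pushes at least one block mean past the tolerance and fails the check. A production scheme would need features that hold up under real codec artifacts and a way to carry the payload inside the stream itself.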

I view this as a paradigm shift: it's not mere detection but proactive provenance. Platforms now integrate such tech seamlessly, analyzing streams for anomalies while using live AI detection to support royalty tracking on synthetic content. Resemble AI exemplifies this, monitoring audio, video, and context in meetings to flag synthetics, bolstered by industry-standard markers verifiable on the fly.

Watermarked.ai disrupts AI training by poisoning datasets with unremovable marks, extending to live scenarios where detection trumps generation. AuthMark's continuous authentication signs content at birth, weaving multi-modal checks into communication tools for immediate threat neutralization.
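To make "continuous signing" concrete, here is a minimal Python sketch of one way to chain per-frame tags so that altering, dropping, or reordering a frame breaks every later link. This is an assumption about how such a scheme could work, not a description of AuthMark's implementation. The helper names `chain_tags` and `first_broken_link` and the `GENESIS` constant are hypothetical, and the sketch signs exact frame bytes, so it assumes frames reach the verifier unmodified (a real deployment would chain over something that survives re-encoding).

```python
import hashlib
import hmac

GENESIS = b"\x00" * 32  # hypothetical starting link agreed on by both ends


def chain_tags(key, frames):
    """Produce one tag per frame; each tag also covers the previous tag."""
    prev = GENESIS
    tags = []
    for frame_bytes in frames:
        digest = hashlib.sha256(frame_bytes).digest()
        prev = hmac.new(key, prev + digest, hashlib.sha256).digest()
        tags.append(prev)
    return tags


def first_broken_link(key, frames, tags):
    """Return the index of the first frame that fails the chain, or None."""
    prev = GENESIS
    for i, (frame_bytes, tag) in enumerate(zip(frames, tags)):
        digest = hashlib.sha256(frame_bytes).digest()
        expected = hmac.new(key, prev + digest, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, tag):
            return i
        prev = tag
    return None
```

The receiver can run `first_broken_link` incrementally as frames arrive and raise an alert at the first mismatch, which is what makes the check continuous rather than a one-time handshake.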

Deploying Real-Time Defenses Without Friction

Implementation thrives on lightweight agents; Kidas exemplifies non-intrusive analysis for Zoom and Meet, dissecting streams sans lag. My insight: true resilience balances sophistication with usability, ensuring executives and families adopt without hesitation. Here's where layered strategies shine.

Key Benefits of Live Watermark Embedding

- Instant verification during calls: Cryptographic markers enable real-time authenticity checks in video meetings, as with Resemble AI.

- Tamper-evident markers: Semi-fragile watermarks detect facial manipulations while surviving benign edits, per FaceSigns research on arXiv.

- Royalties for synthetic media creators: Provenance tracking in watermarks supports usage monitoring and compensation, enhancing creator ecosystems amid deepfake proliferation.

- Reduced scam vulnerability: Imperceptible watermarks disrupt deepfake generation and enable proactive detection, as in Watermarked.ai, minimizing fraud risks.

Nextcloud and AML Incubator guides advocate software pairings with behavioral cues, yet watermarking elevates this to automated vigilance. Channel V6's reporting emphasis pairs well with tech that preempts deception, fostering an ecosystem where synthetic media bows to verified reality. As threats evolve, these tools not only detect but deter, reshaping video call security profoundly.

Layered defenses demand clarity on what works best against synthetic media video call scams. Enterprises and individuals alike benefit from platforms that prioritize real-time deepfake watermarking without compromising flow. Consider the tactical edge: watermarking not only flags fakes but enforces provenance chains, crucial as AI blurs personal and professional boundaries.

Benchmarking the Vanguard Solutions

Comparison of Top Real-Time Deepfake Protection Tools

| Platform | Core Tech (watermarking/detection) | Platforms Supported (Zoom/Teams/Meet) | Key Strength |
|---|---|---|---|
| Watermarked.ai | Dataset poisoning watermarks | All major | Unremovable marks |
| Resemble AI | Audio-video-context analysis + watermarking | Zoom/Teams/Meet | Instant verification |
| AuthMark | Continuous signing + multi-modal analysis | Comms tools (Zoom/Teams/Meet) | Real-time verification |
| Kidas | Real-time stream analysis | Zoom/Meet/Teams | Lightweight |

Competitive Landscape of Deepfake Video Call Protection Tools

| Tool | Key Feature | Strengths | Best For |
|---|---|---|---|
| Watermarked.ai | Preemptive poisoning | Renders stolen feeds useless for deepfake training 💀 | Preventing model theft |
| Resemble AI | Contextual scrutiny | Catches nuances beyond pixel analysis 👁️ | Subtle deepfake detection in meetings |
| AuthMark | Continuous signing | Mimics digital notarization, survives transmission 📜 | Secure multi-modal comms |
| Kidas | Seamless integration | Unnoticed in calls, user-friendly 🎯 | Non-tech users vs family scams |
| Hybrid Approach | Behavioral + embedded markers | Maximizes coverage, fixes carrier blind spots 🔗 | Comprehensive video protection |

This evolution demands vigilance, yet optimism prevails. Real-time deepfake watermarking isn't a patch; it's foundational architecture, restoring video calls' sanctity. In uncertain digital tides, embedding truth ensures conversations remain human at heart, scams relegated to footnotes in tech history.
