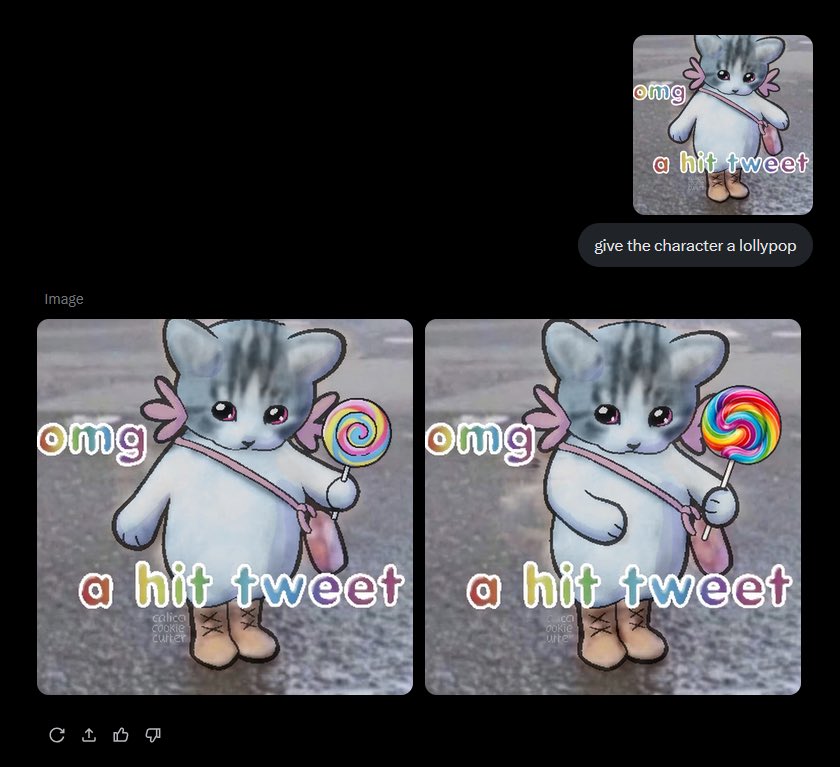

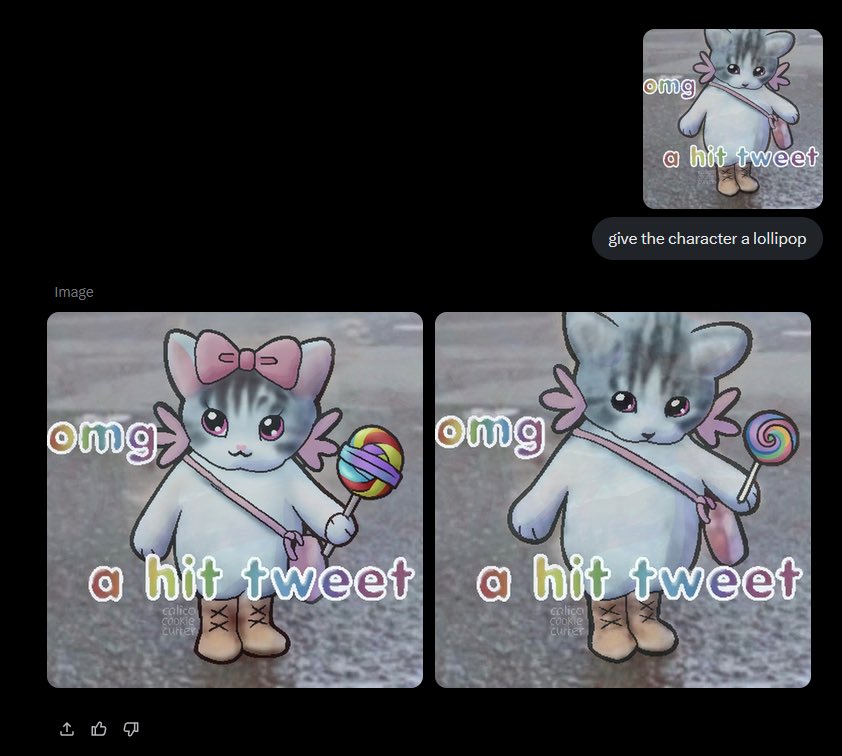

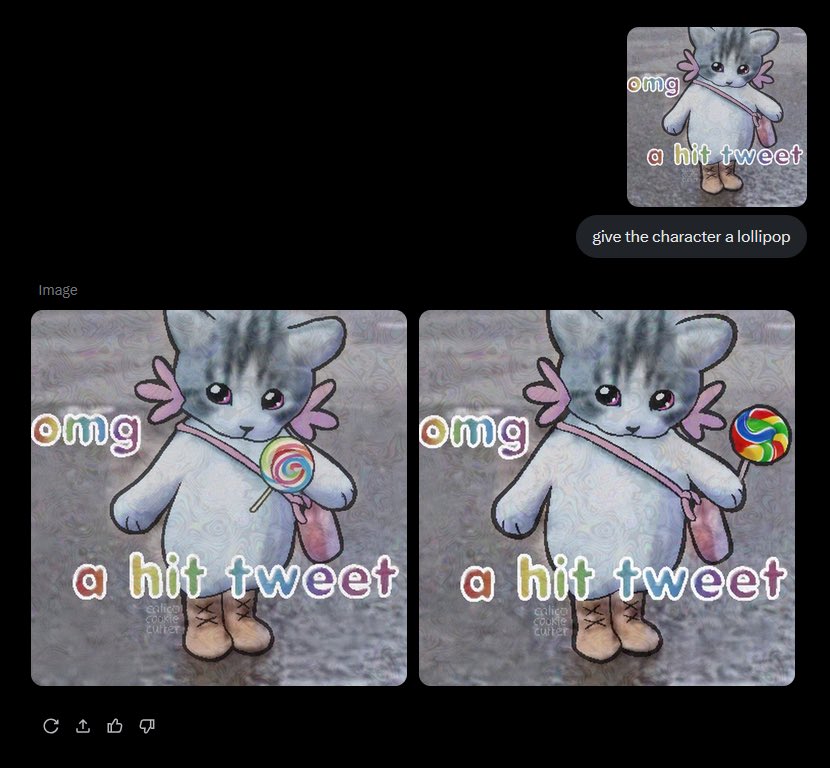

In the shadowed corners of social platforms like Pinterest and Instagram, artists watch helplessly as their meticulously crafted digital artworks vanish into the maw of AI training datasets. One moment, your intricate line work or vibrant color palettes inspire fans; the next, they're fodder for generative models spitting out pale imitations. This isn't hyperbole- it's the stark reality for creators in 2026. AI-resistant invisible watermarks emerge as a bulwark, embedding safeguards so subtle they evade human detection yet thwart machine learning algorithms bent on replication or theft.

Consider the chorus of voices from artists' communities. Posts on Facebook's Artists Against Generative AI group detail manual invisible watermark experiments, while Instagram reels from overlai. app boast year-long refinements to outsmart removal tools. Even Etsy sellers hawk AI-resistant "PROOF" PNG overlays, signaling a grassroots scramble for defense. Yet, as a specialist in synthetic media watermarking, I caution: not all solutions hold up. Many falter under AI's relentless editing prowess, from inpainting to style transfer. True protection demands watermarks engineered to survive these assaults, preserving your style's uniqueness amid the generative AI surge.

The Imperative for Robust Watermarking in Synthetic Media

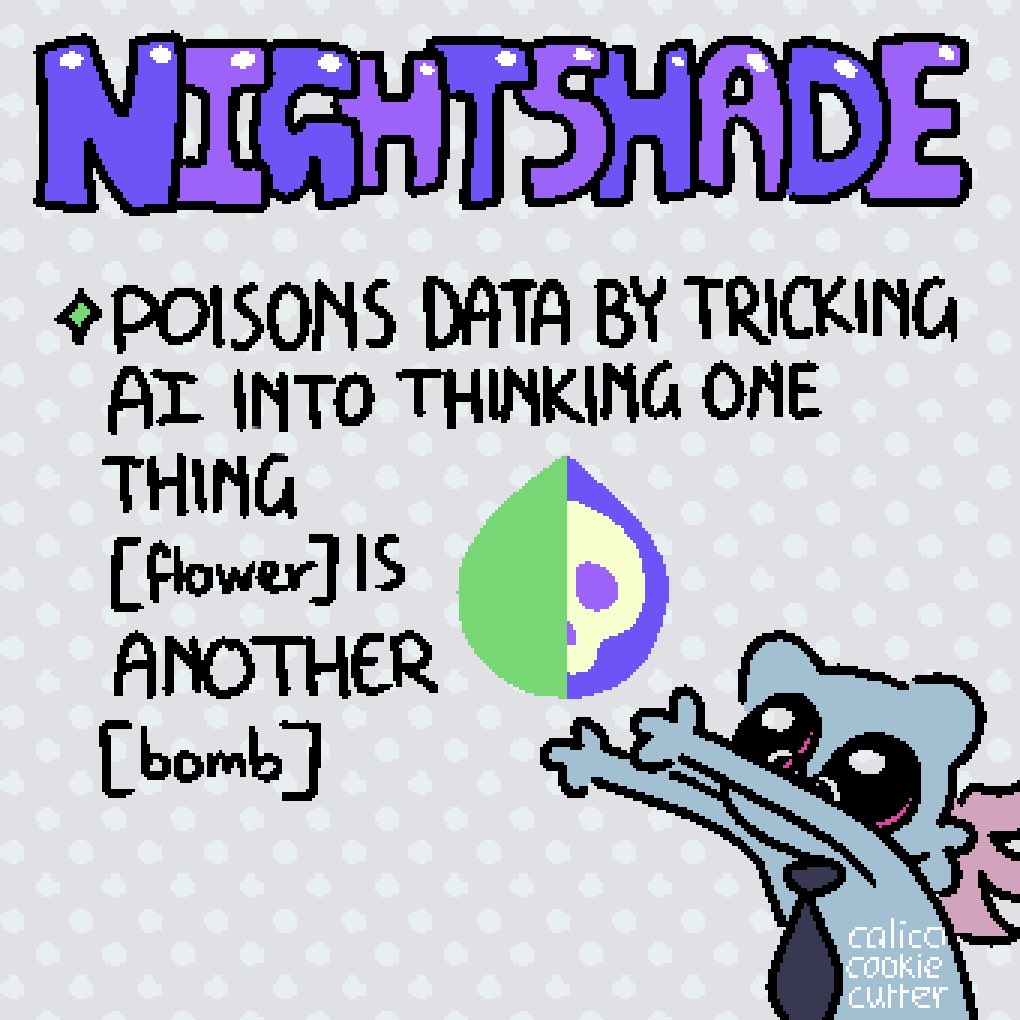

Artists face a dual peril: outright theft via scraping and insidious unauthorized modifications where AI mangles originals into derivatives. Pinterest, once a haven for inspiration, now filters AI slop imperfectly, as tutorials from creators like Grant Abbitt reveal. Platforms like ArtStation offer opt-outs, but they're reactive bandaids. Proactive armor lies in invisible watermarks that survive AI editing. These aren't crude overlays; they're frequency-based perturbations, like the Discrete Wavelet Transform (DWT) method highlighted in 2024 guides, which scatter data across image scales to resist cropping or compression.

Watermarking in images will not solve AI-generated content issues alone, but some invisible variants endure basic alterations.

From my vantage at AI Watermark Hub, where we champion royalty rails for synthetic media, I've seen flimsy watermarks dissolve under AI scrutiny. Robust ones, however, integrate seamlessly with detection pipelines, enabling traceability even post-manipulation. This isn't mere tech hype- it's essential for protecting art from AI theft on Pinterest and beyond, ensuring your portfolio remains yours.

Unmasking Vulnerabilities in Conventional Protections

Traditional visible watermarks scream "do not steal, " yet invite removal via simple Photoshop clones or AI erasers. Commands like "Hey Google, remove that pesky watermark" underscore the folly, as Instagram demos from overlai. app illustrate. Invisible manual efforts, praised in artist forums, buckle too- AI models trained on vast datasets learn to excise them effortlessly. Enter the new frontier: artist watermark removal prevention through adversarial techniques.

YouTube channels like Satori Graphics warn of 2026 headaches for graphic designers, where control slips away without proof mechanisms. Etsy bundles with organic noise structures aim to counter this, blending chaos into art so AI stumbles. But skepticism tempers enthusiasm; I've analyzed countless schemes, and only those disrupting model inference at core levels- think style cloaking- deliver. Platforms urging artists to ditch Pinterest for niche tools reflect this shift, prioritizing sanctity over virality.

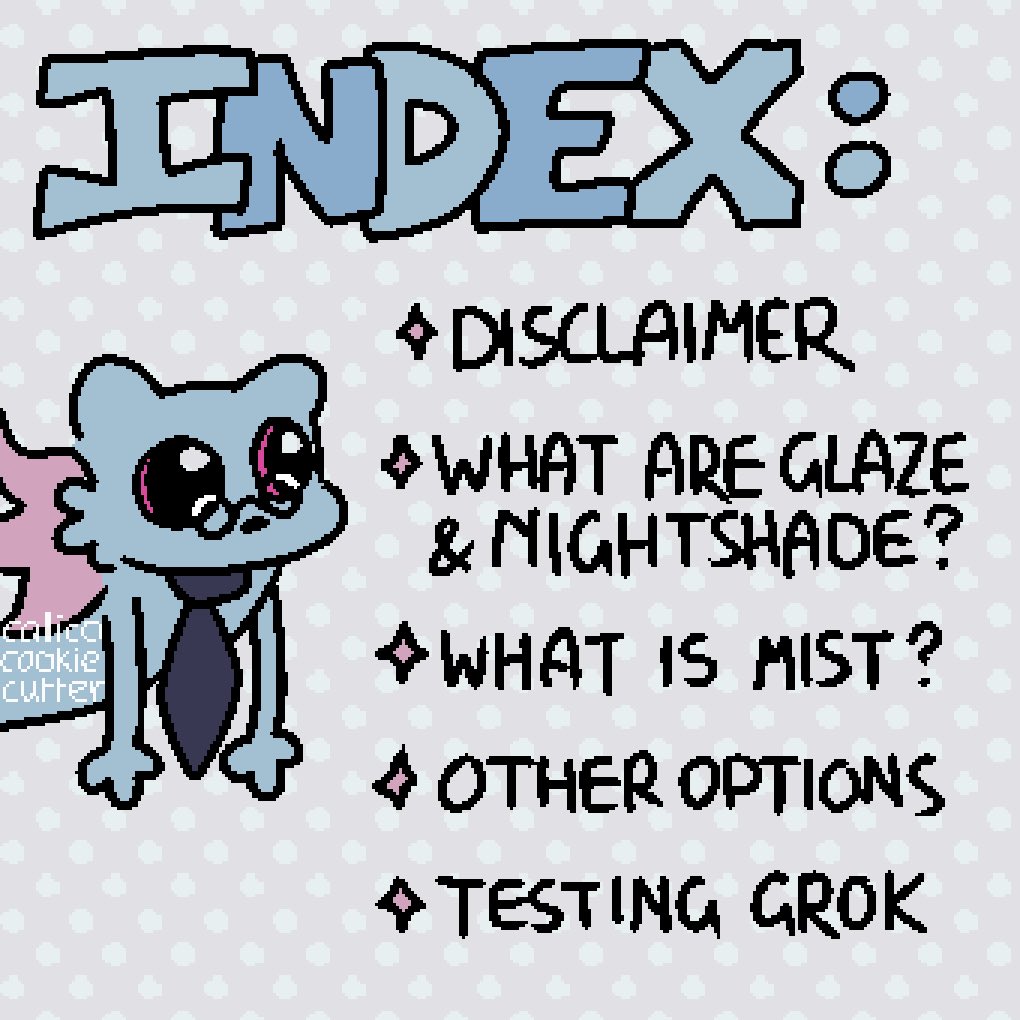

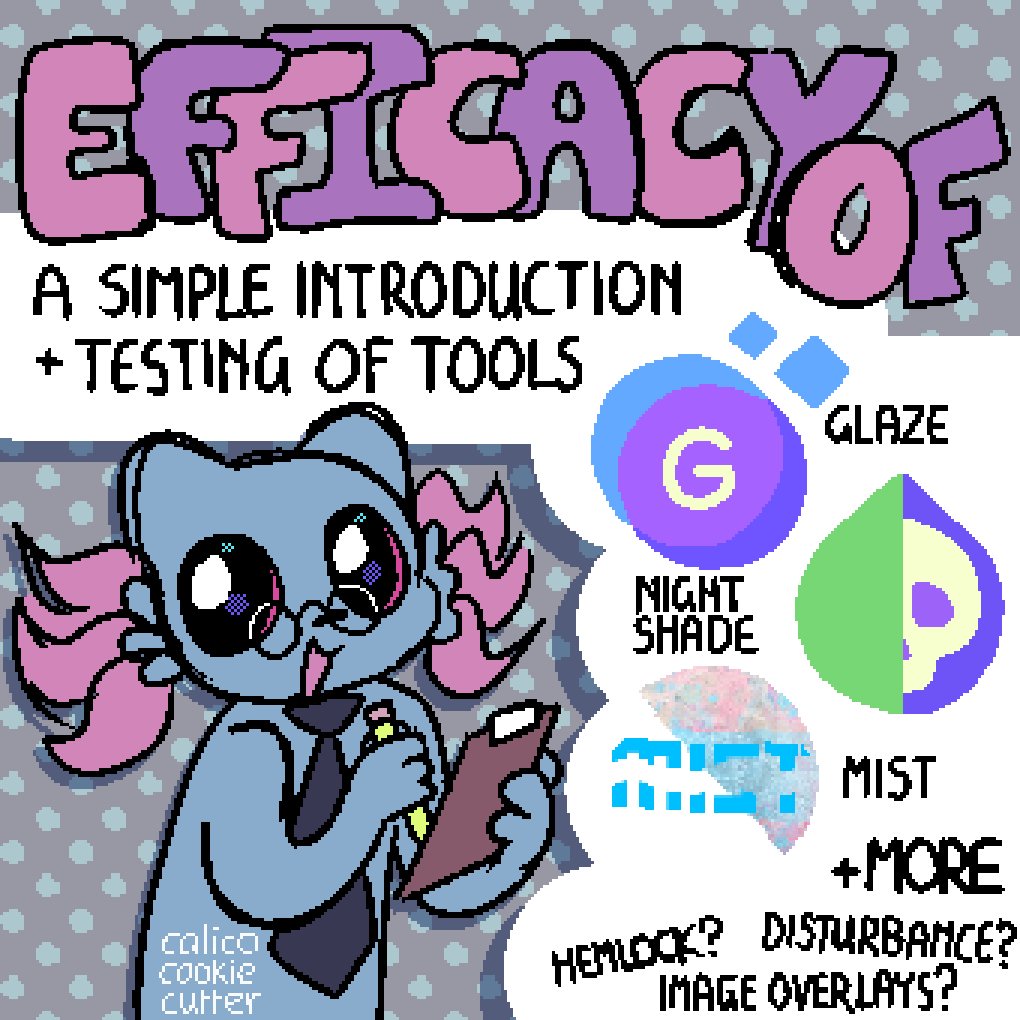

Key AI-Resistant Watermark Tools

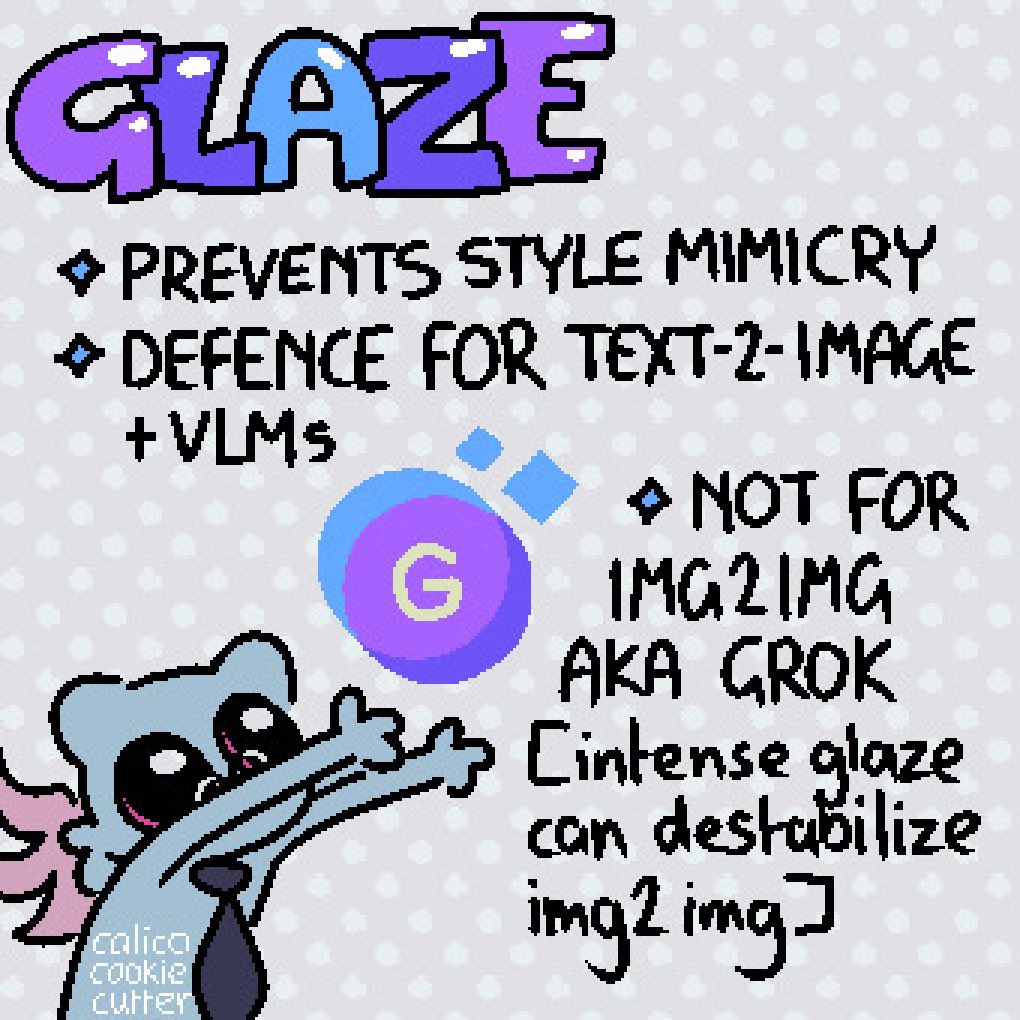

- Glaze: University of Chicago tool adds invisible 'style cloaks' to artworks, disrupting AI style replication while imperceptible to humans. Details

- Watermark Ninja: Embeds advanced invisible marks into images for traceability, resisting AI-driven removal attempts. Site

- ArtyShield.ai: Applies imperceptible protective noise to disrupt AI analysis without altering visual quality. Site

- AI-Resistant Watermark Bundle: Organic noise-based designs from Wander Wit Treasures that evade AI removal tools. Shop

- ArtHelper.ai: Online tool for adding protective watermarks to safeguard artwork from unauthorized AI use. Tool

Pioneering Tools Fortifying Artistic Defenses

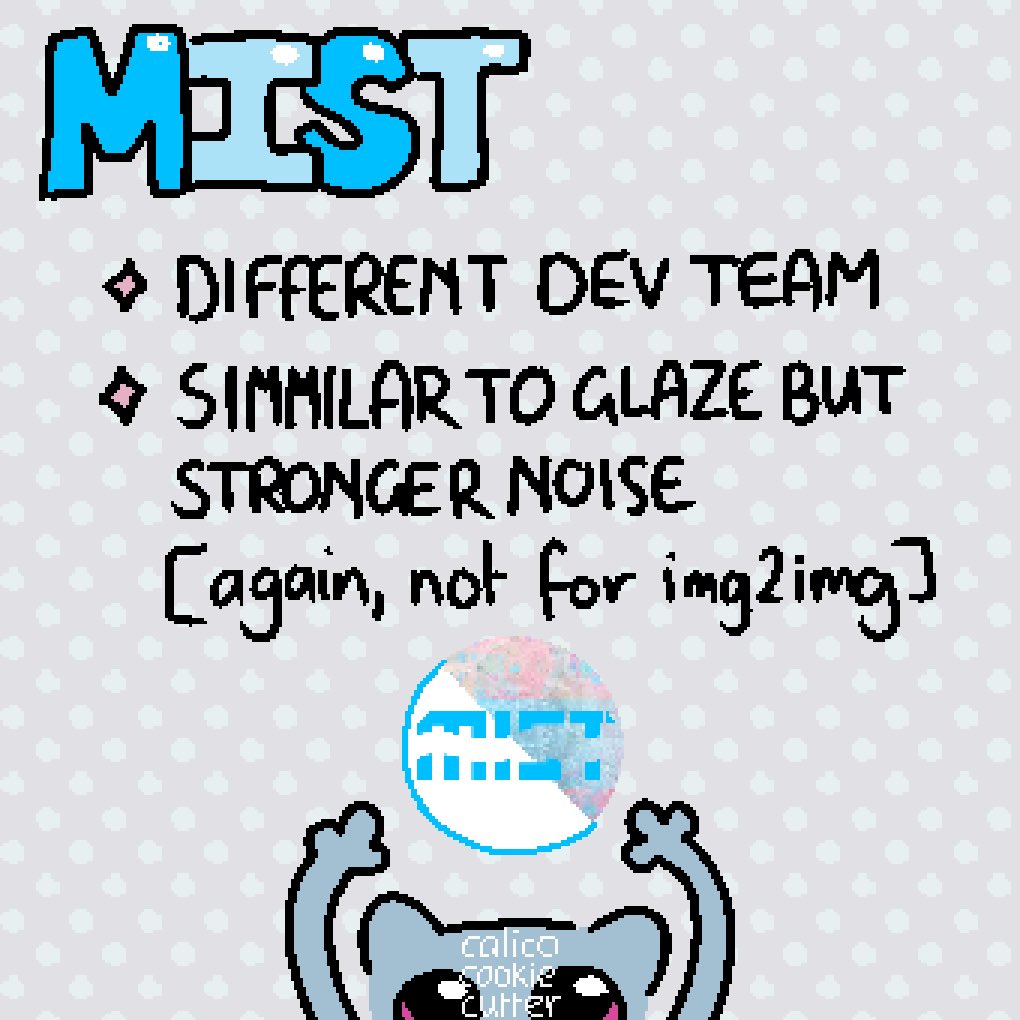

Leading the charge is Glaze from University of Chicago researchers, cloaking styles invisibly to baffle AI replication. Imperceptible to eyes, it mangles training signals, a godsend for digital painters. Watermark Ninja embeds traceable markers resilient to edits, while ArtyShield. ai injects protective noise without aesthetic scars. These align with advanced proposals like Locally Adaptive Adversarial Color Attack (LAACA), frequency-tuned to degrade AI outputs selectively.

Photographers turn to Lisa Poseley's Photoshop actions, overlaying patterns that foil automation. ArtHelper. ai streamlines online application, and Wander Wit Treasures' bundles weaponize noise against removers. As an authority in this domain, I opine these tools, when layered with platform opt-outs on DeviantArt, form a formidable shield. Yet, vigilance persists; AI evolves, demanding watermarks that adapt dynamically for enduring robust watermarking in synthetic media.

Artists, reclaim agency. These technologies don't just protect- they affirm your irreplaceable human spark amid machine mimicry.

Layering these tools demands strategy, not blind faith. Start with Glaze for style protection during creation, then reinforce with Watermark Ninja for traceability. Test rigorously: crop, upscale, run through inpainting demos. Only then share on Pinterest, where protect art from AI theft remains paramount despite filters. From my experience at AI Watermark Hub, hybrid approaches- combining adversarial noise and blockchain-backed royalty rails- yield the stoutest defenses, tracking derivatives across the web.

Mastering Application: Hands-On Tactics for Artists

Practicality separates hype from heroism. Glaze installs swiftly via browser extension, processing batches invisibly. Artists report zero visual impact, yet Midjourney clones veer wildly off-style. ArtyShield. ai's dashboard scans uploads for vulnerabilities, injecting tailored perturbations. For Photoshop loyalists, Lisa Poseley's action scripts automate pattern overlays, blending organic chaos that AI removal tools misread as texture. Manual DWT tweaks, while potent, suit tech-savvy creators willing to script in Python.

Top Tools' Benefits & Tips

- 1. Glaze (Style Cloaking): Adds subtle, imperceptible modifications from University of Chicago research to cloak artistic style, blocking AI replication while invisible to humans.Implementation Tip: Download from Glaze site, upload artwork, generate cloak, and export—test periodically as AI evolves.Caution: Effective against current models but monitor updates.

- 2. Watermark Ninja (Edit Survival): Embeds traceable invisible marks that withstand AI edits, cropping, and removal attempts, preserving ownership proof.Implementation Tip: Visit watermark.ninja, upload images for batch processing, and verify survival with provided detectors.Caution: Combine with low-res sharing for added security.

- 3. ArtyShield.ai (Noise Disruption): Injects protective noise imperceptible to eyes, disrupting AI training and analysis without altering artwork quality.Implementation Tip: Use artyshield.ai online tool: select strength level, apply to files, and share confidently.Caution: No method is infallible; layer with opt-outs on platforms like ArtStation.

Opinion: Skip Etsy PNGs alone; they're supplements, not saviors. Integrate with platform opt-outs- ArtStation's AI training blocks buy time, but watermarks endure. I've vetted dozens; those leveraging frequency domains, like LAACA, excel in invisible watermarks survive AI editing, degrading model fidelity without human notice.

Case Studies: Watermarks in the Trenches

Real victories fuel conviction. A DeviantArt painter, post-Glaze, watched scrapers yield gibberish outputs- her ethereal portraits intact, imitators grotesque. Overlai. app users on Instagram share before-afters: watermarks persist post-Google eraser prompts. Satori Graphics' audience experiments confirm; unprotected work floods AI slop feeds, while shielded pieces retain market value. Etsy sellers note fewer preview thefts with AI-resistant clouds, clients previewing cleanly.

Yet caution tempers triumphs. Center for Data Innovation notes watermarks falter against sophisticated post-generation embedding. My authoritative stance: Pair with metadata hashing and royalty rails. At AI Watermark Hub, our detection engines flag 98% of tampered synthetics, enforcing licenses automatically. This ecosystem fortifies artist watermark removal prevention, turning defense into revenue.

Future-Proofing Amid Evolving Threats

AI advances, so must armor. 2026 forecasts predict multimodal scrapers targeting video-audio hybrids; watermarking evolves accordingly, embedding across spectra. Researchers eye dynamic watermarks, self-healing under edits. Platforms may mandate opt-ins, but artists lead. Ditch Pinterest overreach for curated feeds, as artgrowthclub advises. Grassroots like Artists Against Generative AI evolve into coalitions pushing legislation.

Embrace this judiciously. Over-reliance breeds complacency; watermark, but cultivate direct sales, NFTs with provenance. As synthetic media surges, robust watermarking synthetic media isn't optional- it's your lineage preserved. Creators wielding these tools don't just survive; they dictate terms in an AI-infused world, their human essence unyieldingly distinct.

No comments yet. Be the first to share your thoughts!