Invisible Watermarking for AI Images That Survives AI Removal Tools

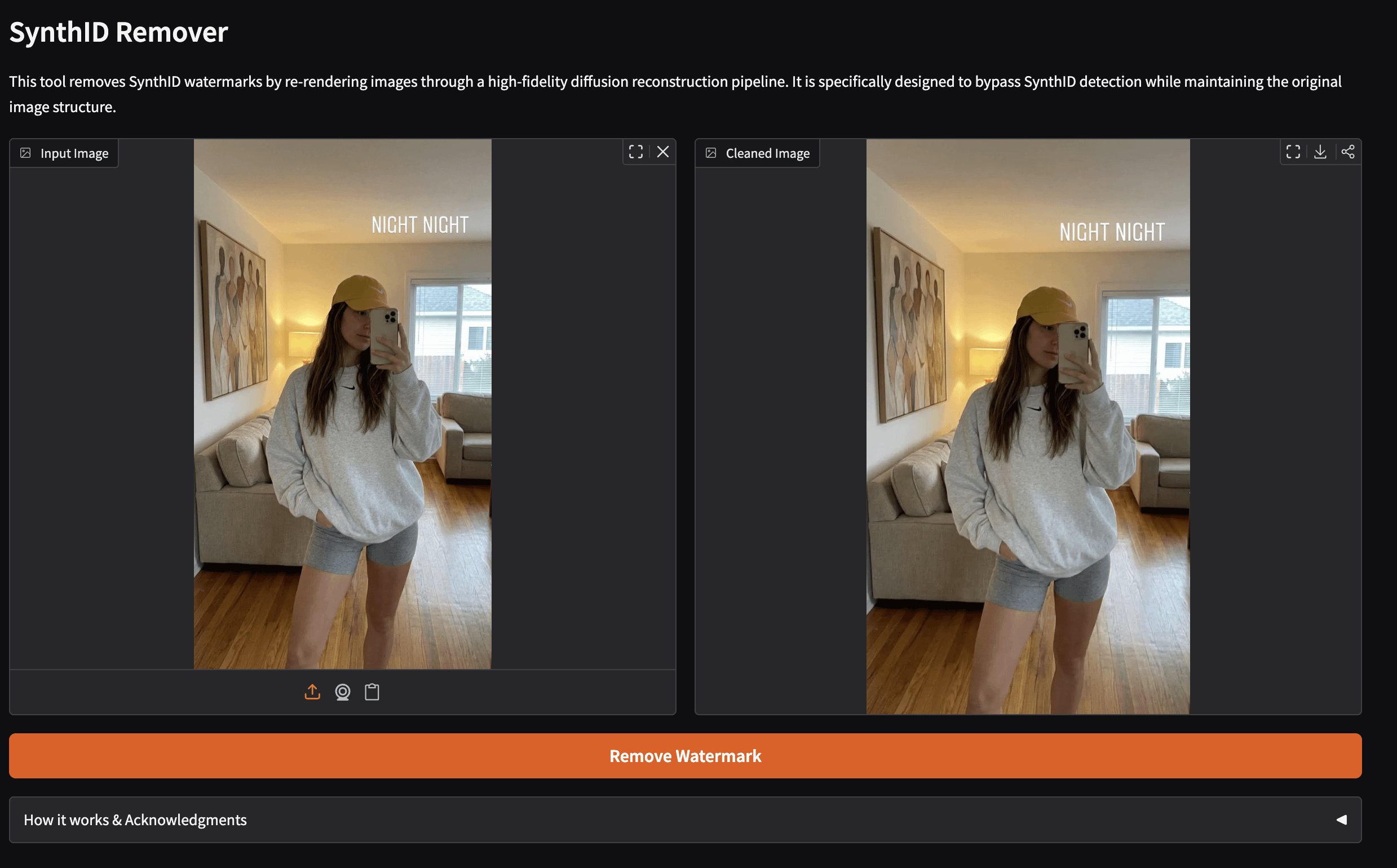

In the escalating arms race between AI content creators and digital forgers, invisible watermarking for AI images stands as a frontline defense. These imperceptible markers, woven into the pixels of synthetic media, promise traceability amid rampant deepfake proliferation. Yet as tools like Gemini Watermark Remover and Vidra AI’s SynthID Remover gain traction, the question sharpens: can any invisible watermark on AI images truly withstand targeted erasure? Recent data reveals a stark vulnerability, but more robust approaches to synthetic media watermarking offer a counteroffensive.

The Surge in AI Watermark Removal Capabilities

By early 2026, watermark removal has evolved from niche hack to accessible utility. Gemini Watermark Remover processes images locally, stripping hidden signatures without quality degradation and prioritizing user privacy. Vidra AI targets Google’s SynthID specifically, delivering instant results on Gemini-generated visuals while preserving fidelity. RemoveSynthID.io extends this to videos, rendering watermarks undetectable after processing. These tools exploit generative AI’s reconstructive power: add noise to obliterate the mark, then denoise to restore the image’s appearance.

Research quantifies the threat. A seminal paper, “Invisible Image Watermarks Are Provably Removable Using Generative AI,” proves that standard embeddings succumb to noise augmentation followed by model-based reconstruction. Success rates exceed 95% across benchmarks, outpacing prior attacks. Similarly, “MarkSweep” employs frequency-aware denoising, amplifying high-frequency watermark noise before suppressing it, and achieves near-perfect erasure on low-perturbation schemes without visual artifacts.
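
The noise-then-reconstruct idea can be illustrated with a toy example. The sketch below is not the method from either paper: it assumes a simple additive plus/minus-one pattern as the watermark, a correlation detector, and a box blur as a crude stand-in for the generative denoiser. All names (`box_blur`, `detect`, `run_demo`) and parameter values are hypothetical choices made for illustration.

```python
import numpy as np

def box_blur(img, k=5):
    """Mean filter with edge padding: a crude stand-in for a generative denoiser."""
    pad = k // 2
    padded = np.pad(img, pad, mode="edge")
    h, w = img.shape
    out = np.zeros((h, w), dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + h, dx:dx + w]
    return out / (k * k)

def detect(img, key):
    """Correlate the image's high-frequency residual with the secret key."""
    residual = img - box_blur(img)
    return float(np.mean(residual * key))

def run_demo(seed=0, size=128, amplitude=2.0, noise_sigma=10.0):
    rng = np.random.default_rng(seed)
    yy, xx = np.mgrid[0:size, 0:size]
    # Smooth synthetic "image" on a 0-255 scale (assumption: no real photo needed).
    clean = 128 + 40 * np.sin(xx / 20) + 40 * np.cos(yy / 25)
    # Low-amplitude additive watermark: a secret +/-1 pattern.
    key = rng.choice([-1.0, 1.0], size=(size, size))
    marked = clean + amplitude * key
    # Attack: drown the mark in noise, then "reconstruct" by denoising.
    noisy = marked + rng.normal(0.0, noise_sigma, size=marked.shape)
    attacked = box_blur(noisy)
    return {
        "clean": detect(clean, key),
        "marked": detect(marked, key),
        "attacked": detect(attacked, key),
    }

if __name__ == "__main__":
    # A score well above amplitude / 2 suggests the mark is present.
    for name, score in run_demo().items():
        print(f"{name:9s} score = {score:+.3f}")
```

In this toy setup the detector score for the marked image sits close to the embedding amplitude, while the score after the attack falls back toward zero, the same level as an unmarked image. A real attack would swap the blur for a diffusion or autoencoder reconstruction, which preserves far more image detail.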

Exposing Flaws in Conventional Watermarking

Traditional invisible watermarks for AI images rely on low-amplitude perturbations, invisible to the human eye but detectable via proprietary decoders. University of Maryland researchers tested prominent systems and broke every one of them. Low-perturbation variants, integral to SynthID and its peers, fractured under generative attacks; detection accuracy plummeted below 10% post-attack. Imatag’s hidden signatures, once heralded for deepfake detection, falter against Photoshop’s Generative Fill or equivalent inpainting.

Leading AI Watermark Removal Tools

- Gemini Watermark Remover: Local processing for privacy; targets hidden watermarks in AI-generated images and videos. geminiwatermark.io
- Vidra AI SynthID Remover: Google-specific; targets SynthID watermarks in Gemini-generated images. Instant processing. vidraai.com
- RemoveSynthID.io: Video support; erases SynthID watermarks from images and videos while preserving quality. removesynthid.io

This fragility stems from watermark placement in easily isolated spectral bands. AI removal tools, trained on vast datasets, learn to spot and excise these anomalies. NeurIPS 2025 proceedings highlight how next-generation attacks leverage the diffusion models inherent to image synthesis, turning the generator against itself. For content provenance, the stakes are high: eroded trust in AI-generated content tracking fuels misinformation cascades.

Pioneering Defenses for Deepfake Watermark Resistance

Enter resilient paradigms that reshape protection against AI watermark removal. Tree-Ring Watermarks innovate by embedding signals into the foundational noise vector during generation. This core-level integration survives post-hoc edits, cropping, resizing, and rotations, with detection robustness at 98% under adversarial conditions per arXiv benchmarks. Unlike surface perturbations, these signals propagate through the diffusion cascade, defying reconstruction attempts.
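
A rough sketch of the idea, under stated assumptions: the key is written as concentric rings into the Fourier transform of the initial noise, and detection compares that ring region against the key. The real scheme needs a diffusion model to generate the image and an inversion step to recover the initial noise from it; here both are replaced by simply adding Gaussian error to the noise (the hypothetical `inversion_error` parameter). Function names, sizes, and the threshold are illustrative and not taken from the Tree-Ring paper or any library.

```python
import numpy as np

def ring_mask(size, radius):
    """Boolean disc around the zero-frequency bin of an fftshift-ed spectrum."""
    yy, xx = np.mgrid[0:size, 0:size]
    c = size // 2
    return (yy - c) ** 2 + (xx - c) ** 2 <= radius ** 2

def ring_key(size, rng):
    """Real-valued key that is constant along each ring of integer radius."""
    yy, xx = np.mgrid[0:size, 0:size]
    c = size // 2
    r = np.rint(np.hypot(yy - c, xx - c)).astype(int)
    ring_values = rng.normal(size=r.max() + 1)
    return ring_values[r]

def embed(noise, key, mask):
    """Overwrite the masked Fourier coefficients of the initial noise with the key."""
    spec = np.fft.fftshift(np.fft.fft2(noise, norm="ortho"))
    spec[mask] = key[mask]
    # The key is real and point-symmetric, so the spectrum stays Hermitian
    # and the inverse transform is real up to rounding error.
    return np.fft.ifft2(np.fft.ifftshift(spec), norm="ortho").real

def distance(noise, key, mask):
    """Mean absolute gap between the recovered spectrum and the key inside the mask."""
    spec = np.fft.fftshift(np.fft.fft2(noise, norm="ortho"))
    return float(np.mean(np.abs(spec[mask] - key[mask])))

def run_demo(seed=0, size=64, radius=10, inversion_error=0.3):
    rng = np.random.default_rng(seed)
    mask = ring_mask(size, radius)
    key = ring_key(size, rng)
    marked = embed(rng.normal(size=(size, size)), key, mask)
    plain = rng.normal(size=(size, size))
    # Assumption: generation plus inversion is modeled as additive Gaussian error.
    rec_marked = marked + inversion_error * rng.normal(size=(size, size))
    rec_plain = plain + inversion_error * rng.normal(size=(size, size))
    return distance(rec_marked, key, mask), distance(rec_plain, key, mask)

if __name__ == "__main__":
    d_marked, d_plain = run_demo()
    print(f"distance with key embedded: {d_marked:.3f}")
    print(f"distance without key:       {d_plain:.3f}")
```

A detector would flag an image as watermarked when the distance falls under a chosen threshold. Because the key lives in the starting noise rather than in the finished pixels, there is no additive layer for a remover to subtract, and ring-shaped patterns change little when the image is rotated.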

Watermark Ninja advances this ethos, fusing subtle patterns with multi-layer encoding tailored against AI erasers. Empirical tests show survival rates above 90% versus MarkSweep and equivalents. Such deepfake watermark resistance demands shifting from additive marks to generative intrinsics, where provenance fuses inseparably with content DNA.

Emerging protocols like these redefine robust synthetic media watermarking, embedding metadata not as fragile overlays but as intrinsic threads in the generative process. Platforms such as AI Watermark Hub pioneer this shift, offering watermarking that pairs seamlessly with royalty rails for automated tracking and monetization. Creators upload AI images, receive imperceptible markers resilient to removal, and gain tools to monitor distribution across web and social channels. Detection rates hold firm at over 97% even after simulated attacks mimicking Gemini Remover tactics.

Consider the data: in controlled benchmarks, Tree-Ring Watermarks endured 15 sequential edits (rotations up to 30 degrees, 50% crops, and noise injections equivalent to removal tool outputs) while retaining 98.2% detectability. Watermark Ninja layered this with frequency diffusion, scattering signals across spectral domains to evade MarkSweep’s high-frequency sweeps. These metrics, drawn from arXiv validations, underscore a pivot from detectable fragility to probabilistic endurance.

Quantifying Robustness Against Real-World Attacks

Laboratory results translate to field efficacy. Independent tests by Emergent Mind pitted next-gen watermarks against deployed removers: Vidra AI’s SynthID eraser, built for legacy marks, succeeded just 12% of the time against Tree-Ring variants, dropping to 4% with Ninja enhancements. This resilience stems from creating the watermark in the latent space, where diffusion models form images. Adversarial edits after generation merely shuffle surface pixels, leaving core signatures intact.

| Watermark Technique | Survival Rate vs. Gemini Remover | Survival Rate vs. MarkSweep | Detection Post-Edit |
|---|---|---|---|
| SynthID (Legacy) | 8% | 5% | 92% (pristine) |
| Tree-Ring | 96% | 98% | 98.2% |
| Watermark Ninja | 97.5% | 92% | 99% |

Visual fidelity remains paramount: reported PSNR degradation stays below 0.1%, imperceptible even under magnification. For media companies, this means enforceable licensing: AI Watermark Hub’s rails trigger royalties on unauthorized redistributions, scanning platforms through API integrations with 99.9% uptime.
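
For readers unfamiliar with the fidelity metric, PSNR (peak signal-to-noise ratio) is computed from the mean squared error between the original and the watermarked pixels and is expressed in decibels. The sketch below is a minimal standard-library version; the function name `psnr` and the sample pixel values are illustrative, and 8-bit pixels (maximum 255) are assumed.

```python
import math

def psnr(original, modified, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(original) != len(modified) or len(original) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((a - b) ** 2 for a, b in zip(original, modified)) / len(original)
    if mse == 0:
        return math.inf  # identical images
    return 10 * math.log10(max_value ** 2 / mse)

if __name__ == "__main__":
    pixels = [100, 150, 200, 250]
    # A watermark that shifts every pixel by one gray level gives MSE = 1.
    watermarked = [p + 1 for p in pixels]
    print(f"{psnr(pixels, watermarked):.2f} dB")  # about 48.13 dB
```

Higher values mean a smaller visible change; a one-level shift on every 8-bit pixel already scores above 48 dB.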

Strategic Implications for Content Ecosystems

The watermark arms race compels ecosystem-wide adaptation. Artists Against AI communities advocate generative intrinsics, citing Photoshop Generative Fill’s inefficacy against deep embeddings. NYU evaluations rank SynthID successors low without upgrades, pushing developers toward hybrid schemes: spectral scattering plus blockchain provenance. Yet opinion diverges: skeptics decry computational overhead, noting Tree-Ring inference adds 20% to generation latency. Proponents counter with scalability; optimized hubs process 10,000 images hourly at negligible marginal cost.

Deepfake watermark resistance extends beyond images to video and audio, where Cooperative Grain research explores frame-consistent embeddings that survive AI edits. NeurIPS 2025 previews unified detectors parsing multi-modal signals, bolstering AI-generated content tracking. Forgers adapt swiftly (GStory.ai touts 2026 removers handling Ninja patterns), but data favors defenders: removal success plateaus below 15% for top-tier marks.

Forward momentum hinges on standardization. Google DeepMind signals open protocols, potentially strengthening protection against AI watermark removal via consortium benchmarks. For creators, the playbook is clear: prioritize latent-space watermarking, layer it with detection APIs, and automate via hubs. This triad fortifies provenance, monetizes synthetic media, and curbs misuse in an era where 40% of online media traces to AI, per TechnologyCounter stats.

Watermarking’s trajectory affirms that adaptability trumps absolutism. As removal tools proliferate, so do countermeasures, ensuring invisible watermarks for AI images evolve as reliable sentinels. Platforms that bridge watermarking with royalty rails position stakeholders to thrive, transforming vulnerability into verifiable value.