Invisible Watermarking for AI-Generated Videos: Track Royalties Without Visible Markers

In the flood of AI-generated videos reshaping content creation, creators face a stark reality: how do you prove ownership and collect royalties without slapping an ugly logo on your masterpiece? Enter invisible watermarking for AI-generated videos, a stealthy technology that embeds digital fingerprints right into the pixels, letting you track usage across the web while keeping visuals pristine. This isn’t sci-fi; synthetic media watermarking is a pragmatic shield in 2026, when deepfakes blur truth and any viral clip could be yours gone rogue.

Picture this: you craft a stunning promo video with tools like Sora or Runway, release it into the wild, and suddenly it’s repurposed on TikTok, YouTube, even news sites. Without markers, royalties vanish into thin air. Invisible watermarks solve that by altering frames at a level the human eye ignores but detectors latch onto. Subtle tweaks to pixel values or frequency-domain coefficients create a unique ID, designed to survive the compression, crops, and filters that defeat traditional methods.
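To make the "subtle tweaks to pixel values" idea concrete, here is a minimal sketch of a classic additive spread-spectrum mark on a single grayscale frame. It is a toy under stated assumptions, not the method used by any tool named in this article: the function names, the key, the strength value, and the synthetic stand-in frame are all hypothetical.

```python
import numpy as np

def keyed_pattern(key: int, shape: tuple) -> np.ndarray:
    """Pseudorandom +1/-1 pattern derived from a secret key (hypothetical scheme)."""
    rng = np.random.default_rng(key)
    return rng.integers(0, 2, size=shape) * 2.0 - 1.0

def embed(frame: np.ndarray, key: int, strength: float = 2.0) -> np.ndarray:
    """Add a low-amplitude keyed pattern to a grayscale frame with values in 0..255."""
    marked = frame.astype(np.float64) + strength * keyed_pattern(key, frame.shape)
    return np.clip(marked, 0, 255)

def detect(frame: np.ndarray, key: int) -> float:
    """Correlate the frame with the keyed pattern; a score near `strength` suggests a match."""
    centered = frame.astype(np.float64) - frame.mean()
    return float((centered * keyed_pattern(key, frame.shape)).mean())

def psnr(original: np.ndarray, marked: np.ndarray) -> float:
    """Peak signal-to-noise ratio in dB; higher means the change is harder to see."""
    mse = np.mean((original.astype(np.float64) - marked) ** 2)
    return float(10 * np.log10(255.0 ** 2 / mse))

# Stand-in for a real frame: mid-gray noise (an assumption, not real footage).
rng = np.random.default_rng(0)
frame = rng.integers(96, 160, size=(256, 256)).astype(np.float64)
marked = embed(frame, key=1234)

print("right key:", detect(marked, key=1234))
print("wrong key:", detect(marked, key=9999))
print("unmarked :", detect(frame, key=1234))
print("PSNR (dB):", psnr(frame, marked))
```

Only the holder of the key can regenerate the pattern, so the correlation score separates marked from unmarked frames. Real footage has far more structure than this noise frame, which is one reason production systems use learned embedders rather than a fixed pattern.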

Core Principles Driving Effective Invisible Watermarks

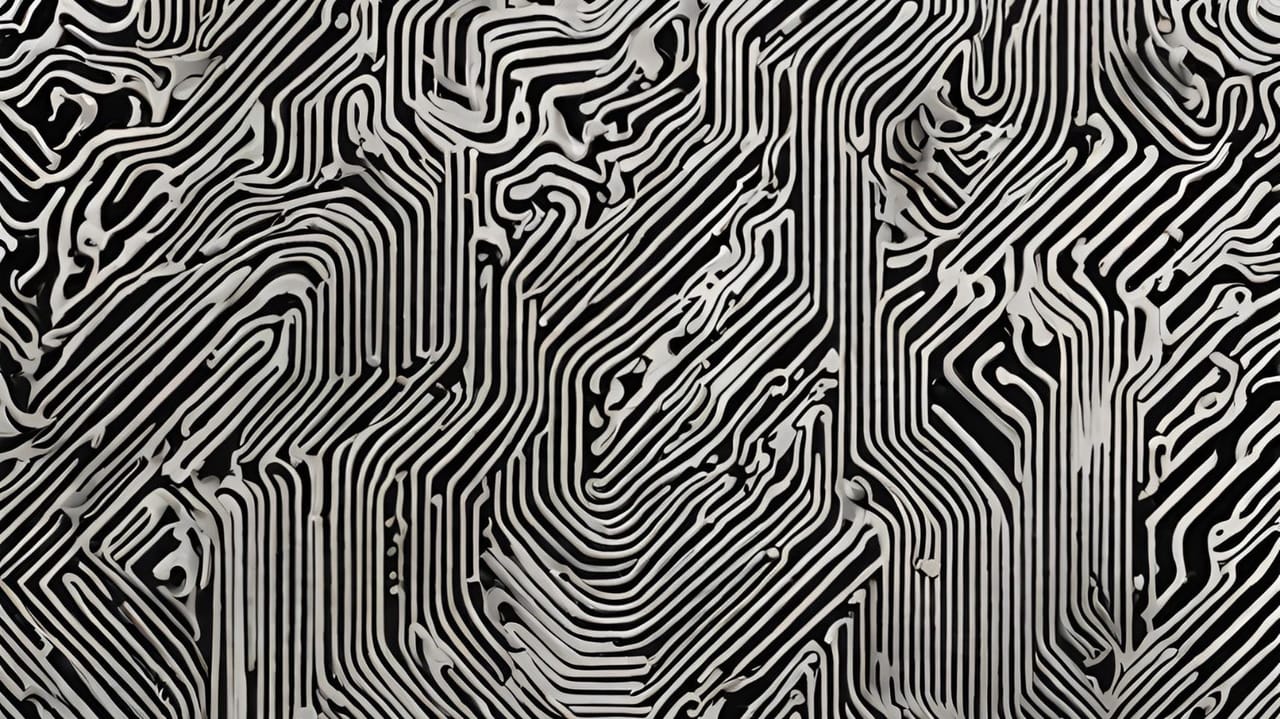

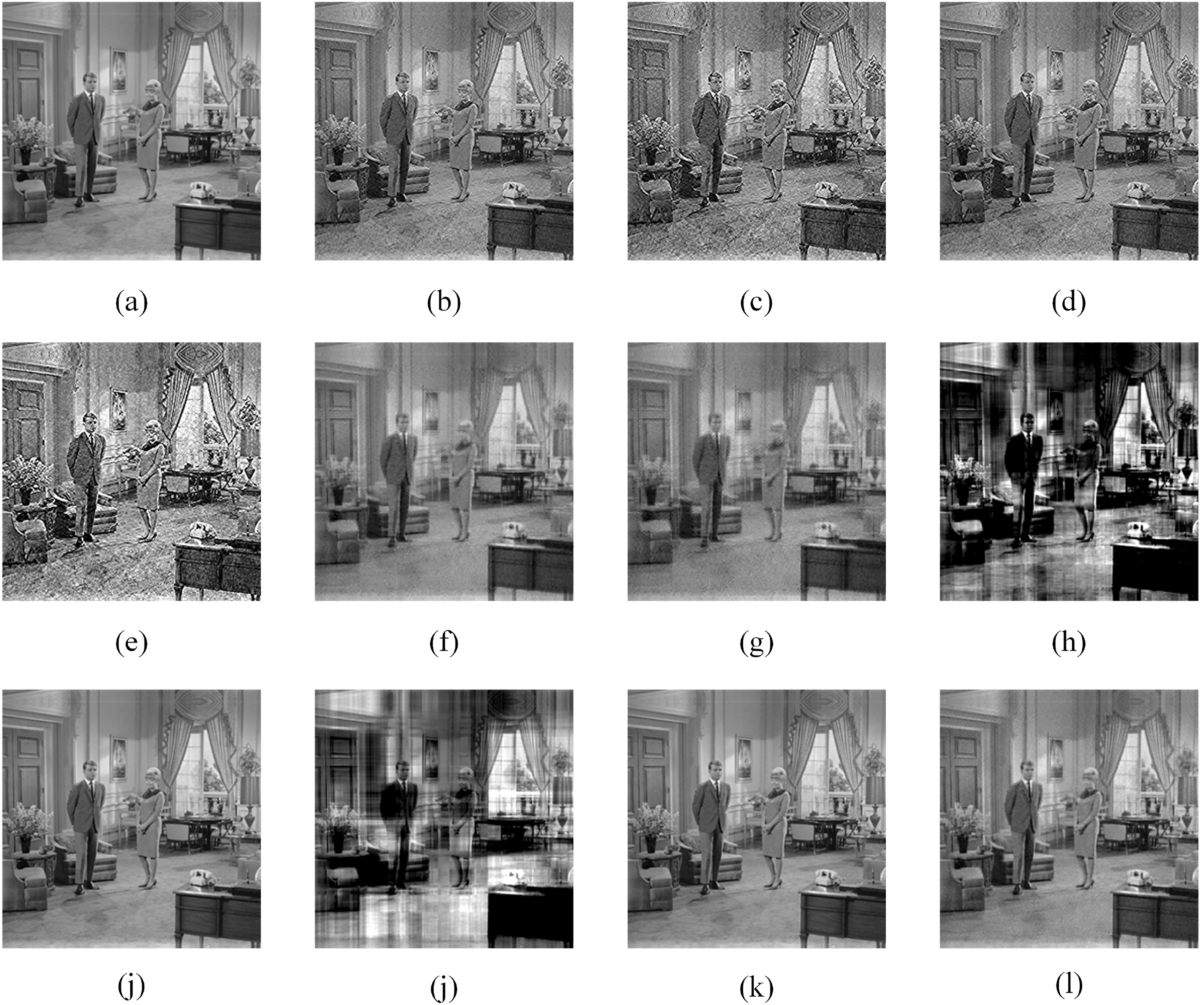

Robust invisible watermarking for AI video rests on three pillars: invisibility, robustness, and traceability. Invisibility demands no perceptible visual disruption, preserving the media’s allure. Robustness means enduring real-world abuse, from heavy compression to AI re-edits. Traceability ties the clip back to you, encoding creator IDs, timestamps, or licensing terms for AI video royalty tracking.

Core Principles of Invisible Watermarking

- Invisibility: Embeds imperceptible signals by subtly modifying pixel values, ensuring no visible artifacts and full preservation of video quality for seamless viewing.
- Robustness: Withstands common manipulations like cropping, blurring, compression, and edits, as seen in tools like Meta’s Video Seal.
- Traceability: Encodes unique identifiers for origin detection and ownership proof, enabling royalty tracking even after redistribution.
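The traceability pillar comes down to deciding which bits the watermark carries. A rough sketch of one way to pack a creator ID, a timestamp, and a license code into a checksummed bit string follows. The 18-byte layout, the field sizes, and the function names are assumptions made for illustration; none of the tools discussed here is known to use this format.

```python
import struct
import zlib

# Hypothetical layout: creator ID (uint32), Unix timestamp (uint64),
# license code (uint16), followed by a CRC32 of those 14 bytes.
_LAYOUT = ">IQH"

def pack_payload(creator_id: int, issued_at: int, license_code: int) -> bytes:
    """Serialize the fields and append a checksum so corrupted reads are rejected."""
    body = struct.pack(_LAYOUT, creator_id, issued_at, license_code)
    return body + struct.pack(">I", zlib.crc32(body))

def unpack_payload(payload: bytes) -> tuple:
    """Return (creator_id, issued_at, license_code) or raise if the checksum fails."""
    body = payload[:-4]
    (crc,) = struct.unpack(">I", payload[-4:])
    if zlib.crc32(body) != crc:
        raise ValueError("checksum mismatch: payload is corrupted or not ours")
    return struct.unpack(_LAYOUT, body)

def to_bits(payload: bytes) -> list:
    """Expand bytes into a most-significant-bit-first list of 0/1 for an embedder."""
    return [(byte >> shift) & 1 for byte in payload for shift in range(7, -1, -1)]

payload = pack_payload(creator_id=42, issued_at=1767225600, license_code=7)
print(len(to_bits(payload)), "bits")
print(unpack_payload(payload))
```

The checksum matters because a decoder reading a damaged clip will still return some bits; without it, a garbled payload could be mistaken for a valid creator ID.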

Meta’s engineering teams have shown this works at scale by modulating pixel intensities imperceptibly across frames. It’s not magic; it’s math. Audio watermarks treat waveforms in a similar way, but video raises the difficulty with motion and lighting shifts. Pragmatically, this lets platforms like AI Watermark Hub scan uploads to verify origins and trigger payouts.
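One way to see why spreading a mark across frames helps: the scene changes from frame to frame while the mark stays put, so averaging frames before correlating suppresses the content and keeps the signal. The sketch below is a hand-rolled illustration with hypothetical names and synthetic frames, not Meta’s approach, which relies on a trained embedder and extractor.

```python
import numpy as np

def bit_patterns(key: int, n_bits: int, shape: tuple) -> np.ndarray:
    """One keyed +1/-1 pattern per payload bit (hypothetical scheme)."""
    rng = np.random.default_rng(key)
    return rng.integers(0, 2, size=(n_bits, *shape)) * 2.0 - 1.0

def embed_bits(frames: np.ndarray, bits: list, key: int, strength: float = 1.0) -> np.ndarray:
    """Add the same signed sum of bit patterns to every frame of a (T, H, W) clip."""
    signs = 2.0 * np.asarray(bits, dtype=np.float64) - 1.0
    patterns = bit_patterns(key, len(bits), frames.shape[1:])
    signal = strength * np.tensordot(signs, patterns, axes=1)  # shape (H, W)
    return np.clip(frames + signal, 0, 255)

def decode_bits(frames: np.ndarray, n_bits: int, key: int) -> list:
    """Average the frames, then read each bit from the sign of its correlation."""
    patterns = bit_patterns(key, n_bits, frames.shape[1:])
    mean_frame = frames.mean(axis=0)
    centered = mean_frame - mean_frame.mean()
    scores = (patterns * centered).mean(axis=(1, 2))
    return [int(score > 0) for score in scores]

# Synthetic 16-frame clip standing in for real footage (an assumption).
rng = np.random.default_rng(1)
clip = rng.integers(96, 160, size=(16, 64, 64)).astype(np.float64)
bits = [1, 0, 1, 1, 0, 0, 1, 0]
marked_clip = embed_bits(clip, bits, key=2026)
print(decode_bits(marked_clip, len(bits), key=2026))
```

A static shot would not benefit from the averaging step, which hints at why motion and lighting shifts cut both ways for video watermarking.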

Breakthrough Tools Reshaping Video Provenance

The years 2024 to 2026 saw an explosion of tools tailored for video. Meta released Video Seal in December 2024, an open-source model that embeds a watermark into a video’s frames. It shrugs off blurring, cropping, and VP9 compression, proving that a deepfake protection watermark isn’t theoretical. Researchers followed with Safe-Sora, which bakes graphical codes into diffusion-based video generation via adaptive matching, so the watermark persists in what the model generates.

Tree-Ring Watermarks, introduced for diffusion-generated images, take it further, structuring signals in Fourier space for invariance to rotations or flips. These aren’t band-aids; they’re foundational shifts. Yet I’m wary: Hive Moderation notes that watermarks falter under extreme AI regeneration or lossy pipelines. Standardization lags, too, leaving Google’s SynthID incompatible with Meta’s schemes. Still, for royalty rails, this trio offers a workflow win: generate, embed, distribute, collect.
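The intuition behind ring-shaped Fourier patterns can be checked in a few lines. Flipping or rotating an image by 90 degrees rearranges its spectrum without changing how much energy sits at each radius, so a ring-shaped statistic survives. The toy below works directly on a square image and is not the Tree-Ring algorithm itself, which places its pattern in the Fourier transform of the diffusion model’s initial noise; the ring radii, the gain, and the function names are assumptions.

```python
import numpy as np

def ring_mask(size: int, r_low: float, r_high: float) -> np.ndarray:
    """Boolean mask selecting spatial frequencies whose radius lies in [r_low, r_high)."""
    freqs = np.fft.fftfreq(size)
    radius = np.hypot(freqs[:, None], freqs[None, :])
    return (radius >= r_low) & (radius < r_high)

def ring_energy(image: np.ndarray, r_low: float = 0.10, r_high: float = 0.15) -> float:
    """Total spectral energy of a square image inside the ring."""
    spectrum = np.abs(np.fft.fft2(image))
    return float((spectrum[ring_mask(image.shape[0], r_low, r_high)] ** 2).sum())

def boost_ring(image: np.ndarray, gain: float = 0.5,
               r_low: float = 0.10, r_high: float = 0.15) -> np.ndarray:
    """Toy 'embedding': scale the ring's Fourier coefficients by (1 + gain)."""
    spectrum = np.fft.fft2(image)
    spectrum[ring_mask(image.shape[0], r_low, r_high)] *= 1.0 + gain
    return np.fft.ifft2(spectrum).real

rng = np.random.default_rng(2)
image = rng.random((64, 64))
marked = boost_ring(image)

print("energy ratio after boost:", ring_energy(marked) / ring_energy(image))
print("flip   :", np.isclose(ring_energy(np.fliplr(marked)), ring_energy(marked)))
print("rotate :", np.isclose(ring_energy(np.rot90(marked)), ring_energy(marked)))
```

Arbitrary-angle rotations and crops involve resampling, so the equality there is only approximate; the exact invariance shown here holds for flips and quarter turns.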

Royalties in the Age of Imperceptible Markers

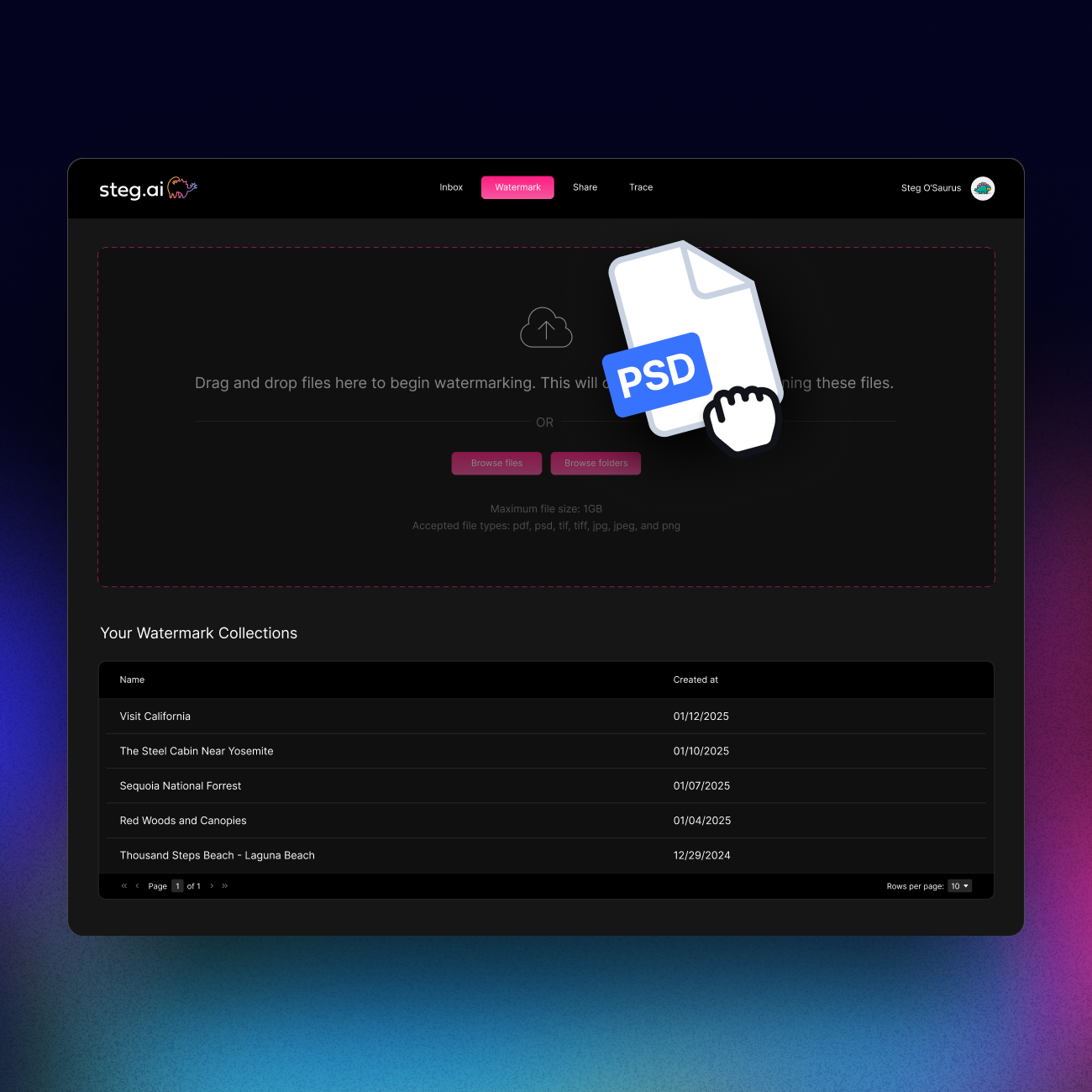

Monetizing AI videos demands more than detection; it requires reliable tracking. Imperceptible media markers make it possible to log every embed, share, and remix on blockchain-backed rails. Platforms can then auto-detect your watermark, enforce licenses, and route micro-payments, with no more chasing pirates manually. India’s MeitY rules from February 2026 mandate such disclosures, signaling global momentum. But here’s the pragmatic caveat: the US Copyright Office denies protection to purely AI-generated outputs that lack human authorship, muddying enforceability. Does your watermark hold legal weight? It hinges on hybrid creation.

These tools empower creators pragmatically. Video Seal integrates into pipelines with little friction, while frameworks like Safe-Sora future-proof against Sora evolutions. Challenges persist, sure: the detection arms race intensifies as adversaries craft removers. Yet the trajectory points to ubiquitous synthetic media watermarking, where royalties flow as reliably as streams.

To harness this power pragmatically, creators must navigate implementation hurdles head-on. Start by embedding watermarks at the generation stage, where schemes like Safe-Sora build the mark into the diffusion pipeline itself. Post-generation embedding, the route Video Seal takes, works too, but it may prove less robust against savvy adversaries. Pair this with blockchain rails for immutable ledgers of usage, turning every detection into a royalty ping. AI Watermark Hub excels here, fusing AI video royalty tracking with scalable detection that scans platforms in real time.
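The "immutable ledger" idea does not require a full blockchain to illustrate. A hash chain, where each entry commits to the one before it, already makes silent edits detectable. The sketch below is a minimal in-memory version; the event fields and function names are hypothetical and do not describe any platform’s actual API.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry's predecessor

def _digest(prev_hash: str, event: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the previous hash and the event."""
    body = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()

def append_event(ledger: list, event: dict) -> dict:
    """Append a detection event, chaining it to the previous entry's hash."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    entry = {"prev": prev_hash, "event": event, "hash": _digest(prev_hash, event)}
    ledger.append(entry)
    return entry

def verify(ledger: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in ledger:
        if entry["prev"] != prev_hash or _digest(entry["prev"], entry["event"]) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True

ledger = []
append_event(ledger, {"creator_id": 42, "platform": "example-site", "views": 1000})
append_event(ledger, {"creator_id": 42, "platform": "example-app", "views": 250})
print(verify(ledger))
```

A public chain adds what this sketch lacks: independent parties holding copies, so nobody can quietly rewrite the whole list and recompute the hashes.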

Pitfalls and Pragmatic Counters to Watermark Weaknesses

Invisible watermarks aren’t invincible; heavy compression can strip signals, and AI remixing tools like newer Sora variants can obliterate them. Hive’s analysis underscores this: no single method reigns supreme against all attacks. My take? Layer defenses. Combine Tree-Ring’s Fourier invariance with Safe-Sora’s generative embedding for hybrid resilience. Detection demands specialized decoders too, trained on watermark variants to outpace removers. Platforms counter by watermarking uploads proactively, creating ecosystems where a deepfake protection watermark becomes the default.
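Layering has a cost worth quantifying: every extra detector is another chance to fire on unmarked content. A small sketch of an "any detector fires" rule and its combined false-positive rate follows, assuming the detectors err independently. The detector names, scores, thresholds, and rates are made up for illustration.

```python
import math

def layered_detect(scores: dict, thresholds: dict) -> bool:
    """Flag a clip if any detector's score reaches its own threshold."""
    return any(scores[name] >= thresholds[name] for name in thresholds)

def combined_false_positive_rate(rates: list) -> float:
    """P(at least one detector fires on unmarked content), assuming independence."""
    return 1.0 - math.prod(1.0 - rate for rate in rates)

# Hypothetical detectors and values, not measurements of any real tool.
thresholds = {"fourier_ring": 0.8, "generative": 0.9}
survived_crop = {"fourier_ring": 0.85, "generative": 0.40}
unmarked = {"fourier_ring": 0.10, "generative": 0.20}

print(layered_detect(survived_crop, thresholds))
print(layered_detect(unmarked, thresholds))
print(combined_false_positive_rate([0.001, 0.001]))
```

Two detectors at a 0.1% false-positive rate each land just under 0.2% combined, so thresholds may need tightening as layers are added.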

Comparison of Top Invisible Watermarking Tools

| Tool | Key Strength | Open Source | Robustness |
|---|---|---|---|
| Video Seal | Resilient to compression, cropping, blurring | Yes | High |
| Safe-Sora | Generative integration, robust to manipulations | No | High |
| Tree-Ring | Transformation invariance (cropping, rotations, flips) | No | High |
| SynthID | Multi-modal support (images, audio, text) | No | High |

This table highlights why no tool fits all; pick per pipeline. Video Seal shines for open-source accessibility, while SynthID’s breadth suits enterprises. Standardization efforts, like potential ISO specs brewing in 2026, could unify decoders, slashing compatibility woes.

From Research to Real-World Royalties: A Timeline

Tracing the arc reveals accelerating maturity. Early pixel tweaks evolved into model-native embeddings, propelled by regulatory nudges. India’s MeitY rules mandate disclosures, echoing calls from creatives for mandatory imperceptible media markers. US policymakers grapple with AI copyright voids, but watermark provenance bolsters fair use claims in hybrids.

These markers don’t just track; they authenticate narratives in an era where videos sway elections and brands. Dr. Ryan Ries notes that deepfakes fool eyes, but watermarks pierce the veil technically. For royalties, imagine auto-audits: a clip hits 1M views and its watermark triggers 0.01% micropayments, pooled on payment rails. Creators reclaim value lost to virality without constant vigilance.
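The auto-audit arithmetic is easy to sketch, with one loud caveat: the article does not say what the 0.01% applies to. The example assumes it is a share of the revenue attributed to the clip, and the revenue-per-view figure is an invented placeholder, not market data.

```python
from decimal import Decimal, ROUND_HALF_UP

def royalty_due(views: int, revenue_per_view: Decimal, royalty_rate: Decimal) -> Decimal:
    """Royalty = views x revenue per view x rate, rounded to cents."""
    gross = Decimal(views) * revenue_per_view
    return (gross * royalty_rate).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)

# Assumptions: 0.01% is a revenue share; 0.002 per view is a made-up placeholder.
payout = royalty_due(
    views=1_000_000,
    revenue_per_view=Decimal("0.002"),
    royalty_rate=Decimal("0.0001"),  # 0.01% expressed as a fraction
)
print(payout)
```

Decimal arithmetic avoids the rounding drift that floats introduce, which matters once thousands of tiny payouts are pooled.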

Challenges fuel innovation. Adversarial training hardens watermarks against removers, while cryptographic signatures layer metadata atop pixels. Meta’s scale proves feasibility; billions of frames watermarked imperceptibly. Yet, adoption lags among independents, wary of compute costs. Pragmatism dictates starting small: watermark portfolios now, scale with hubs like ours.

Legal evolution tips the scales. As courts recognize provenance chains, watermarks morph from tech gimmick to evidentiary gold. Pair with human oversight in creation, sidestepping pure-AI copyright pitfalls. Forward thinkers integrate across modalities, watermarking video-audio-text trios cohesively. Detection arms races rage, but layered synthetic media watermarking tilts toward creators. Platforms enforcing it gain trust, users authenticity. The streams of royalties? They’re flowing steadier, pixel by pixel, claim by claim.